Lambda Architecture using Azure #CosmosDB: Faster performance, Low TCO, Low DevOps

Azure Cosmos DB provides a scalable database solution that handles both batch and real-time ingestion and querying, which lets developers implement lambda architectures with low TCO. Lambda architectures enable efficient processing of massive data sets: they combine batch processing, stream processing, and a serving layer to minimize the latency involved in querying big data.

To implement a lambda architecture, you can use a combination of the following technologies to accelerate real-time big data analytics:

- Azure Cosmos DB, the industry’s first globally distributed, multi-model database service.

- Apache Spark for Azure HDInsight, a processing framework that runs large-scale data analytics applications.

- Azure Cosmos DB change feed, which streams new data to the batch layer for HDInsight to process.

- The Spark to Azure Cosmos DB Connector.

We wrote a detailed article that describes the fundamentals of a lambda architecture based on the original multi-layer design and the benefits of a “rearchitected” lambda architecture that simplifies operations.

What is a lambda architecture?

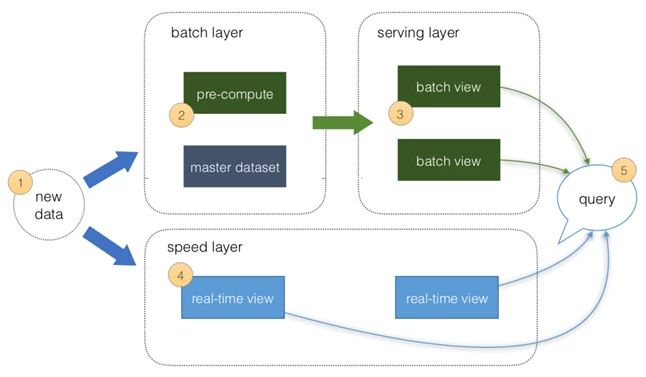

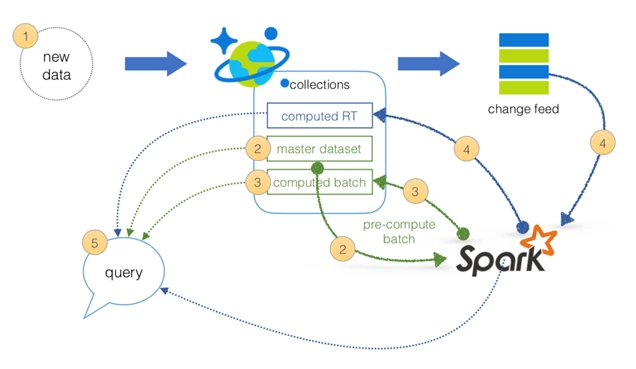

The basic principles of a lambda architecture are depicted in the figure above:

- All data is pushed into both the batch layer and speed layer.

- The batch layer has a master dataset (immutable, append-only set of raw data) and pre-computes the batch views.

- The serving layer has batch views for fast queries.

- The speed layer compensates for processing time (to the serving layer) and deals with recent data only.

- All queries can be answered by merging results from batch views and real-time views or pinging them individually.
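The last principle, answering a query by merging a batch view with a real-time view, can be sketched in a few lines of plain Python. This is only an in-memory illustration, not code from the article: the `Event` type, the integer timestamps, the airport-style keys, and the `batch_cutoff` value are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """One immutable raw record; `ts` is an illustrative integer timestamp."""
    key: str
    ts: int


def build_batch_view(master_dataset, up_to_ts):
    """Batch layer: pre-compute counts per key over events with ts < up_to_ts."""
    return Counter(e.key for e in master_dataset if e.ts < up_to_ts)


def build_realtime_view(recent_events, from_ts):
    """Speed layer: count only the events the batch view has not covered yet."""
    return Counter(e.key for e in recent_events if e.ts >= from_ts)


def query(key, batch_view, realtime_view):
    """Serving-time merge: batch result plus the recent increment."""
    return batch_view[key] + realtime_view[key]


events = [Event("SEA", 1), Event("SEA", 2), Event("JFK", 3), Event("SEA", 9)]
batch_cutoff = 5  # hypothetical time of the last completed batch run
batch_view = build_batch_view(events, batch_cutoff)
realtime_view = build_realtime_view(events, batch_cutoff)
print(query("SEA", batch_view, realtime_view))  # 3
```

Because the two views cover disjoint time ranges, their counts can simply be added; an aggregate such as an average would need to carry sums and counts separately before merging.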

Speed layer

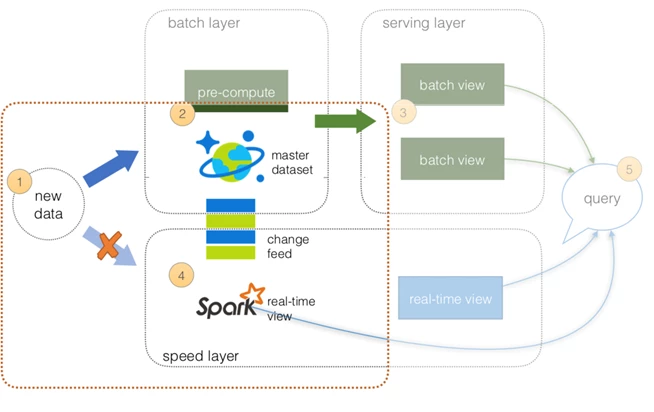

For the speed layer, you can use Azure Cosmos DB change feed support: the collection keeps the state for the batch layer, while the Change Feed API exposes the Azure Cosmos DB change log to your speed layer.

What’s important in these layers:

- All data is pushed only into Azure Cosmos DB, thus you can avoid multi-casting issues.

- The batch layer has a master dataset (immutable, append-only set of raw data) and pre-computes the batch views.

- The serving layer is discussed in the next section.

- The speed layer utilizes HDInsight (Apache Spark) to read the Azure Cosmos DB change feed. This enables you to persist your data as well as to query and process it concurrently.

- All queries can be answered by merging results from batch views and real-time views or pinging them individually.

For a code example, please see here.
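Independently of the linked sample, the idea of writing once and reading both the current state and a stream of changes can be modelled with a toy class. This is a minimal sketch under stated assumptions: `Collection`, `upsert`, `read_all`, and `read_changes` are hypothetical names, not the Azure Cosmos DB SDK or the Change Feed API, and the model ignores partitioning and the service's actual change feed semantics.

```python
class Collection:
    """Toy stand-in for a collection whose writes are also exposed as an ordered log."""

    def __init__(self):
        self._docs = {}     # current state by id (what a batch job would read)
        self._changes = []  # ordered log of writes (what the speed layer would read)

    def upsert(self, doc):
        """Single write path: the same write updates the state and the log."""
        self._docs[doc["id"]] = doc
        self._changes.append(doc)

    def read_all(self):
        """Batch-style read of every document currently stored."""
        return list(self._docs.values())

    def read_changes(self, continuation=0):
        """Return the changes after `continuation` and the next continuation position."""
        changes = self._changes[continuation:]
        return changes, continuation + len(changes)


flights = Collection()
flights.upsert({"id": "1", "origin": "SEA", "delay": 12})
flights.upsert({"id": "2", "origin": "JFK", "delay": 0})

changes, token = flights.read_changes()           # speed layer: first poll
flights.upsert({"id": "3", "origin": "SEA", "delay": 45})
new_changes, token = flights.read_changes(token)  # second poll sees only id 3

print([d["id"] for d in new_changes])  # ['3']
print(len(flights.read_all()))         # 3
```

The point of the sketch is the single `upsert` path: the producer never has to send the same record to two systems, which is the multi-casting issue the list above refers to.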

Batch and serving layers

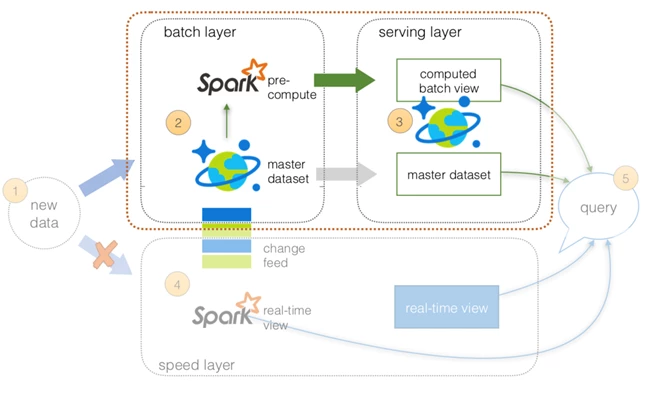

Since the new data is loaded into Azure Cosmos DB (where the change feed is being used for the speed layer), this is where the master dataset (an immutable, append-only set of raw data) resides. From this point onwards, you can use HDInsight (Apache Spark) to perform the pre-compute functions from the batch layer to the serving layer, as shown in the figure above.

What’s important in these layers:

- All data is pushed only into Azure Cosmos DB (to avoid multi-cast issues).

- The batch layer has a master dataset (immutable, append-only set of raw data) stored in Azure Cosmos DB. Using Apache Spark on HDInsight, you can pre-compute your aggregations and store them in your computed batch views.

- The serving layer is an Azure Cosmos DB database with collections for the master dataset and computed batch view.

- The speed layer is discussed later in this article.

- All queries can be answered by merging results from the batch views and real-time views or pinging them individually.

For a code example, please see here; for complete code samples, see azure-cosmosdb-spark/lambda/samples, including:

- Lambda Architecture Rearchitected – Batch Layer HTML | ipynb

- Lambda Architecture Rearchitected – Batch to Serving Layer HTML | ipynb
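Separately from those notebooks, the batch-to-serving step described in this section can be illustrated without Spark. The sketch below is plain Python under stated assumptions: the document fields (`origin`, `delay`), the function names, and the dictionary standing in for the computed batch view collection are all hypothetical, and a real job would run the aggregation in Spark through the connector.

```python
from collections import defaultdict


def precompute_batch_view(master_dataset):
    """Aggregate raw flight documents into one summary document per origin."""
    totals = defaultdict(lambda: {"flights": 0, "total_delay": 0})
    for doc in master_dataset:
        agg = totals[doc["origin"]]
        agg["flights"] += 1
        agg["total_delay"] += doc["delay"]
    return [
        {"id": origin, "flights": a["flights"], "avg_delay": a["total_delay"] / a["flights"]}
        for origin, a in totals.items()
    ]


def write_to_serving(batch_view_collection, view_docs):
    """Upsert by id so re-running the batch job replaces a view instead of duplicating it."""
    for doc in view_docs:
        batch_view_collection[doc["id"]] = doc


master_dataset = [
    {"id": "1", "origin": "SEA", "delay": 10},
    {"id": "2", "origin": "SEA", "delay": 30},
    {"id": "3", "origin": "JFK", "delay": 0},
]
batch_view = {}  # stand-in for the computed batch view collection
write_to_serving(batch_view, precompute_batch_view(master_dataset))
write_to_serving(batch_view, precompute_batch_view(master_dataset))  # idempotent rerun
print(batch_view["SEA"])  # {'id': 'SEA', 'flights': 2, 'avg_delay': 20.0}
```

Keying each view document by a deterministic id is a design choice of this sketch: it makes the batch job safe to rerun over the whole master dataset.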

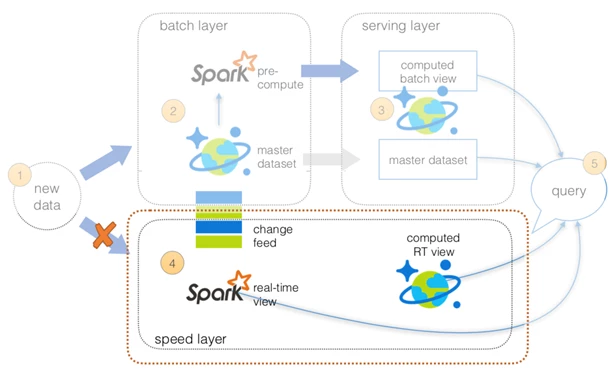

Speed layer

As previously noted, using the Azure Cosmos DB Change Feed Library allows you to simplify the operations between the batch and speed layers. In this architecture, use Apache Spark (via HDInsight) to perform the structured streaming queries against the data. You may also want to temporarily persist the results of your structured streaming queries so other systems can access this data.

To do this, create a separate Azure Cosmos DB collection to save the results of your structured streaming queries. This allows other systems, not just Apache Spark, to access this information. In addition, with the Azure Cosmos DB Time-to-Live (TTL) feature, you can configure your documents to be automatically deleted after a set duration. For more information on the Azure Cosmos DB TTL feature, see Expire data in Azure Cosmos DB collections automatically with time to live.
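How a TTL setting decides which streaming results are still visible can be sketched as a small predicate. This is an illustrative model rather than service code: the function name `is_expired` and the sample documents are hypothetical, the boundary comparison is a simplification, and in the real service expired documents are removed for you rather than filtered by the client. The sketch assumes the documented behaviour that a document's own `ttl` overrides the collection default, that `-1` means never expire, and that nothing expires when TTL is not enabled on the collection.

```python
def is_expired(doc, default_ttl, now):
    """Return True if `doc` would be past its time to live at time `now`.

    default_ttl: None (TTL not enabled), -1 (enabled, no default expiry), or seconds.
    doc["ttl"], if present, overrides the default; -1 means never expire.
    doc["_ts"] is the last-modified time in epoch seconds.
    """
    if default_ttl is None:
        return False  # TTL disabled on the collection: per-document ttl is ignored
    ttl = doc.get("ttl", default_ttl)
    if ttl == -1:
        return False
    return now - doc["_ts"] >= ttl


results = [
    {"id": "a", "_ts": 1000},             # uses the collection default
    {"id": "b", "_ts": 1000, "ttl": 30},  # shorter, per-document TTL
    {"id": "c", "_ts": 1000, "ttl": -1},  # never expires
]
live = [d["id"] for d in results if not is_expired(d, default_ttl=3600, now=1100)]
print(live)  # ['a', 'c']
```

For a collection of temporary streaming results, setting only a collection-level default is usually enough; per-document values are useful when some results should outlive others.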

Lambda Architecture using Azure Cosmos DB: Faster performance, Low TCO, Low DevOps

As noted above, you can simplify the original lambda architecture (with batch, serving, and speed layers) by using Azure Cosmos DB, Azure Cosmos DB Change Feed Library, Apache Spark on HDInsight, and the native Spark Connector for Azure Cosmos DB.

This simplifies not only the operations but also the data flow.

- All data is pushed into Azure Cosmos DB for processing.

- The batch layer has a master dataset (immutable, append-only set of raw data) and pre-computes the batch views.

- The serving layer has batch views of data for fast queries.

- The speed layer compensates for processing time (to the serving layer) and deals with recent data only.

- All queries can be answered by merging results from batch views and real-time views.

Next steps

If you haven’t already, download the Spark to Azure Cosmos DB connector from the azure-cosmosdb-spark GitHub repository and explore the additional resources in the repo:

- Lambda architecture

- Distributed aggregations examples

- Sample scripts and notebooks

- Structured streaming demos

- Change feed demos

- Stream processing changes using Azure Cosmos DB Change Feed and Apache Spark

You might also want to review the Apache Spark SQL, DataFrames, and Datasets Guide and the Apache Spark on Azure HDInsight article. The full version of this article is published in our docs.

Using the steps outlined in this blog, anyone, from a large enterprise to an individual developer, can now build a lambda architecture for big data with Azure Cosmos DB in a matter of minutes. You can try Azure Cosmos DB for free today, with no sign-up or credit card required.

Stay up to date on the latest Azure Cosmos DB news and features by following us on Twitter at #CosmosDB and @AzureCosmosDB.

– Your friends at Azure Cosmos DB