We’re excited to announce new Azure Cosmos DB capabilities at Microsoft Build 2019 that enable anyone to easily build intelligent, globally distributed apps running at Cosmos scale:

- Planet-scale operational analytics with built-in support for Apache Spark in Azure Cosmos DB

- Built-in Jupyter notebooks support for all Azure Cosmos DB APIs

See the other Azure Cosmos DB announcements and hear from our customers.

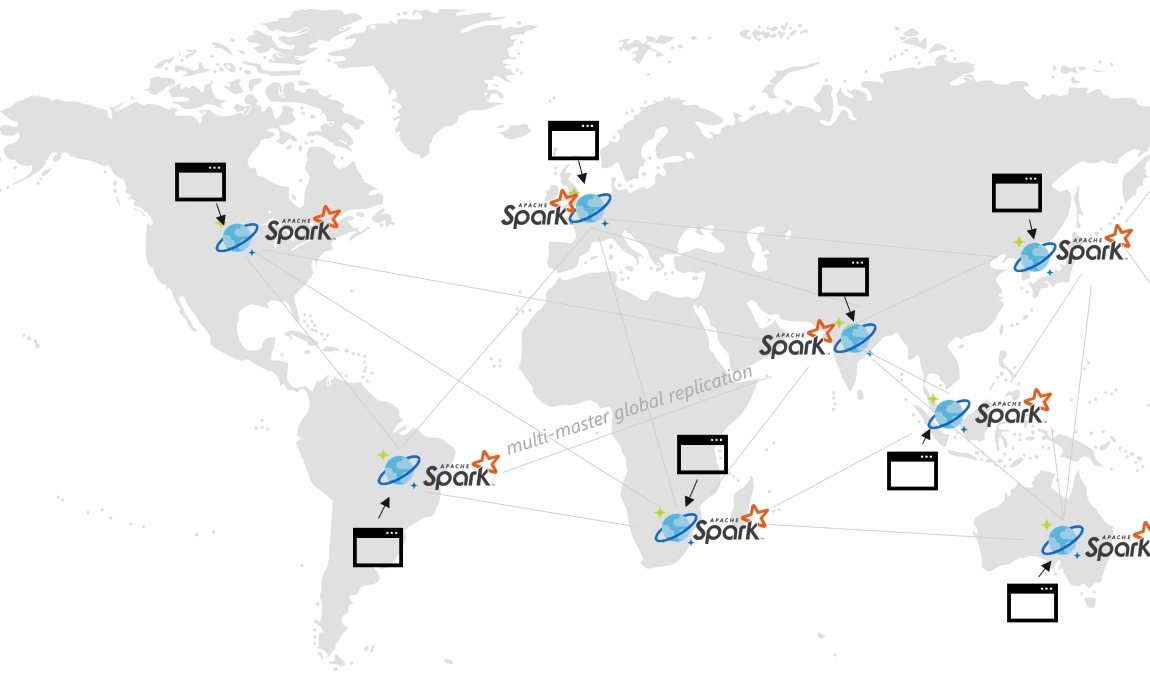

Built-in support for Apache Spark in Azure Cosmos DB

Our customers love that Azure Cosmos DB lets them elastically scale throughput with guaranteed low latency worldwide, and they also want to run operational analytics directly against the petabytes of operational data stored in their Azure Cosmos databases. We are excited to announce the preview of native Apache Spark integration within Azure Cosmos DB. You can now run globally distributed, low-latency operational analytics and AI on the transactional data stored in your Cosmos databases. This provides the following benefits:

- Fast time-to-insight with globally distributed Spark. With native Apache Spark support on your multi-master, globally distributed Cosmos database, you get fast time-to-insight all around the world. Because your Cosmos database is globally distributed, data is ingested at, and queries are served from, the local database replica closest to the producers and consumers of that data.

- Fully managed experience and SLAs. Apache Spark jobs enjoy the industry-leading, comprehensive 99.999% SLAs offered by Azure Cosmos DB, without the hassle of managing separate Apache Spark clusters. Azure Cosmos DB automatically and elastically scales the compute required to execute your Apache Spark jobs across all Azure regions associated with your Cosmos database.

- Efficient execution of Spark jobs on multi-model operational data. All your Spark jobs execute directly on the indexed multi-model data stored inside the data partitions of your Cosmos containers, without moving that data elsewhere first.

- OSS APIs for transactional and analytical data processing. In addition to using familiar OSS client drivers for Cassandra, MongoDB, and Gremlin (as well as the Core (SQL) API) for your operational workloads, you can now use Apache Spark for your analytics, all operating on the same underlying globally distributed data stored in your Cosmos database.

We believe that native integration of Apache Spark into Azure Cosmos DB bridges the divide between transactional and analytical processing, which has been one of the major customer pain points when building cloud-native applications at global scale.

Several of Azure’s largest enterprise customers are running globally distributed operational analytics on their Cosmos databases with Apache Spark. Coca-Cola is one such customer; watch their story.

Coca-Cola using Azure Cosmos DB for globally-distributed operational analytics

“Being able to scale globally and have insights that are actually delivered within minutes at a global scale is very important for us. Putting our data in a service like Azure Cosmos DB allows us to draw insights across the world much faster, going from the hours that we used to take a couple years ago, down to minutes.”

– Neeraj Tolmare, CIO, Global Head of Digital & Innovation at The Coca-Cola Company

Learn more about the Azure Cosmos DB API for Apache Spark.

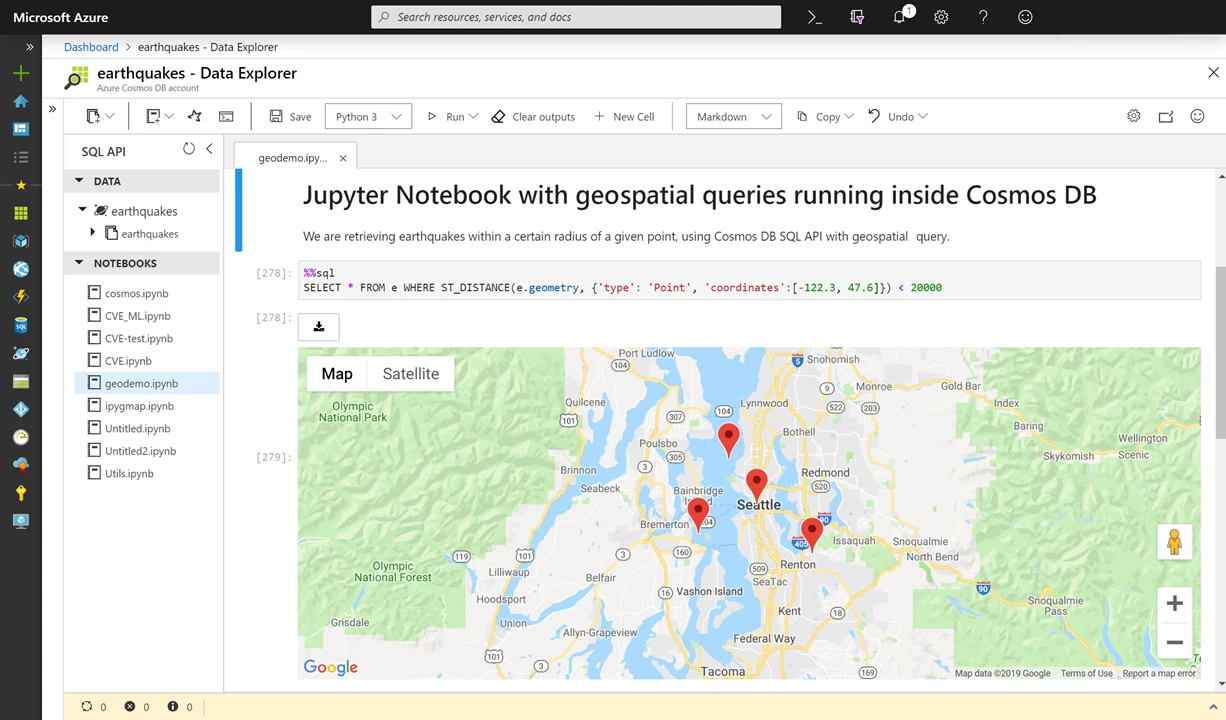

Cosmic notebooks

We are also thrilled to announce the preview of Jupyter notebooks running inside Azure Cosmos DB, available for all APIs (including Cassandra, MongoDB, SQL, Gremlin, and Apache Spark) to further enhance the developer experience on Azure Cosmos DB. With native notebook support for all Azure Cosmos DB APIs and data models, developers can now interactively run queries, execute ML models, and explore and analyze the data stored in their Cosmos databases. Using the familiar Jupyter notebook experience directly inside the Azure portal, they can explore stored data, build and train machine learning models, and run inference on that data.

Learn more about Jupyter notebooks.

Built-in support for Jupyter notebooks in Azure Cosmos DB

We also announced a slew of new capabilities and improvements for developers, including a new API for etcd that lets Azure Cosmos DB serve as the etcd backing store for your self-managed Kubernetes clusters on Azure, support for OFFSET/LIMIT in our SQL API, and other SDK improvements.
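In the Core (SQL) API query grammar, the paging support mentioned above is written as an `OFFSET <n> LIMIT <m>` clause. The sketch below is a minimal illustration under stated assumptions: the container alias `c`, ordering by `c.id`, and the helper names `build_page_query` and `simulate_offset_limit` are hypothetical, and the second function only mimics the clause's semantics on an in-memory list so the example runs without a Cosmos DB account.

```python
from typing import Any, Dict, List


def build_page_query(page: int, page_size: int) -> str:
    """Return a Core (SQL) API query string that fetches one page of results.

    Hypothetical helper: the alias `c` and ORDER BY c.id are assumptions.
    OFFSET skips page * page_size results; LIMIT caps the page size.
    """
    if page < 0 or page_size <= 0:
        raise ValueError("page must be >= 0 and page_size must be > 0")
    offset = page * page_size
    return f"SELECT * FROM c ORDER BY c.id OFFSET {offset} LIMIT {page_size}"


def simulate_offset_limit(
    docs: List[Dict[str, Any]], page: int, page_size: int
) -> List[Dict[str, Any]]:
    """Offline stand-in (hypothetical) applying the same ORDER BY/OFFSET/LIMIT
    semantics to an in-memory list of documents."""
    if page < 0 or page_size <= 0:
        raise ValueError("page must be >= 0 and page_size must be > 0")
    offset = page * page_size
    ordered = sorted(docs, key=lambda d: d["id"])  # ORDER BY c.id
    return ordered[offset:offset + page_size]      # OFFSET ... LIMIT ...


if __name__ == "__main__":
    docs = [{"id": f"item-{i:02d}"} for i in range(7)]
    print(build_page_query(page=1, page_size=3))
    # Against a real account, the query string could be passed to the
    # azure-cosmos SDK, e.g.:
    #   container.query_items(query=q, enable_cross_partition_query=True)
    print([d["id"] for d in simulate_offset_limit(docs, page=1, page_size=3)])
```

Because the service still has to read past every skipped result, deep offsets tend to cost more; for paging far into a large result set, the SDK's continuation tokens are usually the better fit.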

We are extremely grateful to our customers, who are building amazingly cool, globally distributed apps and trusting Azure Cosmos DB with their mission-critical workloads at massive scale. Their stories inspire us.

- Have questions? Email us at AskCosmosDB@microsoft.com any time.

- Try Azure Cosmos DB for free (no credit card required).

- For the latest Azure Cosmos DB news and features, follow us on Twitter at @AzureCosmosDB and #CosmosDB.