At Microsoft Ignite 2019, we announced the general availability of new SAP HANA Large Instances powered by 2nd Generation Intel Xeon Scalable processors (formerly code-named Cascade Lake), with support for Intel® Optane™ persistent memory (PMem).

Microsoft’s largest SAP customers continue to consolidate their business functions and grow their footprint. SAP S/4HANA workloads demand increasingly larger nodes as they scale up, and requirements for high availability/disaster recovery (HA/DR) and multi-tier data add to operational complexity.

In partnership with Intel and SAP, we developed the new HANA Large Instances with Intel Optane PMem, which offer higher memory density and in-memory data persistence. Combined with 2nd Generation Intel Xeon Scalable processors, these instances deliver higher performance and a higher memory-to-processor ratio.

For SAP HANA solutions, these new offerings help lower total cost of ownership (TCO), simplify complex HA/DR and multi-tier data architectures, and, in our internal testing, reload data 22 times faster. The new instances extend the broad range of existing HANA Large Instances with purpose-built capabilities critical for running SAP HANA workloads.

Available now

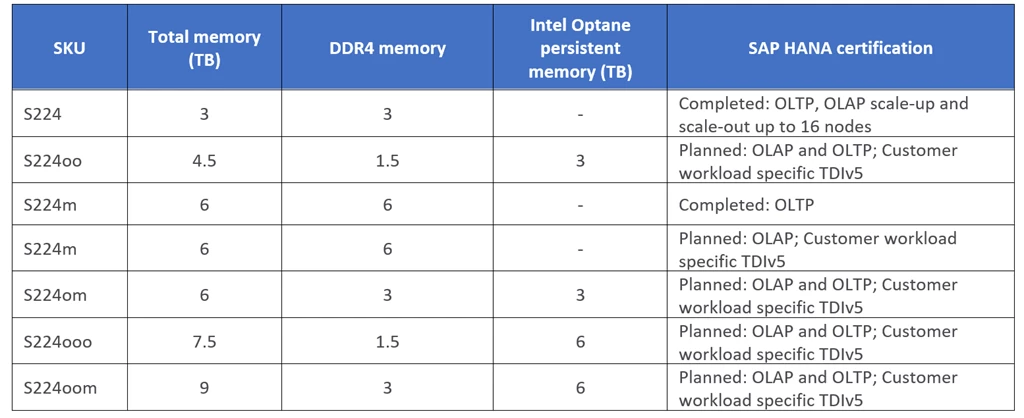

The new S224 HANA Large Instances offer 3 TB to 9 TB of memory on four-socket systems with 224 vCPUs. They are available in both DRAM-only and DRAM plus Intel® Optane™ persistent memory configurations.

The range of SKUs lets customers choose the best fit for their SAP HANA in-memory workloads, and the Optane-based SKUs offer higher memory capacity at lower cost than DRAM-only instances. S224 SKUs with a higher core-to-memory ratio are performance-optimized for OLAP, while those with a higher memory-to-core ratio are better priced for OLTP.

The S224 instances with Intel Optane PMem come in 1:1, 1:2, and 1:4 ratios, where each ratio expresses the amount of DRAM relative to the amount of Optane PMem paired with it. The architecture options these offerings enable are discussed in the following sections. The new instances are available in several of the Azure regions that offer HANA Large Instances.
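To make the ratio notation concrete, here is a minimal Python sketch that derives the PMem and total capacity from a DRAM size and a 1:N ratio. The `MemoryConfig` type and `config_from_ratio` helper are hypothetical illustrations, not part of any Azure or SAP tooling, and they do not model the actual S224 SKU sizes. The 3 TB DRAM, 1:2 example matches the test system described later in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryConfig:
    """Hypothetical model of a node's memory split, in TB."""
    dram_tb: float
    pmem_tb: float

    @property
    def total_tb(self) -> float:
        return self.dram_tb + self.pmem_tb


def config_from_ratio(dram_tb: float, pmem_per_dram: int) -> MemoryConfig:
    """Return the memory split for a 1:N DRAM-to-PMem ratio, where N = pmem_per_dram."""
    if pmem_per_dram not in (1, 2, 4):
        raise ValueError("the S224 Optane ratios are 1:1, 1:2, and 1:4")
    return MemoryConfig(dram_tb=dram_tb, pmem_tb=dram_tb * pmem_per_dram)


# 3 TB of DRAM in a 1:2 ratio -> 6 TB of PMem, 9 TB in total.
cfg = config_from_ratio(3, 2)
print(cfg.pmem_tb, cfg.total_tb)  # 6 9
```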

Key benefits of deploying S224 instances

Platform consolidation

SAP HANA is an in-memory data platform, and its hybrid architecture for processing both OLTP and OLAP workloads in real time with low latency is a major benefit for enterprises. The 2nd Generation Intel Xeon Scalable processors offer 50 percent higher performance and a higher memory-to-processor ratio than the previous generation of processors.[i] Combined with Intel Optane PMem, the new instances offer even higher memory density, with more than 3 TB per socket.

SAP HANA uses Intel Optane PMem as an extension of DRAM by selectively placing data structures in persistent memory, in a mode called App Direct. Column store data, which accounts for the majority of the data in most HANA systems, can be placed in Intel Optane PMem, whereas DRAM is used as working memory for delta merges, the row store, and caches.
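The placement rule above can be pictured as a simple lookup. This Python sketch is a toy model of the split described in this post, not how SAP HANA decides placement internally; the structure labels and sizes are hypothetical.

```python
from enum import Enum


class Tier(Enum):
    DRAM = "DRAM"
    PMEM = "Intel Optane PMem (App Direct)"


# Illustrative placement table that mirrors the paragraph above. The keys are
# descriptive labels, not SAP HANA parameter or object names.
PLACEMENT = {
    "column_store": Tier.PMEM,
    "delta_merge": Tier.DRAM,
    "row_store": Tier.DRAM,
    "cache": Tier.DRAM,
}


def split_footprint(sizes_gb: dict[str, float]) -> dict[Tier, float]:
    """Sum data sizes per memory tier according to PLACEMENT."""
    totals = {tier: 0.0 for tier in Tier}
    for structure, size in sizes_gb.items():
        if structure not in PLACEMENT:
            raise ValueError(f"unknown structure: {structure}")
        totals[PLACEMENT[structure]] += size
    return totals


# Hypothetical sizes for a system dominated by column store data.
example = {"column_store": 2400, "delta_merge": 200, "row_store": 100, "cache": 100}
for tier, gb in split_footprint(example).items():
    print(f"{tier.value}: {gb:.0f} GB")
```

Because column store data dominates, most of the footprint lands on the PMem tier, which is why the larger Optane ratios can still work with a comparatively small amount of DRAM.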

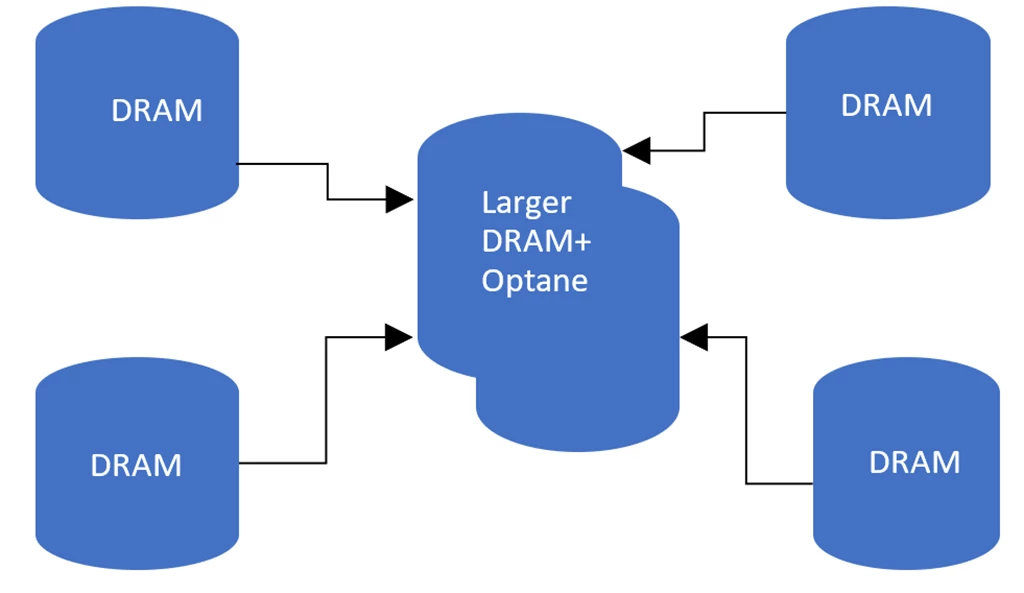

For organizations with growing data needs, higher memory density means a deployment can scale up or scale out with fewer S224 nodes (see Figure 1) than the larger number of DRAM-only nodes required on previous-generation processors. This lets organizations consolidate their platform footprint, reduce operational complexity, and lower TCO.

Figure 1: Platform consolidation with higher-memory-density nodes, moving from a larger scale-out deployment to fewer scale-up nodes.
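The consolidation effect comes down to a ceiling division of the memory footprint by per-node capacity. This Python sketch uses a hypothetical 18 TB footprint and a hypothetical 3 TB DRAM-only node for comparison; `nodes_needed` is an illustrative helper, and it deliberately ignores SAP HANA sizing rules.

```python
import math


def nodes_needed(data_tb: float, node_capacity_tb: float) -> int:
    """Smallest number of nodes whose combined memory covers data_tb.

    Simplification: ignores SAP HANA sizing rules such as working-memory
    headroom, HA standby nodes, and per-node limits.
    """
    if node_capacity_tb <= 0:
        raise ValueError("node capacity must be positive")
    return math.ceil(data_tb / node_capacity_tb)


footprint_tb = 18  # hypothetical memory footprint
print(nodes_needed(footprint_tb, 3))  # hypothetical 3 TB DRAM-only nodes -> 6
print(nodes_needed(footprint_tb, 9))  # 9 TB DRAM + Optane nodes -> 2
```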

Faster reload times

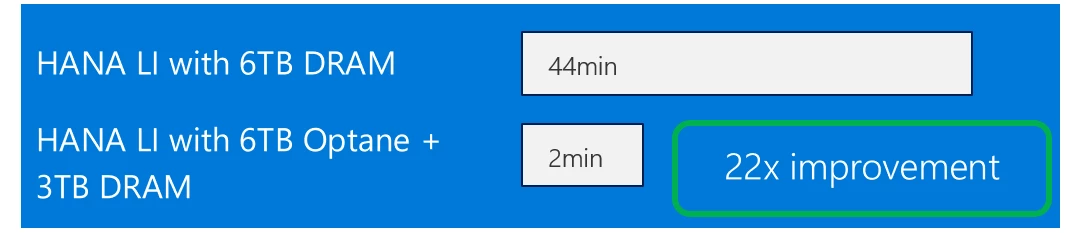

Data stored in Intel Optane PMem is persistent, so SAP HANA deployments on the new Optane instances do not need to reload data from disk or slower storage tiers after a system reboot. As noted above, SAP HANA uses App Direct mode to keep most of the database in Optane PMem. When a system reboots, for example during an upgrade, data reload time drops dramatically, and the system returns to normal operation much faster than a DRAM-only system.

In recent testing with two S224 instances, a DRAM-only system with 6 TB of memory and a system with 9 TB of memory (3 TB of DRAM and 6 TB of Optane PMem in a 1:2 ratio), data reload on the Optane system was 22 times faster than on the DRAM-only system: after a reboot, the DRAM-only system took around 44 minutes to load its data, versus about 2 minutes on the Optane node.

Figure 2: Internal testing using a 3 TB HANA dataset shows a 22x improvement in database restart times on the new SAP HANA Large Instances with Intel Optane.
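The 22x figure is the ratio of the two measured reload times. This Python sketch reproduces that arithmetic from the approximate times reported above and extends it to the reload time saved across a number of planned reboots. The count of 12 reboots is a hypothetical value, and real savings depend on the workload and dataset size.

```python
def reload_speedup(dram_only_min: float, optane_min: float) -> float:
    """Ratio of DRAM-only reload time to Optane reload time."""
    if optane_min <= 0:
        raise ValueError("reload time must be positive")
    return dram_only_min / optane_min


def downtime_saved_min(dram_only_min: float, optane_min: float, reboots: int) -> float:
    """Reload minutes saved across a number of planned reboots."""
    return (dram_only_min - optane_min) * reboots


print(reload_speedup(44, 2))          # 22.0
print(downtime_saved_min(44, 2, 12))  # 504 (hypothetical: 12 reboots)
```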

Faster reload and recovery may allow some non-production deployments to run without HA, shortening service windows and removing the clustering complexity and downtime otherwise needed for upgrades and patches. Each HANA Large Instances region also includes hot spares, so that after a complete system failure the database can be recovered on a spare.

Lower TCO for HA/DR

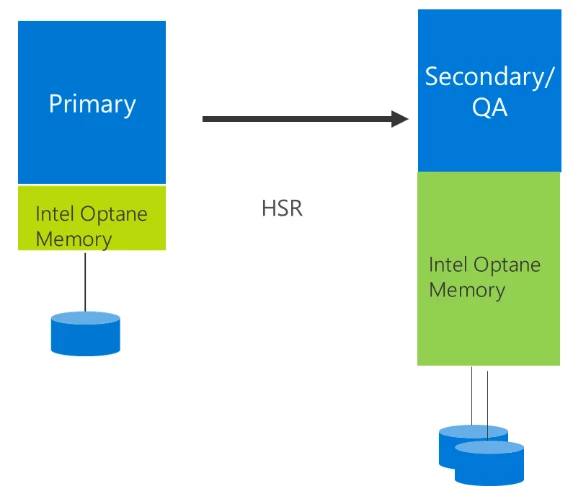

The higher memory density of the new instances also enables new deployment options for business continuity. A smaller DRAM-only node at the primary site can replicate its data to a larger Intel Optane node, offered in 1:2 and 1:4 ratios, where the data is preloaded in persistent memory. This higher-density Optane node can serve a dual purpose (see Figure 3): it hosts QA testing and takes over as the primary node if the primary site fails, which lowers cost by eliminating separate QA and DR instances. Because the replicated data is already in Optane PMem, it does not need to be loaded from disk at failover, which shortens downtime and improves the recovery time objective (RTO).

Figure 3: Lower TCO with a dual-purpose node at the DR site serving both QA/dev testing and DR.

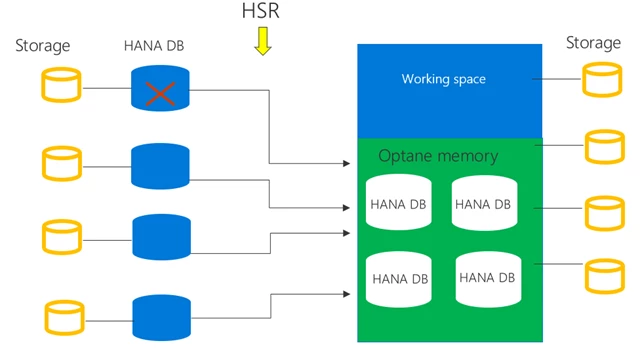

Similarly, in a scale-out SAP S/4HANA deployment, systems protected with SAP HANA System Replication (HSR) can all replicate into a single shared HA Optane node in a 1:4 ratio (see Figure 4). This reduces the complexity of managing multiple HA instances, lowers TCO, and shortens service windows.

Figure 4: Lower TCO for HA and DR using a shared higher-memory node for scale-out deployments.
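Both the dual-purpose DR node and the shared HA node rest on a capacity check: the replicated systems, plus any memory reserved for other uses such as QA, must fit on the larger Optane node. This Python sketch is a simplified illustration with hypothetical names and sizes; the `Replica` type and `fits_on_node` helper are not part of any Azure or SAP tool, and a plain sum ignores SAP HANA sizing headroom and memory allocation limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Replica:
    """A system whose data is replicated onto the target node (sizes in TB)."""
    name: str
    memory_tb: float


def fits_on_node(target_tb: float, replicas: list[Replica], reserved_tb: float = 0.0) -> bool:
    """True if the replicated systems plus reserved memory fit on the target node.

    Simplification: a plain sum that ignores SAP HANA sizing headroom and the
    memory allocation limits a real dual-purpose or shared node needs.
    """
    used = sum(r.memory_tb for r in replicas) + reserved_tb
    return used <= target_tb


# Dual-purpose DR node: a hypothetical 3 TB primary plus 4 TB reserved for QA.
print(fits_on_node(9, [Replica("prd", 3)], reserved_tb=4))  # True

# Shared HA node: hypothetical scale-out nodes replicating to one 9 TB target.
print(fits_on_node(9, [Replica(f"node{i}", 2) for i in range(4)]))  # True
print(fits_on_node(9, [Replica(f"node{i}", 3) for i in range(4)]))  # False
```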

Enabling SAP HANA on Intel Optane

Supported OS versions

The following guidance lists the OS and SAP HANA versions that support Intel Optane persistent memory (PMem).

The following OS versions support Intel Optane PMem in App Direct mode:

- RHEL 7.6 or later

- SLES 12 SP4 or later

- SLES 15 or later

SAP HANA support

SAP HANA 2.0 SPS 03 is the first SAP HANA version to support Intel Optane in App Direct mode, and SAP HANA 2.0 SPS 04 or later is recommended for customers using Optane nodes. SAP HANA uses Intel Optane in App Direct mode once the PMem regions, namespaces, and file systems are configured. The HANA Large Instances operations team performs this setup before handing the Optane node over to the customer.
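The version rules above reduce to minimum-version comparisons. This Python sketch encodes them in two hypothetical helpers, `os_supports_app_direct` and `hana_pmem_support`. It reflects only the versions listed in this post and is not a substitute for the official SAP and OS vendor support documentation.

```python
# Minimum versions from the lists above, as (major, minor) tuples.
# For SLES the second element is the service pack. "SLES 12 SP4 or later"
# also covers SLES 15, so one minimum per distribution is enough.
MIN_OS_VERSION = {
    "RHEL": (7, 6),
    "SLES": (12, 4),
}


def os_supports_app_direct(distro: str, major: int, minor: int) -> bool:
    """True if the OS release is at or above the listed minimum."""
    minimum = MIN_OS_VERSION.get(distro.upper())
    return minimum is not None and (major, minor) >= minimum


def hana_pmem_support(sps: int) -> str:
    """Classify an SAP HANA 2.0 support package stack (SPS) for Optane use."""
    if sps >= 4:
        return "recommended"
    if sps == 3:
        return "supported"
    return "unsupported"


print(os_supports_app_direct("SLES", 15, 1))  # True
print(os_supports_app_direct("RHEL", 7, 5))   # False
print(hana_pmem_support(3))                   # supported
```

Tuple comparison orders versions lexicographically, so (15, 1) compares greater than (12, 4) without any special-casing of major releases.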

SAP HANA configuration

SAP HANA needs to recognize the new Intel Optane PMem DIMMs, so the directories that SAP HANA uses as its PMem base path must be mount points of the file systems created on PMem. SAP HANA 2.0 SPS 04 or later is required to use Optane. The base path for the PMem volumes is set with the following configuration parameter:

In the [persistence] section of the global.ini file, add a line that lists all mounted PMem volumes, separated by semicolons. SAP HANA then recognizes the PMem devices and loads column store data into them.

```ini
[persistence]
basepath_persistent_memory_volumes=/hana/pmem/nvmem0;/hana/pmem/nvmem1;/hana/pmem/nvmem2;/hana/pmem/nvmem3
```
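If you generate this setting from a list of mount points, Python's standard `configparser` module can render the section. This is a minimal sketch: the `persistence_section` helper is hypothetical, it only produces text for review and does not modify a running system's global.ini, and the mount points are the example paths from this post.

```python
import configparser
import io


def persistence_section(pmem_mounts: list[str]) -> str:
    """Render a [persistence] section listing PMem mount points.

    The paths are joined with semicolons and no spaces.
    """
    if not pmem_mounts:
        raise ValueError("at least one PMem mount point is required")
    config = configparser.ConfigParser()
    config["persistence"] = {
        "basepath_persistent_memory_volumes": ";".join(pmem_mounts)
    }
    buffer = io.StringIO()
    config.write(buffer)
    return buffer.getvalue()


mounts = [f"/hana/pmem/nvmem{i}" for i in range(4)]
print(persistence_section(mounts))
```

Note that `configparser` writes `key = value` with spaces around the equals sign; reviewing the rendered text before applying it is the point of generating it this way.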

Learn more

If you are interested in learning more about the S224 SKUs, please contact your Microsoft account team. To learn more about running SAP solutions on Azure, visit SAP on Azure or download a free SAP on Azure implementation guide.

[i] Intel Shows 1.59x Performance Improvement in Upcoming Intel Xeon Processor Scalable Family