This week at AzureCon 2015, we were excited to announce the general availability of Azure File Storage, Microsoft Azure’s fully managed file shares in the cloud. Today, I’d like to provide an inside view of how we built the service.

Rather than simply running Windows file servers, we took a very different approach with Azure File Storage. To take advantage of the scalability of Azure Storage, Azure File Storage implements the SMB 3.0 protocol specifically to run at hyper-scale in Azure. Internally, Azure File Storage uses the Azure Table Storage infrastructure to store the file and directory metadata that, in conventional SMB 3.0 clustered solutions, the file system itself manages. Similarly, Azure File Storage stores each file’s data in an Azure Page Blob. Because Azure Table and Blob Storage are both massively scalable, robust, and durable, Azure File Storage inherits those properties, providing a true active/active, continuously available file share.
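
To make the metadata/data split concrete, here is a minimal sketch under loudly stated assumptions. `MetadataEntity`, its key choices (parent directory as partition key, entry name as row key), and `blob_name` are hypothetical illustrations of a table-style row, not the service's actual internal schema. The one firm fact it relies on is that Azure page blobs are addressed in 512-byte pages, so a byte-range write to a file maps onto a contiguous run of pages in its backing blob.

```python
from dataclasses import dataclass
from typing import Optional

PAGE_SIZE = 512  # Azure page blobs are read and written in 512-byte pages


@dataclass(frozen=True)
class MetadataEntity:
    """Hypothetical table-style row describing one file or directory (illustrative only)."""
    partition_key: str              # assumption: the parent directory path
    row_key: str                    # assumption: the entry's name within that directory
    is_directory: bool
    size: int = 0
    blob_name: Optional[str] = None  # hypothetical name of the backing page blob


def pages_touched(offset: int, length: int) -> range:
    """Return the indices of the 512-byte pages covered by a write of `length` bytes at `offset`."""
    if offset < 0 or length <= 0:
        raise ValueError("offset must be >= 0 and length must be > 0")
    first = offset // PAGE_SIZE
    last = (offset + length - 1) // PAGE_SIZE  # last byte written is offset + length - 1
    return range(first, last + 1)


# Example: a 100-byte write at offset 1000 straddles pages 1 and 2.
entry = MetadataEntity("/reports", "q3.xlsx", is_directory=False, size=1300, blob_name="blob-0001")
print(list(pages_touched(1000, 100)))  # [1, 2]
```

Keeping metadata in a table and bulk data in page blobs lets each layer scale independently, which is the property the paragraph above attributes to the design.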

A benefit of building Azure File Storage on these internal Azure technologies is that we can also provide a REST interface to Azure file shares. Applications can use the REST interface to access a file at the same time that clients use the SMB interface, because the REST API honors SMB Leases, byte-range locks, change notifications, and more, just as SMB clients do.
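
As an illustration of the REST side, the sketch below only *describes* a File service Put Range request, which writes a byte range of an existing file; it does not send it. The account, share, and path are placeholders, the `x-ms-version` value is an assumption (use whatever version your client targets), and the required `Authorization` header (Shared Key or SAS) is deliberately omitted. Per the paragraph above, such a write is subject to the same leases and byte-range locks that SMB clients hold.

```python
from typing import Dict, Tuple
from urllib.parse import quote

API_VERSION = "2015-02-21"  # assumption: a REST version that includes the File service


def build_put_range(account: str, share: str, path: str,
                    offset: int, data: bytes) -> Tuple[str, str, Dict[str, str]]:
    """Return (method, url, headers) for a Put Range that writes `data` at `offset`.

    Authentication is omitted; a real request also needs an Authorization header or a SAS.
    """
    if offset < 0 or not data:
        raise ValueError("offset must be >= 0 and data must be non-empty")
    url = f"https://{account}.file.core.windows.net/{quote(share)}/{quote(path)}?comp=range"
    end = offset + len(data) - 1  # x-ms-range is inclusive at both ends
    headers = {
        "x-ms-version": API_VERSION,
        "x-ms-range": f"bytes={offset}-{end}",
        "x-ms-write": "update",  # "update" writes the body; "clear" would zero the range
        "Content-Length": str(len(data)),
    }
    return "PUT", url, headers


method, url, headers = build_put_range("myaccount", "myshare", "dir/file.txt", 0, b"hello")
# url == "https://myaccount.file.core.windows.net/myshare/dir/file.txt?comp=range"
# headers["x-ms-range"] == "bytes=0-4"
```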

The general availability release adds SMB 3.0 support, which brings two major features that were not available in the SMB 2.1 protocol implemented by the preview: seamless encryption and Persistent Handles. On-the-wire SMB encryption requires both the client and the server to support SMB 3.0 or higher. They collaborate to automatically generate per-session encryption keys, so client applications need no modification to use the feature. SMB 3.0 uses the proven AES-128-CCM encryption algorithm, as specified in [RFC4309], to guarantee the confidentiality and integrity of client/server communication.
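
The per-session keys mentioned above can be sketched with the standard library. This follows our reading of the MS-SMB2 specification for the 3.0 dialect: each direction's AES-128-CCM key is derived from the authenticated session key with the NIST SP800-108 counter-mode KDF (HMAC-SHA256), using the label `SMB2AESCCM\0` and the contexts `ServerIn \0` (client-to-server) and `ServerOut\0` (server-to-client). Treat it as an illustrative sketch; the session key below is a placeholder, and the AES-CCM encryption step itself is not shown because Python's standard library has no AES.

```python
import hashlib
import hmac
import struct
from typing import Tuple


def sp800_108_hmac_sha256(key: bytes, label: bytes, context: bytes, length_bits: int = 128) -> bytes:
    """SP800-108 counter-mode KDF with HMAC-SHA256, limited to a single 256-bit block."""
    if not 0 < length_bits <= 256 or length_bits % 8:
        raise ValueError("this sketch only derives up to 256 bits in whole bytes")
    # Fixed input: [i]_32 || Label || 0x00 || Context || [L]_32, with counter i = 1.
    data = struct.pack(">I", 1) + label + b"\x00" + context + struct.pack(">I", length_bits)
    return hmac.new(key, data, hashlib.sha256).digest()[: length_bits // 8]


def smb30_encryption_keys(session_key: bytes) -> Tuple[bytes, bytes]:
    """Return (client_to_server_key, server_to_client_key) for the SMB 3.0 dialect."""
    label = b"SMB2AESCCM\x00"
    client_to_server = sp800_108_hmac_sha256(session_key, label, b"ServerIn \x00")
    server_to_client = sp800_108_hmac_sha256(session_key, label, b"ServerOut\x00")
    return client_to_server, server_to_client


# Placeholder session key; in practice it comes from the SMB session's authentication.
c2s, s2c = smb30_encryption_keys(bytes(range(16)))
```

Because both sides hold the same session key after authentication, each computes identical keys independently and no key material ever crosses the wire, which is why applications need no changes.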

The addition of encryption allows clients outside Azure datacenters to use Azure file shares without fear of eavesdropping or tampering by man-in-the-middle attacks. A user or application can literally ‘net use’ a cloud-hosted share from an on-premises client. This can accelerate “lift and shift” projects, because you can keep parts of an application on-premises and simply change their configuration from on-premises file shares to Azure File Storage shares. Latency will naturally be higher when accessing Azure File Storage from outside Azure than from within, but you can use ExpressRoute networking to guarantee highly available connections with dedicated bandwidth. Note that mounting a share from outside Azure requires that outbound TCP port 445 not be blocked by your ISP or your firewalls.
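
Because a blocked port 445 is the most common obstacle to mounting from on-premises, a quick reachability check can save time. The sketch below simply attempts a TCP connection; `myaccount` is a placeholder storage account name. A successful handshake only shows that the port is open along the path, not that an SMB 3.0 mount will succeed.

```python
import socket


def can_reach_smb(host: str, port: int = 445, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused connections, timeouts, and DNS failures all surface as OSError subclasses.
        return False


# Example (placeholder account name):
# can_reach_smb("myaccount.file.core.windows.net")
```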

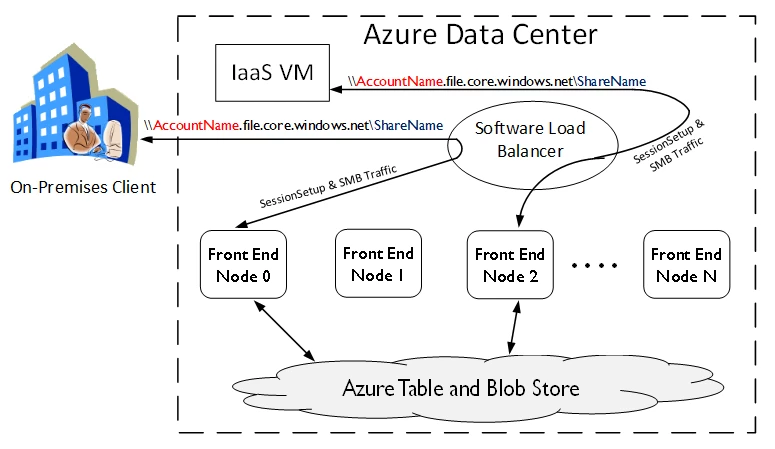

The diagram above illustrates two clients, one on-premises and one inside an Azure datacenter, both connecting to the same share and potentially reading and writing the same file at the same time, even though they are actually connected to different Azure File Storage front ends. With its distributed consistency algorithms, Azure File Storage provides the same coherency guarantees as if both clients were connected to the same physical SMB server.
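
A toy model helps show why the front end a client lands on does not matter: if front ends keep no private lock state and consult one shared, consistent store, a lock taken through one front end is visible through every other. `LockStore` and `FrontEnd` are hypothetical names, the in-memory dictionary stands in for the service's distributed metadata layer, and only exclusive byte-range locks are modeled (no shared locks, unlocks, or leases).

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class LockStore:
    """Hypothetical stand-in for shared, strongly consistent lock state."""
    # path -> list of (start, end_exclusive, owner) exclusive byte-range locks
    locks: Dict[str, List[Tuple[int, int, str]]] = field(default_factory=dict)

    def try_lock(self, path: str, start: int, length: int, owner: str) -> bool:
        end = start + length
        for s, e, o in self.locks.get(path, []):
            if o != owner and start < e and s < end:  # overlapping range held by someone else
                return False
        self.locks.setdefault(path, []).append((start, end, owner))
        return True


class FrontEnd:
    """A stateless front end: every lock request is forwarded to the shared store."""

    def __init__(self, name: str, store: LockStore) -> None:
        self.name = name
        self.store = store

    def lock(self, client: str, path: str, start: int, length: int) -> bool:
        return self.store.try_lock(path, start, length, owner=client)


store = LockStore()
fe_a, fe_b = FrontEnd("fe-a", store), FrontEnd("fe-b", store)
fe_a.lock("on-prem-client", "/share/data.bin", 0, 100)    # True
fe_b.lock("azure-vm-client", "/share/data.bin", 50, 10)   # False: overlaps bytes 0-99
fe_b.lock("azure-vm-client", "/share/data.bin", 100, 10)  # True: adjacent, no overlap
```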

The second feature introduced with SMB 3.0 support is Persistent Handles. Persistent Handles enhance SMB 2.1 Durable Handles: they remove some of the restrictions of Durable Handles and include extra state, maintained and sent by the client, that Azure File Storage uses after network interruptions and server failures to determine what data it had durably committed before the disconnect. This is especially important for requests that are not idempotent, because if Azure File Storage replayed a request it had already successfully committed, the result could be application-level data corruption. Unlike Durable Handles, Persistent Handles allow transparent reconnects.
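
The replay problem can be illustrated with a toy model. In the sketch below the server records the result of every committed operation under a `(handle_id, op_id)` key, and a request that arrives again after a reconnect returns the recorded result instead of executing twice. The key is a hypothetical simplification of the client-supplied state described above (in MS-SMB2, for example, the client supplies a CreateGuid when it requests a persistent handle); it is not Azure File Storage's actual mechanism.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass
class PersistentHandleServer:
    """Toy server that remembers committed operations per handle (hypothetical model)."""
    files: Dict[str, bytearray] = field(default_factory=dict)
    committed: Dict[Tuple[str, int], int] = field(default_factory=dict)  # (handle, op) -> result

    def append(self, handle_id: str, op_id: int, path: str, data: bytes) -> int:
        """Append `data` to `path` and return the offset it was written at.

        Appends are not idempotent: executing the same request twice would duplicate data.
        """
        key = (handle_id, op_id)
        if key in self.committed:
            # Replay after a reconnect: the first attempt already committed, so return the
            # recorded result rather than appending a second copy.
            return self.committed[key]
        buf = self.files.setdefault(path, bytearray())
        offset = len(buf)
        buf.extend(data)
        self.committed[key] = offset  # in this toy, recorded in the same step as the write
        return offset


srv = PersistentHandleServer()
first = srv.append("handle-1", 7, "/share/log.txt", b"entry\n")
# The connection drops before the client sees the reply; it reconnects and replays op 7.
again = srv.append("handle-1", 7, "/share/log.txt", b"entry\n")
# first == again == 0, and the file still contains a single b"entry\n"
```

A real service must make the write and its replay record durable together; otherwise a failure between the two steps would reintroduce the ambiguity this model is meant to remove.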

For more details on how to use Azure File Storage, please read the Getting Started with Azure File Storage for Windows and Getting Started with Azure File Storage for Linux guides.