We recently announced general availability for In-Memory OLTP in Azure SQL Database, for all Premium databases. In-Memory OLTP is not available in databases in the Standard or Basic pricing tiers today.

In-Memory OLTP can provide great performance benefits for transaction processing, data ingestion, and transient data scenarios. It can also help to save cost: you can improve the number of transactions per second, while increasing headroom for future growth, without increasing the pricing tier of the database.

For a sample order processing workload, Azure SQL Database is able to achieve 75,000 transactions per second (TPS) in a single database, which is an 11X performance improvement from using In-Memory OLTP, compared with traditional tables and stored procedures. Mileage may vary for different workloads. The following table shows the results for running this workload on the highest available pricing tier, and also shows similar benefits from In-Memory OLTP even in lower pricing tiers.*

| Pricing tier | TPS for In-Memory OLTP | TPS for traditional tables | Performance gain |
| --- | --- | --- | --- |
| P15 | 75,000 | 6,800 | 11X |
| P2 | 8,900 | 1,000 | 9X |

Table 1: Performance comparison for a sample order processing workload

* For the run on P15 we used a scale factor of 100, with 400 clients; for the P2 run we used scale factor 5, with 200 clients. Scale factor is a measure of database size, where 100 translates to a 15GB database size, when using memory-optimized tables. For details about the workload visit the SQL Server samples GitHub repository.

In this blog post, we are taking a closer look at how the technology works, where the performance benefits come from, and how to best leverage the technology to realize performance improvements in your applications.

Keep in mind that In-Memory OLTP is for transaction processing, data ingestion, data load and transformation, and transient data scenarios. To improve performance of analytics queries, use Columnstore indexes instead. You will find more details about those in the documentation as well as on this blog, in the coming weeks.

How does In-Memory OLTP work?

In-Memory OLTP can provide great performance gains, for the right workloads. One of our customers, Quorum Business Solutions, managed to double a database’s workload while lowering DTU by 70%. In Azure SQL Database, DTU is a measure of the amount of resources that can be utilized by a given database. By reducing resource utilization, Quorum Business Solutions was able to support a larger workload while also increasing the headroom available for future growth, all without increasing the pricing tier of the database.

Now, where does this performance gain and resource efficiency come from? In essence, In-Memory OLTP improves performance of transaction processing by making data access and transaction execution more efficient, and by removing lock and latch contention between concurrently executing transactions: it is not fast because it is in-memory; it is fast because it is optimized around the data being in-memory. Data storage, access, and processing algorithms were redesigned from the ground up to take advantage of the latest enhancements in in-memory and high concurrency computing.

Now, just because data lives in-memory does not mean you lose it when there is a failure. By default, all transactions are fully durable, meaning that you have the same durability guarantees you get for any other table in Azure SQL Database: as part of transaction commit, all changes are written to the transaction log on disk. If there is a failure at any time after the transaction commits, your data is there when the database comes back online. In Azure SQL Database, we manage high availability for you, so you don’t need to worry about it: if an internal failure occurs in our data centers, and the database fails over to a different internal node, the data of every transaction you committed is there. In addition, In-Memory OLTP works with all high availability and disaster recovery capabilities of Azure SQL Database, like point-in-time restore, geo-restore, active geo-replication, etc.

To leverage In-Memory OLTP in your database, you use one or more of the following types of objects:

- Memory-optimized tables are used for storing user data. You declare a table to be memory-optimized at create time.

- Non-durable tables are used for transient data, either for caching or for intermediate result sets (replacing traditional temp tables). A non-durable table is a memory-optimized table that is declared with DURABILITY=SCHEMA_ONLY, meaning that changes to these tables do not incur any IO. This avoids consuming log IO resources for cases where durability is not a concern.

- Memory-optimized table types are used for table-valued parameters (TVPs), as well as intermediate result sets in stored procedures. These can be used instead of traditional table types. Table variables and TVPs that are declared using a memory-optimized table type inherit the benefits of non-durable memory-optimized tables: efficient data access, and no IO.

- Natively compiled T-SQL modules are used to further reduce the time taken for an individual transaction by reducing CPU cycles required to process the operations. You declare a Transact-SQL module to be natively compiled at create time. At this time, the following T-SQL modules can be natively compiled: stored procedures, triggers and scalar user-defined functions.

In-Memory OLTP is built into Azure SQL Database, and you can use all these objects in any Premium database. And because these objects behave very similarly to their traditional counterparts, you can often gain performance benefits while making only minimal changes to the database and the application. You will find a Transact-SQL script showing an example for each of these types of objects towards the end of this post.

Each database has a cap on the size of memory-optimized tables, which is associated with the number of DTUs of the database or elastic pool. At the time of writing you get one gigabyte of storage for every 125 DTUs or eDTUs. For details about monitoring In-Memory OLTP storage utilization and alerting see: Monitor In-Memory Storage.
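The cap and its monitoring can be sketched in T-SQL. This is a minimal sketch rather than an official procedure: the 250 DTU figure is an assumed example (the size of a P2 database), and the ratio is the one quoted above, which may change over time. The second query reads the `xtp_storage_percent` column of `sys.dm_db_resource_stats`, which reports In-Memory OLTP storage utilization as a percentage of the cap for the database.

```sql
-- Assumed example: a database with 250 DTUs (a P2 database).
DECLARE @dtus INT = 250;

-- One gigabyte of In-Memory OLTP storage for every 125 DTUs.
SELECT @dtus / 125.0 AS in_memory_oltp_cap_gb;

-- Most recent samples of In-Memory OLTP storage utilization,
-- expressed as a percentage of the cap.
SELECT TOP (20)
    end_time,
    xtp_storage_percent
FROM sys.dm_db_resource_stats
ORDER BY end_time DESC;
```

For the assumed 250 DTUs the cap works out to 2 GB. If `xtp_storage_percent` trends towards 100, the data in your memory-optimized tables is approaching the cap for the pricing tier.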

When and where do you use In-Memory OLTP?

In-Memory OLTP may be new to Azure SQL Database, but it has been in SQL Server since 2014. Since Azure SQL Database and SQL Server share the same code base, the In-Memory OLTP in Azure SQL DB is the same as the In-Memory OLTP in SQL Server. Because the technology has been out for a while, we have learned a lot about usage scenarios and application patterns that really see the benefits of In-Memory OLTP.

Resource utilization in the database

If your goal is to achieve improved performance for the users of your application, whether it is in terms of number of requests you can support every second (i.e., workload throughput) or the time it takes to handle a single request (i.e., transaction latency), you need to understand where the performance bottleneck is. In-Memory OLTP is in the database, and thus it improves the performance of operations that happen in the database. If most of the time is spent in your application code or in network communication between your application and the database, any optimization in the database will have a limited impact on the overall performance.

Azure SQL Database provides resource monitoring capabilities, exposed both through the Azure portal and system views such as sys.dm_db_resource_stats. If any of the resources is getting close to the cap for the pricing tier your database is in, this is an indication of the database being a bottleneck. The main types of resources In-Memory OLTP really helps optimize are CPU and Log IO utilization.
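As a rough sketch of checking these two metrics from T-SQL instead of the portal, the query below summarizes CPU and log IO utilization over the last 30 minutes. The 30-minute window is an arbitrary choice for illustration; `sys.dm_db_resource_stats` keeps one row for roughly every 15 seconds and retains about an hour of history, with `end_time` reported in UTC.

```sql
-- Average and peak CPU and log IO utilization over the last 30 minutes,
-- as percentages of the caps for the current pricing tier.
SELECT
    AVG(avg_cpu_percent)       AS avg_cpu_percent,
    MAX(avg_cpu_percent)       AS peak_cpu_percent,
    AVG(avg_log_write_percent) AS avg_log_write_percent,
    MAX(avg_log_write_percent) AS peak_log_write_percent
FROM sys.dm_db_resource_stats
WHERE end_time > DATEADD(MINUTE, -30, GETUTCDATE());
```

Values that stay close to 100 for either metric suggest the database itself is the bottleneck, which is the situation where In-Memory OLTP is most likely to help.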

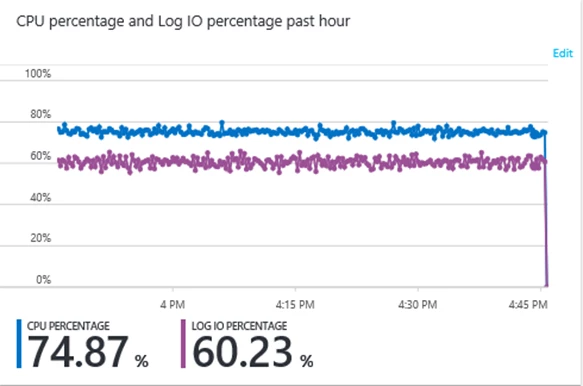

Let’s look at a sample IoT workload* that includes a total of 1 million sensors, where every sensor emits a new reading every 100 seconds. This translates to 10,000 sensor readings needing to be ingested into the database every second. In the tests executed below we are using a database with the P2 pricing tier. The first test uses traditional tables and stored procedures. The following graph, which is a screenshot from the Azure portal, shows resource utilization for these two key metrics.

We see very high CPU and fairly high log IO utilization. Note that the percentages here are relative to the resource caps associated with the DTU count for the pricing tier of the database.

These numbers suggest there is a performance bottleneck in the database. You could allocate more resources to the database by increasing the pricing tier, but you could also leverage In-Memory OLTP. You can reduce resource utilization as follows:

- CPU:

  - Replace tables and table variables with memory-optimized tables and table variables, to benefit from the more efficient data access.

  - Replace key performance-sensitive stored procedures used for transaction processing with natively compiled stored procedures, to benefit from the more efficient transaction execution.

- Log IO:

  - Memory-optimized tables typically incur less log IO than traditional tables, because index operations are not logged.

  - Non-durable tables and memory-optimized table variables and TVPs completely remove log IO for transient data scenarios. Note that traditional temp tables and table variables have some associated log IO.

Resource utilization with In-Memory OLTP

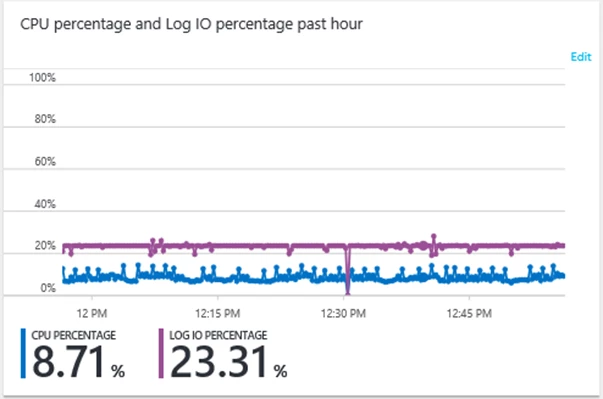

Let’s look at the same workload as above, 10,000 sensor readings ingested per second in a P2 database, but using In-Memory OLTP.

After implementing a memory-optimized table, memory-optimized table type, and a natively compiled stored procedure we see the following resource utilization profile.

As you can see, these optimizations resulted in a more than 2X reduction in log IO and 8X reduction in CPU utilization, for this workload. Implementing In-Memory OLTP in this workload has provided a number of benefits, including:

- Increased headroom for future growth. In this example workload, the P2 database could accommodate 1 million sensors with each sensor emitting a new reading every 100 seconds. With In-Memory OLTP the same P2 database can now accommodate more than double the number of sensors, or increase the frequency with which sensor readings are emitted.

- A lot of resources are freed up for running queries to analyze the sensor readings, or do other work in the database. And because memory-optimized tables are lock- and latch-free, there is no contention between the write operations and the queries.

- In this example you could even downgrade the database to a P1 and sustain the same workload, with some additional headroom as well. This would mean cutting the cost for operating the database in half.

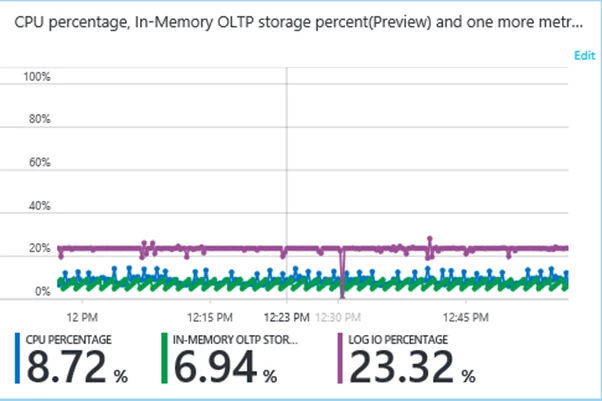

Do keep in mind that the data in memory-optimized tables does need to fit in the In-Memory OLTP storage cap associated with the pricing tier of your database. Let’s see what the In-Memory OLTP storage utilization looks like for this workload:

We see that In-Memory OLTP storage utilization (the green line) is around 7% on average. Since this is a pure data ingestion workload, continuously adding sensor readings to the database, you may wonder, “how come the In-Memory OLTP storage utilization is not increasing over time?”

Well, we are using a memory-optimized temporal table. This means the table maintains its own history, and the history lives on disk. Azure SQL Database takes care of the movement between memory and disk under the hood. For data ingestion workloads that are temporal in nature, this is a great solution to manage the in-memory storage footprint.
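To make the idea concrete, here is a minimal sketch of a memory-optimized temporal table. The table and column names are hypothetical and the schema of the actual sample app may differ; the point is only to show the shape of the declaration, where the current rows are memory-optimized and the history table named in `SYSTEM_VERSIONING` is disk-based.

```sql
-- Hypothetical schema: one current row per sensor, kept in memory.
CREATE TABLE dbo.sensor_reading
(
    sensor_id     INT NOT NULL PRIMARY KEY NONCLUSTERED,
    reading_value FLOAT NOT NULL,
    -- Period columns maintained by the system.
    valid_from    DATETIME2 GENERATED ALWAYS AS ROW START NOT NULL,
    valid_to      DATETIME2 GENERATED ALWAYS AS ROW END NOT NULL,
    PERIOD FOR SYSTEM_TIME (valid_from, valid_to)
)
WITH (MEMORY_OPTIMIZED = ON,
      DURABILITY = SCHEMA_AND_DATA,
      SYSTEM_VERSIONING = ON (HISTORY_TABLE = dbo.sensor_reading_history));
GO

-- Each update replaces the current row; the previous version
-- becomes part of the on-disk history.
INSERT dbo.sensor_reading (sensor_id, reading_value) VALUES (42, 20.5);
UPDATE dbo.sensor_reading SET reading_value = 21.0 WHERE sensor_id = 42;

-- Current and historical readings for one sensor.
SELECT sensor_id, reading_value, valid_from, valid_to
FROM dbo.sensor_reading FOR SYSTEM_TIME ALL
WHERE sensor_id = 42
ORDER BY valid_from;
GO
```

With a layout like this, the in-memory footprint is bounded by the number of sensors rather than the number of readings, which is consistent with the flat storage utilization described above.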

* To replicate this experiment, change the app.config in the sample app as follows: commandDelay=1 and enableShock=0. In addition, to recreate the “before” picture, change the table and table type to disk-based (i.e., MEMORY_OPTIMIZED=OFF) and remove NATIVE_COMPILATION and ATOMIC from the stored procedure.

Usage scenarios for In-Memory OLTP

As noted at the top of this post, In-Memory OLTP is not a magic go-fast button, and is not suitable for all workloads. For example, memory-optimized tables will not really bring down your CPU utilization if most of the queries are performing aggregation over large ranges of data – Columnstore helps for that scenario.

Here is a list of scenarios and application patterns where we have seen customers be successful with In-Memory OLTP. Note that these apply equally to SQL Server and Azure SQL Database, since the underlying technology is the same.

High-throughput and low-latency transaction processing

This is really the core scenario for which we built In-Memory OLTP: support large volumes of transactions, with consistent low latency for individual transactions.

Common workload scenarios are: trading of financial instruments, sports betting, mobile gaming, and ad delivery. Another common pattern we’ve seen is a “catalog” that is frequently read and/or updated. One example is where you have large files, each distributed over a number of nodes in a cluster, and you catalog the location of each shard of each file in a memory-optimized table.

Implementation considerations

Use memory-optimized tables for your core transaction tables, i.e., the tables with the most performance-critical transactions. Use natively compiled stored procedures to optimize execution of the logic associated with the business transaction. The more of the logic you can push down into stored procedures in the database, the more benefit you will see from In-Memory OLTP.

To get started in an existing application, use the transaction performance analysis report to identify the objects you want to migrate, and use the memory-optimization and native compilation advisors to help with migration.

Data ingestion, including IoT (Internet-of-Things)

In-Memory OLTP is really good at ingesting large volumes of data from many different sources at the same time. And it is often beneficial to ingest data into a SQL database compared with other destinations, because SQL makes running queries against the data really fast, and allows you to get real-time insights.

Common application patterns are:

- Ingesting sensor readings and events, to allow notification, as well as historical analysis.

- Managing batch updates, even from multiple sources, while minimizing the impact on the concurrent read workload.

Implementation considerations

Use a memory-optimized table for the data ingestion. If the ingestion consists mostly of inserts (rather than updates) and the In-Memory OLTP storage footprint of the data is a concern, either

- Use a job to regularly batch-offload data to a disk-based table with a Clustered Columnstore index; or

- Use a temporal memory-optimized table to manage historical data – in this mode, historical data lives on disk, and data movement is managed by the system.
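The first option, a periodic batch offload, might look roughly like the sketch below. All object names and the 10-minute cutoff are hypothetical, and scheduling the job is left out. The `WITH (SNAPSHOT)` hints are there because the explicit transaction touches both a memory-optimized table and a disk-based table.

```sql
-- Hypothetical ingestion table (memory-optimized).
CREATE TABLE dbo.reading_ingest
(
    reading_id    BIGINT IDENTITY PRIMARY KEY NONCLUSTERED,
    sensor_id     INT NOT NULL,
    reading_time  DATETIME2 NOT NULL,
    reading_value FLOAT NOT NULL,
    INDEX ix_reading_time NONCLUSTERED (reading_time)
)
WITH (MEMORY_OPTIMIZED = ON);
GO

-- Hypothetical archive table (disk-based, clustered columnstore).
CREATE TABLE dbo.reading_archive
(
    sensor_id     INT NOT NULL,
    reading_time  DATETIME2 NOT NULL,
    reading_value FLOAT NOT NULL,
    INDEX cci_reading_archive CLUSTERED COLUMNSTORE
);
GO

-- Body of the offload job: move readings older than the cutoff.
DECLARE @cutoff DATETIME2 = DATEADD(MINUTE, -10, SYSUTCDATETIME());

BEGIN TRANSACTION;

    INSERT dbo.reading_archive (sensor_id, reading_time, reading_value)
    SELECT sensor_id, reading_time, reading_value
    FROM dbo.reading_ingest WITH (SNAPSHOT)
    WHERE reading_time < @cutoff;

    DELETE FROM dbo.reading_ingest WITH (SNAPSHOT)
    WHERE reading_time < @cutoff;

COMMIT TRANSACTION;
GO
```

Copying and deleting in one transaction keeps the two tables consistent: a reading is either still in the ingestion table or already in the archive, never in both or neither.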

The following sample is a smart grid application that uses a temporal memory-optimized table, a memory-optimized table type, and a natively compiled stored procedure, to speed up data ingestion, while managing the In-Memory OLTP storage footprint of the sensor data: release and source code.

Caching and session state

The In-Memory OLTP technology makes SQL really attractive for maintaining session state (e.g., for an ASP.NET application) and for caching.

ASP.NET session state is a very successful use case for In-Memory OLTP. With SQL Server, one customer was able to achieve 1.2 million requests per second. In the meantime they have started using In-Memory OLTP for the caching needs of all mid-tier applications in the enterprise. Details: https://blogs.msdn.microsoft.com/sqlcat/2016/10/26/how-bwin-is-using-sql-server-2016-in-memory-oltp-to-achieve-unprecedented-performance-and-scale/

Implementation considerations

You can use non-durable memory-optimized tables as a simple key-value store by storing a BLOB in a varbinary(max) column. Alternatively, you can implement a semi-structured cache with JSON support in Azure SQL Database. Finally, you can create a full relational cache through non-durable tables with a full relational schema, including various data types and constraints.
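As an illustration of the simple key-value variant, here is a minimal sketch. The table name, key format, and bucket count are assumptions made for the example; in practice you would size `BUCKET_COUNT` to the expected number of distinct keys.

```sql
-- Hypothetical key-value cache: non-durable, so writes incur no log IO
-- and the contents are lost when the database restarts or fails over.
CREATE TABLE dbo.kv_cache
(
    cache_key   NVARCHAR(200) NOT NULL
        PRIMARY KEY NONCLUSTERED HASH WITH (BUCKET_COUNT = 131072),
    cache_value VARBINARY(MAX) NOT NULL
)
WITH (MEMORY_OPTIMIZED = ON, DURABILITY = SCHEMA_ONLY);
GO

-- Put: update the entry if it exists, otherwise insert it.
DECLARE @key   NVARCHAR(200)  = N'session:42';
DECLARE @value VARBINARY(MAX) = CAST(N'{"user":"alice"}' AS VARBINARY(MAX));

UPDATE dbo.kv_cache SET cache_value = @value WHERE cache_key = @key;
IF @@ROWCOUNT = 0
    INSERT dbo.kv_cache (cache_key, cache_value) VALUES (@key, @value);

-- Get: point lookup on the hash index.
SELECT cache_value FROM dbo.kv_cache WHERE cache_key = @key;
GO
```

A hash index fits this access pattern because every read and write is an equality lookup on the full key.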

Get started with memory-optimizing ASP.NET session state by leveraging the scripts published on GitHub to replace the objects created by the built-in session state provider.

Tempdb object replacement

Leverage non-durable tables and memory-optimized table types to replace your traditional tempdb-based #temp tables, table variables, and table-valued parameters.

Memory-optimized table variables and non-durable tables typically reduce CPU and completely remove log IO when compared with traditional table variables and #temp tables.
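For a table variable, the change can be as small as the sketch below: declare a memory-optimized table type once, then use it wherever the inline table variable was declared. The type and column names are hypothetical. Note that a memory-optimized table type needs at least one index, which the primary key provides here.

```sql
-- Before: a traditional table variable, backed by tempdb.
-- DECLARE @orders TABLE (order_id INT NOT NULL PRIMARY KEY, amount MONEY NOT NULL);

-- After: a memory-optimized table type, created once.
CREATE TYPE dbo.tt_orders AS TABLE
(
    order_id INT NOT NULL PRIMARY KEY NONCLUSTERED,
    amount   MONEY NOT NULL
)
WITH (MEMORY_OPTIMIZED = ON);
GO

-- The variable is then declared from the type and used as before.
DECLARE @orders dbo.tt_orders;

INSERT @orders (order_id, amount) VALUES (1, 10.00), (2, 25.50);

SELECT SUM(amount) AS total_amount FROM @orders;
GO
```

The rest of the code that reads from or writes to the variable usually does not need to change.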

Case study illustrating benefits of memory-optimized table-valued parameters in Azure SQL Database: https://blogs.msdn.microsoft.com/sqlserverstorageengine/2016/04/07/a-technical-case-study-high-speed-iot-data-ingestion-using-in-memory-oltp-in-azure/

Implementation considerations

To get started see: Improving temp table and table variable performance using memory optimization.

ETL (Extract Transform Load)

ETL workflows often include load of data into a staging table, transformations of the data, and load into the final tables.

Implementation considerations

Use non-durable memory-optimized tables for the data staging. They completely remove all IO, and make data access more efficient.

If you perform transformations on the staging table as part of the workflow, you can use natively compiled stored procedures to speed up these transformations. If you can do these transformations in parallel you get additional scaling benefits from the memory-optimization.

Getting started

The following script illustrates how you create In-Memory OLTP objects in your database.

```sql
-- memory-optimized table
CREATE TABLE dbo.table1
( c1 INT IDENTITY PRIMARY KEY NONCLUSTERED,
  c2 NVARCHAR(MAX))
WITH (MEMORY_OPTIMIZED=ON)
GO

-- non-durable table
CREATE TABLE dbo.temp_table1
( c1 INT IDENTITY PRIMARY KEY NONCLUSTERED,
  c2 NVARCHAR(MAX))
WITH (MEMORY_OPTIMIZED=ON,
      DURABILITY=SCHEMA_ONLY)
GO

-- memory-optimized table type
CREATE TYPE dbo.tt_table1 AS TABLE
( c1 INT IDENTITY,
  c2 NVARCHAR(MAX),
  is_transient BIT NOT NULL DEFAULT (0),
  INDEX ix_c1 HASH (c1) WITH (BUCKET_COUNT=1024))
WITH (MEMORY_OPTIMIZED=ON)
GO

-- natively compiled stored procedure
CREATE PROCEDURE dbo.usp_ingest_table1
  @table1 dbo.tt_table1 READONLY
WITH NATIVE_COMPILATION, SCHEMABINDING
AS
BEGIN ATOMIC
    WITH (TRANSACTION ISOLATION LEVEL=SNAPSHOT,
          LANGUAGE=N'us_english')

  DECLARE @i INT = 1

  WHILE @i > 0
  BEGIN
    INSERT dbo.table1
    SELECT c2
    FROM @table1
    WHERE c1 = @i AND is_transient=0

    IF @@ROWCOUNT > 0
      SET @i += 1
    ELSE
    BEGIN
      INSERT dbo.temp_table1
      SELECT c2
      FROM @table1
      WHERE c1 = @i AND is_transient=1

      IF @@ROWCOUNT > 0
        SET @i += 1
      ELSE
        SET @i = 0
    END
  END

END
GO

-- sample execution of the proc
DECLARE @table1 dbo.tt_table1
INSERT @table1 (c2, is_transient) VALUES (N'sample durable', 0)
INSERT @table1 (c2, is_transient) VALUES (N'sample non-durable', 1)
EXECUTE dbo.usp_ingest_table1 @table1=@table1
SELECT c1, c2 FROM dbo.table1
SELECT c1, c2 FROM dbo.temp_table1
GO
```

A more comprehensive sample leveraging In-Memory OLTP and demonstrating performance benefits can be found at: Install the In-Memory OLTP sample.

The smart grid sample database and workload used for the above illustration of the resource utilization benefits of In-Memory OLTP can be found here: release and source code.

Try In-Memory OLTP in your Azure SQL Database today!