This post is co-authored with Ravi Panchumarthy and Mattson Thieme from Intel.

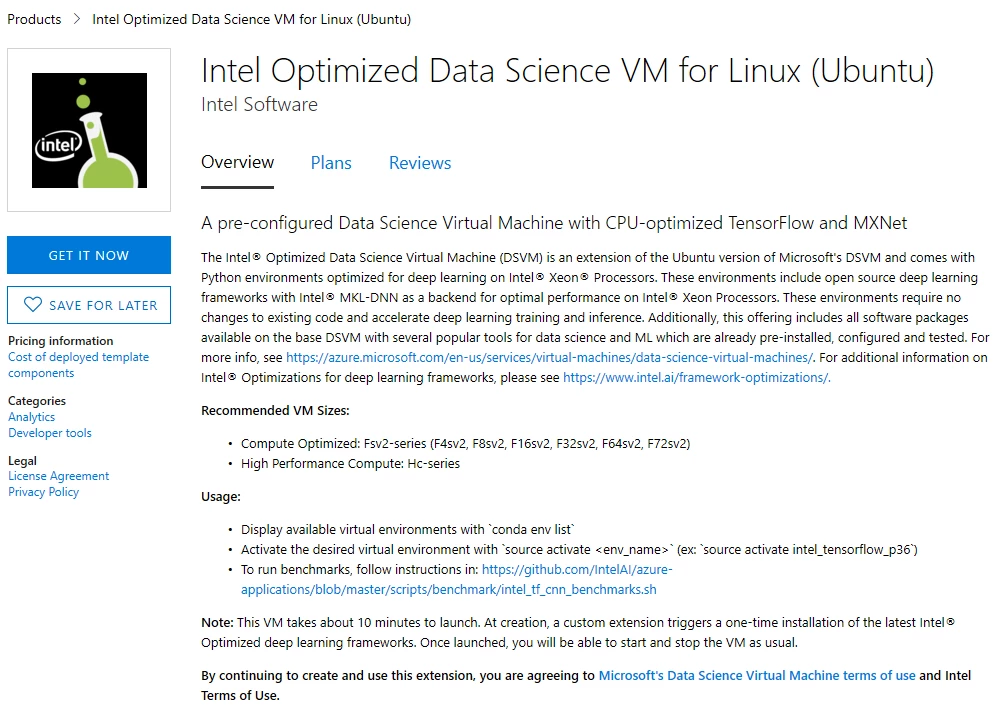

We are happy to announce that Microsoft and Intel are partnering to bring optimized deep learning frameworks to Azure. These optimizations are available in a new offering on the Azure Marketplace called the Intel Optimized Data Science VM for Linux (Ubuntu).

Over the last few years, deep learning has become the state of the art for several machine learning and cognitive applications. Deep learning is a machine learning technique that uses neural networks with multiple layers of non-linear transformations, so that a system can learn from data and build accurate models for a wide range of problems. Computer vision, language understanding, and speech recognition are all examples of deep learning at work today. Innovations in deep neural networks have enabled algorithms in these domains to reach human-level performance in vision, speech recognition, and machine translation. Advances in the field continue to excite data scientists, organizations, and media outlets alike. For many organizations and data scientists, however, doing deep learning well at scale remains challenging because of technical limitations.

Often, default builds of popular deep learning frameworks like TensorFlow are not fully optimized for training and inference on CPUs. In response, Intel has open-sourced framework optimizations for Intel® Xeon® processors. Now, in partnership with Microsoft, Intel is helping you accelerate your own deep learning workloads on Microsoft Azure with this new marketplace offering.

“Microsoft is always looking at ways in which our customers can get the best performance for a wide range of machine learning scenarios on Azure. We are happy to partner with Intel to combine the toolsets from both the companies and offer them in a convenient pre-integrated package on the Azure marketplace for our users”

– Venky Veeraraghavan, Partner Group Program Manager, ML platform team, Microsoft.

Accelerating Deep Learning Workloads on Azure

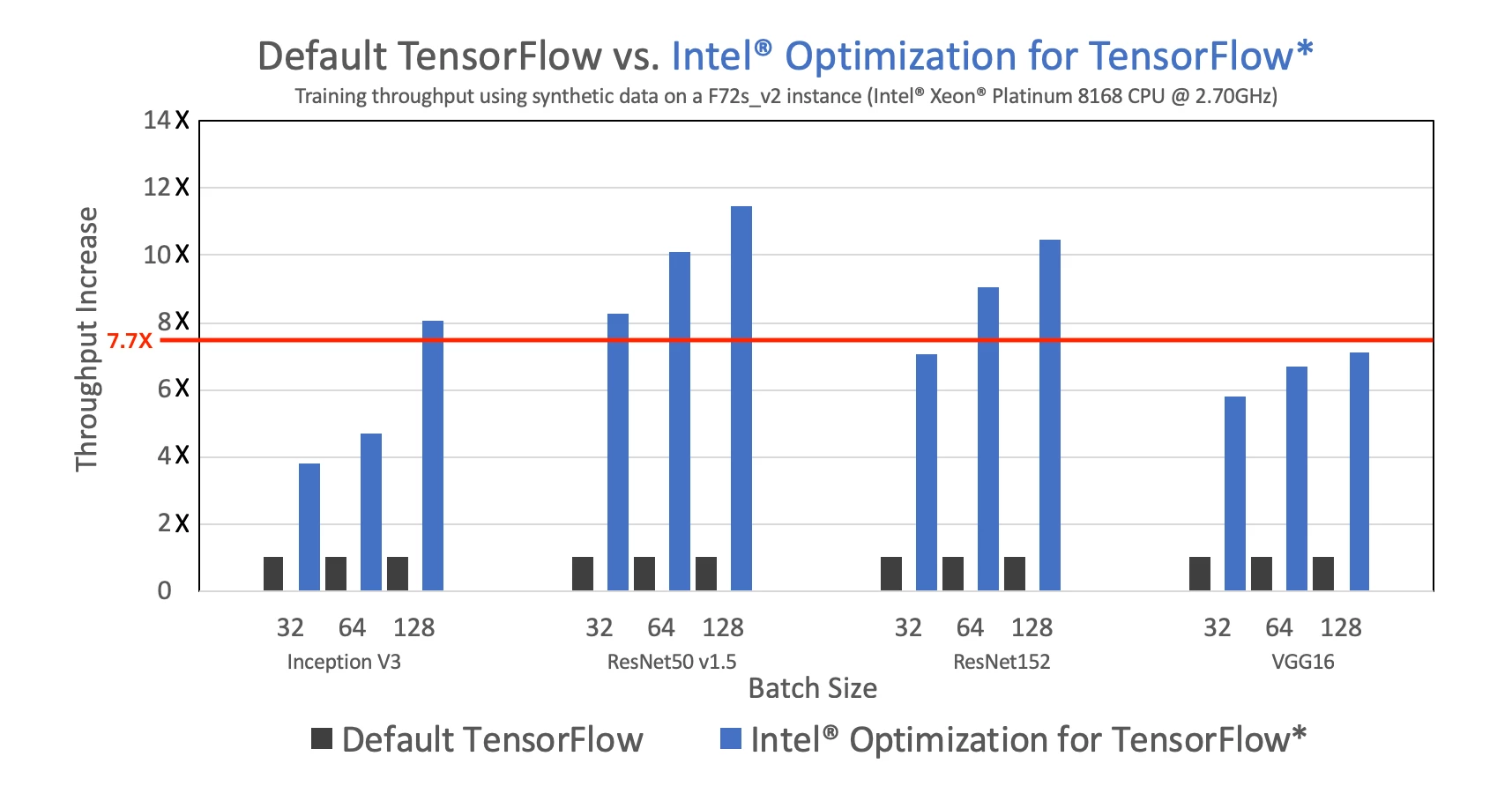

Built on top of the popular Data Science Virtual Machine (DSVM), this offer adds new Python environments that contain Intel’s optimized versions of TensorFlow and MXNet. These optimizations leverage Intel® Advanced Vector Extensions 512 (Intel® AVX-512) and the Intel® Math Kernel Library for Deep Neural Networks (Intel® MKL-DNN) to accelerate training and inference on Intel® Xeon® processors. In Intel’s tests on an Azure F72s_v2 VM instance, the optimizations for TensorFlow yielded an average 7.7X speedup in training throughput across major CNN topologies. You can find more details on the optimization practice here.

For a data scientist or AI developer, this change is largely transparent: you still write code against the standard TensorFlow or MXNet frameworks. To take full advantage of the optimizations, run that code in one of the new Python (conda) environments on the DSVM (intel_tensorflow_p36 or intel_mxnet_p36) on an Intel® Xeon® processor-based F-Series or H-Series VM instance on Azure. Because this product uses the DSVM as its base image, all of the DSVM’s rich tools for data science and machine learning are still available to you. Once you have developed your code and trained your models, you can deploy them for inferencing in the cloud or at the edge.
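As a quick sanity check before a training run, a script can confirm that it was launched from one of these environments. The sketch below is illustrative only: it assumes conda exports the active environment name in the `CONDA_DEFAULT_ENV` variable, and `active_intel_env` is a hypothetical helper, not something shipped with the DSVM.

```python
import os

# Environment names as given in this post.
INTEL_ENVS = ("intel_tensorflow_p36", "intel_mxnet_p36")


def active_intel_env(environ=None):
    """Return the active Intel-optimized conda environment name, or None.

    Assumption: conda sets CONDA_DEFAULT_ENV to the name of the
    currently activated environment.
    """
    env = os.environ if environ is None else environ
    name = env.get("CONDA_DEFAULT_ENV", "")
    return name if name in INTEL_ENVS else None


if __name__ == "__main__":
    found = active_intel_env()
    if found is None:
        # Hypothetical hint; the exact activation command depends on
        # the conda version installed on the VM.
        print("No Intel-optimized environment is active; "
              "try: conda activate intel_tensorflow_p36")
    else:
        print(f"Running inside {found}.")
```

Passing a plain dictionary instead of the real process environment keeps the helper easy to test without activating anything.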

“Intel and Microsoft are committed to democratizing artificial intelligence by making it easy for developers and data scientists to take advantage of Intel hardware and software optimizations on Azure for machine learning applications. The Intel Optimized Data Science Virtual Machine (DSVM) provides up to a 7.7X speedup on existing frameworks without code modifications, benefiting Microsoft and Intel customers worldwide”

– Binay Ackalloor, Director Business Development, AI Products Group, Intel.

Performance

The results below come from Intel’s benchmark tests on an Azure F72s_v2 instance and compare the optimized version of TensorFlow with standard TensorFlow builds.

Figure 1: Intel® Optimization for TensorFlow provides an average of 7.7X increase (average indicated by the red line) in training throughput on major CNN topologies. Run your own benchmarks using tf_cnn_benchmarks. Performance results are based on Intel testing as of 01/15/2019. Find the complete testing configuration here.
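When you run your own benchmarks, one way to summarize them is to compute a speedup per topology and then average those ratios. The sketch below assumes throughput is measured in images per second and uses a simple arithmetic mean; the post does not state how the 7.7X average was calculated. The function names and sample values are hypothetical placeholders, not measured results.

```python
from statistics import mean


def speedup_by_topology(baseline, optimized):
    """Return optimized/baseline throughput ratios for topologies in both runs."""
    ratios = {}
    for name, base in baseline.items():
        if name not in optimized:
            continue  # skip topologies that were not run in both configurations
        if base <= 0:
            raise ValueError(f"baseline throughput for {name!r} must be positive")
        ratios[name] = optimized[name] / base
    return ratios


def average_speedup(baseline, optimized):
    """Arithmetic mean of the per-topology speedups (an assumed definition)."""
    ratios = speedup_by_topology(baseline, optimized)
    if not ratios:
        raise ValueError("no topology appears in both result sets")
    return mean(ratios.values())


if __name__ == "__main__":
    # Made-up placeholder throughputs (images/sec), NOT benchmark results.
    stock = {"topology_a": 10.0, "topology_b": 20.0}
    tuned = {"topology_a": 50.0, "topology_b": 60.0}
    for name, ratio in speedup_by_topology(stock, tuned).items():
        print(f"{name}: {ratio:.1f}X")
    print(f"average: {average_speedup(stock, tuned):.1f}X")
```

Averaging ratios rather than raw throughputs keeps a single fast topology from dominating the summary, which is why the sketch computes each speedup first.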

Getting Started

To get started with the Intel Optimized DSVM, click the offer in the Azure Marketplace, then click “GET IT NOW”. After you answer a few simple questions in the Azure portal, your VM is created with all the DSVM toolsets and the Intel-optimized deep learning frameworks preconfigured and ready to use.

The Intel Optimized Data Science VM is an ideal environment for developing and training deep learning models on Intel Xeon processor-based VM instances on Azure. Microsoft and Intel will continue their long partnership to explore additional AI solutions and to bring framework optimizations to other Azure services, such as the Azure Machine Learning service and Azure IoT Edge.

Next steps

- Create your Intel Optimized Data Science VM instance from the Azure Marketplace.

- Learn more about the Intel Optimized Data Science VM.

- Build AI solutions and deploy machine learning models in production at scale using Azure Machine Learning service.

- New to Azure? Get your free trial.