As AI reaches critical momentum across industries and applications, it becomes essential to ensure the safe and responsible use of AI. AI deployments are increasingly impacted by the lack of customer trust in the transparency, accountability, and fairness of these solutions. Microsoft is committed to the advancement of AI and machine learning (ML), driven by principles that put people first, and tools to enable this in practice.

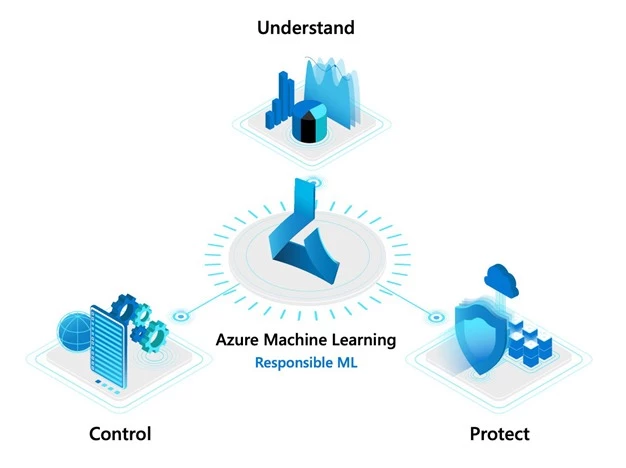

In collaboration with the Aether Committee and its working groups, we are bringing the latest research in responsible AI to Azure. Let’s look at how the new responsible ML capabilities in Azure Machine Learning and our open-source toolkits empower data scientists and developers to understand ML models, protect people and their data, and control the end-to-end ML process.

Understand

As ML becomes deeply integrated into our daily business processes, transparency is critical. Azure Machine Learning helps you to not only understand model behavior but also assess and mitigate unfairness.

Interpret and explain model behavior

Model interpretability capabilities in Azure Machine Learning, powered by the InterpretML toolkit, enable developers and data scientists to understand model behavior and provide model explanations to business stakeholders and customers.

Use model interpretability to:

- Build accurate ML models.

- Understand the behavior of a wide variety of models, including deep neural networks, during both training and inferencing phases.

- Perform what-if analysis to determine the impact on model predictions when feature values are changed.
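A what-if analysis, as in the last bullet above, can be sketched in a few lines. The example below is a minimal illustration in plain Python, not the InterpretML or Azure Machine Learning API. The `toy_fraud_score` model, its weights, and the feature names are all hypothetical stand-ins for a trained model.

```python
from typing import Callable, Dict

Features = Dict[str, float]


def toy_fraud_score(features: Features) -> float:
    """Hypothetical stand-in for a trained model: a fixed linear score."""
    weights = {
        "transactions_per_day": 0.05,
        "account_age_years": -0.1,
        "points_redeemed_k": 0.02,
    }
    bias = 0.2
    return bias + sum(weights[name] * features[name] for name in weights)


def what_if(
    model: Callable[[Features], float],
    features: Features,
    name: str,
    new_value: float,
) -> float:
    """Return the change in prediction when one feature is set to new_value."""
    changed = dict(features)  # copy so the original record is not modified
    changed[name] = new_value
    return model(changed) - model(features)


baseline = {
    "transactions_per_day": 4.0,
    "account_age_years": 2.0,
    "points_redeemed_k": 10.0,
}
delta = what_if(toy_fraud_score, baseline, "transactions_per_day", 8.0)
print(f"Change in score: {delta:+.3f}")
```

The same `what_if` helper works with any callable that maps a feature dictionary to a number, so a real model's prediction function could be substituted for the toy one.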

“Azure Machine Learning helps us build AI responsibly and build trust with our customers. Using the interpretability capabilities in the fraud detection efforts for our loyalty program, we are able to understand models better, identify genuine cases of fraud, and reduce the possibility of erroneous results.”

—Daniel Engberg, Head of Data Analytics and Artificial Intelligence, Scandinavian Airlines

Assess and mitigate model unfairness

A challenge with building AI systems today is the inability to prioritize fairness. Using Fairlearn with Azure Machine Learning, developers and data scientists can leverage specialized algorithms to ensure fairer outcomes for everyone.

Use fairness capabilities to:

- Assess model fairness during both model training and deployment.

- Mitigate unfairness while optimizing model performance.

- Use interactive visualizations to compare a set of recommended models that mitigate unfairness.
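To make "assessing fairness" concrete, one common check compares how often a model predicts the positive outcome for each group. The sketch below computes per-group selection rates and the gap between them in plain Python. It is an illustration of the idea only, not Fairlearn's API, and the function names, predictions, and group labels are made up for the example.

```python
from collections import defaultdict
from typing import Dict, Sequence


def selection_rates(
    predictions: Sequence[int], groups: Sequence[str]
) -> Dict[str, float]:
    """Fraction of positive (1) predictions within each group."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {group: positives[group] / totals[group] for group in totals}


def selection_rate_gap(
    predictions: Sequence[int], groups: Sequence[str]
) -> float:
    """Largest minus smallest selection rate; 0.0 means equal rates."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical binary predictions and the group each record belongs to.
predictions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(predictions, groups)
gap = selection_rate_gap(predictions, groups)
print(rates, gap)
```

A large gap is a signal to investigate, not a verdict. Which fairness metric is appropriate depends on the application, and mitigation typically trades some of this gap against model performance.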

“Azure Machine Learning and its Fairlearn capabilities offer advanced fairness and explainability that have helped us deploy trustworthy AI solutions for our customers, while enabling stakeholder confidence and regulatory compliance.”

—Alex Mohelsky, EY Canada Partner and Advisory Data, Analytic and AI Leader

Protect

ML is increasingly used in scenarios that involve sensitive information, such as medical patient data or census data. Current practices, such as redacting or masking data, can be limiting for ML. To address this issue, differential privacy and confidential machine learning techniques can be used to help organizations build solutions while maintaining data privacy and confidentiality.

Prevent data exposure with differential privacy

Using the new differential privacy toolkit with Azure Machine Learning, data science teams can build ML solutions that preserve privacy and help prevent reidentification of an individual’s data. These differential privacy techniques have been developed in collaboration with researchers at Harvard’s Institute for Quantitative Social Science (IQSS) and School of Engineering.

Differential privacy protects sensitive data by:

- Injecting statistical noise into data to help prevent disclosure of private information, without significant accuracy loss.

- Managing exposure risk by tracking the information budget used by individual queries and limiting further queries as appropriate.
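The two mechanisms above can be sketched together. The example below is a minimal illustration in plain Python, not the differential privacy toolkit's API. It assumes the Laplace mechanism for a counting query (sensitivity 1) and simple additive budget accounting. The `PrivacyBudget` class, the function names, and the sample data are hypothetical.

```python
import random
from typing import Callable, Sequence


class PrivacyBudget:
    """Tracks cumulative epsilon and refuses queries once it is used up."""

    def __init__(self, total_epsilon: float) -> None:
        self.total_epsilon = total_epsilon
        self.spent = 0.0

    def spend(self, epsilon: float) -> None:
        if epsilon <= 0:
            raise ValueError("epsilon must be positive")
        # Small tolerance guards against floating-point round-off.
        if self.spent + epsilon > self.total_epsilon + 1e-12:
            raise RuntimeError("privacy budget exhausted")
        self.spent += epsilon


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noisy_count(
    values: Sequence[float],
    predicate: Callable[[float], bool],
    epsilon: float,
    budget: PrivacyBudget,
    rng: random.Random,
) -> float:
    """Answer a counting query with Laplace noise, charging the budget first."""
    budget.spend(epsilon)
    true_count = sum(1 for value in values if predicate(value))
    # A count changes by at most 1 per individual, so the scale is 1 / epsilon.
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(0)  # seeded only to make the sketch repeatable
budget = PrivacyBudget(total_epsilon=1.0)
ages = [34, 51, 29, 62, 45, 38]  # hypothetical sensitive values

first = noisy_count(ages, lambda a: a >= 40, 0.5, budget, rng)
second = noisy_count(ages, lambda a: a < 40, 0.5, budget, rng)
try:
    noisy_count(ages, lambda a: a > 60, 0.5, budget, rng)
except RuntimeError as err:
    print(f"Third query refused: {err}")
```

A smaller epsilon per query adds more noise but lets more queries fit in the same total budget. A production system would use a vetted library rather than this sketch, since naive floating-point noise sampling has known weaknesses.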

Safeguard data with confidential machine learning

In addition to data privacy, organizations are looking to ensure security and confidentiality of all ML assets.

To enable secure model training and deployment, Azure Machine Learning provides a strong set of data and networking protection capabilities. These include support for Azure Virtual Networks, private links to connect to ML workspaces, dedicated compute hosts, and customer-managed keys for encryption in transit and at rest.

Building on this secure foundation, Azure Machine Learning also enables data science teams at Microsoft to build models over confidential data in a secure environment, without being able to see the data. All ML assets are kept confidential during this process. This approach is fully compatible with open-source ML frameworks and a wide range of hardware options. We are excited to bring these confidential machine learning capabilities to all developers and data scientists later this year.

Control

To build responsibly, the ML development process should be repeatable, reliable, and hold stakeholders accountable. Azure Machine Learning enables decision makers, auditors, and everyone in the ML lifecycle to support a responsible process.

Track ML assets using audit trail

Azure Machine Learning provides capabilities to automatically track lineage and maintain an audit trail of ML assets. Details such as run history, training environment, and data and model explanations are all captured in a central registry, allowing organizations to meet various audit requirements.

Increase accountability with model datasheets

Datasheets provide a standardized way to document ML information such as motivations, intended uses, and more. At Microsoft, we led research on datasheets to provide transparency to data scientists, auditors, and decision makers. We are also working with the Partnership on AI and leaders across industry, academia, and government to develop recommended practices and a process called ABOUT ML. The custom tags capability in Azure Machine Learning can be used to implement datasheets today, and over time we will release additional features.
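One way to picture a datasheet implemented with custom tags is as a small structured record flattened into string key-value pairs. The sketch below is an assumption about how that could look, not a documented schema. The `ModelDatasheet` fields, the `datasheet.` prefix, and the sample values are hypothetical, and the resulting dictionary is meant to be passed wherever the service accepts tags.

```python
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class ModelDatasheet:
    """Hypothetical datasheet fields; adapt to your organization's template."""

    motivation: str
    intended_uses: List[str]
    out_of_scope_uses: List[str] = field(default_factory=list)
    training_data: str = ""


def to_tags(sheet: ModelDatasheet, prefix: str = "datasheet.") -> Dict[str, str]:
    """Flatten a datasheet into string tags, skipping empty fields."""
    tags: Dict[str, str] = {}
    for key, value in asdict(sheet).items():
        if isinstance(value, list):
            value = "; ".join(value)  # tags are assumed to be plain strings
        if value:
            tags[prefix + key] = value
    return tags


sheet = ModelDatasheet(
    motivation="Detect fraudulent loyalty-program transactions",
    intended_uses=["Flag transactions for human review"],
    out_of_scope_uses=["Automated account closure"],
)
tags = to_tags(sheet)
for name, value in tags.items():
    print(f"{name} = {value}")
```

Keeping the datasheet as a typed record and generating tags from it means the same source can also feed documentation or audit reports.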

Start innovating responsibly

In addition to the new capabilities in Azure Machine Learning and our open-source tools, we have also developed principles for the responsible use of AI. The new responsible ML innovations and resources are designed to help developers and data scientists build more reliable, fairer, and more trustworthy ML. Join us today and begin your journey with responsible ML!

Additional resources

- Learn more about responsible ML.

- Get started with a free trial of Azure Machine Learning.

- Learn more about Azure Machine Learning and follow the quick start guides and tutorials.

A previous version of this story referred to the differential privacy toolkit as WhiteNoise.