This post was co-authored by Maneesh Sah, Corporate Vice President, Azure Storage Engineering.

Containers are the new virtual machines (VMs). Whether you are a CTO, an enterprise architect, a DevOps lead, or an application developer, you have either already started containerizing your applications or are eager to begin, in order to maximize the benefits of scale, flexibility, and cost. With Kubernetes at the helm, containers have rapidly become a hotbed of innovation and a critical area of transformation for enterprises and startups alike. After an initial focus on stateless containers, running high-scale stateful workloads on containers has now become the norm. To run business-critical, enterprise-grade applications on Kubernetes in the cloud, customers need highly scalable, cost-efficient, and performant storage that is built for containers and supports them intrinsically. Today, we are excited to announce the preview of Azure Container Storage, the industry’s first platform-managed container native storage service in the public cloud, providing end-to-end storage management and orchestration for stateful applications to run efficiently at scale on Azure.

Why Azure Container Storage?

With the rapid adoption of Kubernetes, we see a surge of production workloads, both cloud-first and app modernization, that need container-native persistent storage for databases (such as MySQL), big data (such as Elasticsearch), messaging applications (such as Kafka), and continuous integration and continuous delivery (CI/CD) systems (such as Jenkins). To run these stateful applications, customers need operational simplicity to deploy and scale storage that is tightly coupled with the containerized applications. Today, however, customers must choose between VM-centric cloud storage options retrofitted to containers and open-source container storage solutions that they deploy and manage themselves in the cloud. Either choice leads to significant operational overhead, scaling bottlenecks, and high cost.

To provide customers with a seamless end-to-end experience, container native storage needs to enable:

- Seamless volume mobility across the cluster to maximize pod availability without bottlenecks on volume attaches and deletes.

- Rapid scaling of a large number of volumes as application pods scale up or scale out as needed.

- Optimal price-performance for any volume size, especially small volumes that require higher input/output operations per second (IOPS).

- Simple and consistent volume management experience across backing storage types to match workload requirements, such as extremely low latency ephemeral disks versus persistent or scalable remote storage.

Azure Container Storage addresses these requirements by enabling customers to focus their attention on running workloads and applications rather than managing storage. Azure Container Storage is our first step towards providing a transformative storage experience. As a critical addition to Azure’s suite of container services, it will help organizations of all sizes to streamline their containerization efforts and improve their overall storage management capabilities.

Leveraging Azure Container Storage

Azure Container Storage is a purpose-built, software-defined storage solution that delivers a consistent control plane across multiple backing storage options to meet the needs of stateful container applications. This fully managed service provides a volume management layer for these applications, enabling storage orchestration, data management, Kubernetes-aware data protection, and rule-based performance scaling.

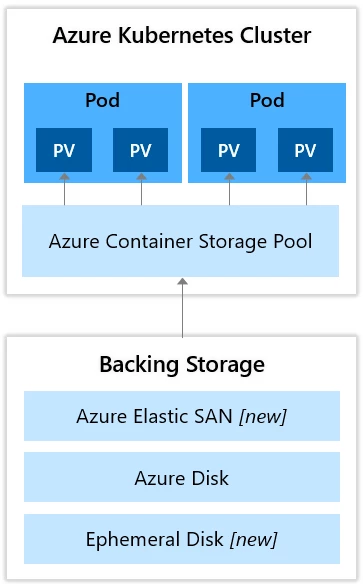

Aligning with open-source container native storage approaches, Azure Container Storage runs microservices-based storage controllers in Kubernetes to abstract the storage management layer from pods and backing storage, enabling portability across Kubernetes nodes and the ability to mount different storage options, as shown in the figure.

Azure Container Storage components include:

- A Storage Pool, which is a collection of storage resources grouped and presented as a unified storage entity for your AKS cluster.

- A data services layer, responsible for replication, encryption, and other add-on functionality absent in the underlying storage provider.

- A protocol layer, which exposes provisioned volumes to application pods via the NVMe-oF protocol.

With this approach, Azure Container Storage offers several differentiated experiences to customers on Azure, including:

Lowering the total cost of ownership (TCO) by providing the ability to scale IOPS on smaller volume sizes, which supports containerized applications that have dynamic and fluctuating input/output (IO) requirements. This is enabled by shared provisioning of capacity and performance on a storage pool, which multiple volumes can draw on. With shared provisioning, customers can maximize performance across application containers while keeping TCO down. Instead of allocating capacity and IOPS per persistent volume (PV), which commonly leads to overprovisioning, customers can create PVs that dynamically share resources from a Storage Pool.
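To make the overprovisioning point concrete, here is a minimal sketch that compares the two sizing approaches. It is illustrative arithmetic only: the volume names, the IOPS figures, and the helper functions `per_volume_iops` and `shared_pool_iops` are all hypothetical, and nothing here reflects actual Azure Container Storage limits, pricing, or APIs.

```python
from typing import Dict, List

# Hypothetical IOPS demand for three persistent volumes over four time slots.
# These numbers are invented for illustration; they are not Azure figures.
demand: Dict[str, List[int]] = {
    "pv-mysql": [500, 3000, 400, 600],
    "pv-kafka": [2500, 300, 500, 400],
    "pv-jenkins": [200, 400, 2800, 300],
}


def per_volume_iops(demand: Dict[str, List[int]]) -> int:
    """IOPS to provision when every PV is sized for its own peak."""
    return sum(max(series) for series in demand.values())


def shared_pool_iops(demand: Dict[str, List[int]]) -> int:
    """IOPS to provision when one pool is sized for the combined peak."""
    combined = [sum(slot) for slot in zip(*demand.values())]
    return max(combined)


print(per_volume_iops(demand))   # 8300
print(shared_pool_iops(demand))  # 3700
```

Because the three hypothetical workloads peak at different times, the pool needs to cover only the busiest combined moment rather than the sum of every individual peak. If all volumes peaked at once, the two figures would be equal and sharing would bring no savings.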

Rapid scale-out of stateful pods, achieved by using remote network protocols like NVMe-oF and iSCSI to mount PVs, enabling effortless scaling on AKS across compute and storage. This is especially beneficial for container deployments that start small and iteratively add resources. Responsiveness is key to ensuring that applications are not starved or disrupted, either during initialization or when scaling in production. Application resiliency is equally important, because pod respawns across the cluster require rapid PV movement. Leveraging remote network protocols allows us to couple tightly with the pod lifecycle to support highly resilient, high-scale stateful applications on AKS.

A simplified, consistent volume management interface backed by local and remote storage options, enabling customers to allocate and use storage via the Kubernetes control plane. This means that customers can use ephemeral disks, Azure Disks, and Azure Elastic SAN through a unified management interface to meet workload needs. For instance, ephemeral storage may be preferable for Cassandra to achieve the lowest latency, while Azure Disks is suitable for PostgreSQL or other database solutions. This unified experience simplifies the management of persistent volumes while addressing the broad range of performance requirements of containerized workloads.

Fully integrated day-2 experiences, including data protection, cross-cluster recovery, and observability, providing operational simplicity for customers who today need to create custom scripts or stitch together disparate tools. Customers can orchestrate Kubernetes-aware backup of the persistent volumes integrated with AKS generally available to streamline the end-to-end experiences for running stateful container workloads on Azure.

Refer to the technical community blog post for more details about Azure Container Storage.

Getting started

Sign up to participate in the preview and deploy your first stateful container application today.

Refer to the Azure Container Storage documentation to learn more about the service.

We are confident that this new offering will significantly accelerate app modernization and cloud migration. We look forward to hearing your feedback. Please email us at azcontainerstorage@microsoft.com with any questions.