Achieving scalability in quantum computing

As work on building a quantum computer continues, challenges from across industries await solutions from this new computational power. One high-impact problem that a quantum computer could help solve is finding an alternative to today’s fertilizer production. Making fertilizer consumes a notable percentage of the world’s annual production of natural gas, which means high cost, high energy use, and substantial greenhouse gas emissions. Quantum computers could help identify an alternative by analyzing nitrogenase, an enzyme found in nitrogen-fixing bacteria that converts nitrogen to ammonia naturally. To address this problem, a quantum computer would require at least 200 fault-free (logical) qubits, far beyond the small quantum systems of today. To find a solution, quantum computers must scale up. The challenge, however, is that scaling a quantum computer isn’t as simple as adding more qubits.

Building a quantum computer differs greatly from building a classical computer. The underlying physics, the operating environment, and the engineering each pose their own obstacles. With so many unique challenges, how can a quantum computer scale in a way that makes it possible to solve some of the world’s most challenging problems?

Navigating obstacles

Most quantum computers require temperatures colder than those found in deep space. To reach these temperatures, the qubits and their supporting hardware are housed in a dilution refrigerator, highly specialized equipment that cools the qubits to just above absolute zero. Because standard electronics don’t work at these temperatures, most quantum computers today use room-temperature control. With this method, control electronics outside the refrigerator send signals through cables to the qubits inside. This method ultimately hits a roadblock: every cable carries heat into the refrigerator, so the sheer number of cables limits how many signal lines the system can support, which in turn restricts the number of qubits that can be added.

As more control electronics are added, more effort is needed to maintain the very low temperature the system requires. Increasing both the size of the refrigerator and its cooling capacity is one option; however, this would require additional logistics to interface with the room-temperature electronics and may not be feasible.

Another alternative would be to break the system into separate refrigerators. Unfortunately, this isn’t ideal either because the transfer of quantum data between the refrigerators is likely to be slow and inefficient.

At this stage in the development of quantum computers, size is therefore limited by the cooling capacity of the specialized refrigerator. Given these parameters, the electronics controlling the qubits must be as efficient as possible.
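
The cooling limit described above can be read as a simple budget: the refrigerator can remove only so much heat, and every cable adds to the load. The sketch below is a rough illustration of that arithmetic, not a model of any real system. The function name and every number in it are hypothetical placeholders chosen for illustration, not measurements.

```python
def max_qubits(cooling_budget_uw: int, heat_per_cable_uw: int, cables_per_qubit: int) -> int:
    """Largest qubit count whose cabling heat fits within the cooling budget.

    All quantities are in microwatts and are hypothetical placeholders.
    """
    heat_per_qubit_uw = heat_per_cable_uw * cables_per_qubit
    return cooling_budget_uw // heat_per_qubit_uw


# Placeholder values for illustration only, not measurements.
budget_uw = 1000

# Baseline: each qubit needs 2 cables, each cable leaks 2 microwatts.
print(max_qubits(budget_uw, heat_per_cable_uw=2, cables_per_qubit=2))  # 250

# Halving the heat per cable doubles the number of qubits that fit.
print(max_qubits(budget_uw, heat_per_cable_uw=1, cables_per_qubit=2))  # 500
```

Under these assumptions, the qubit count grows only if the cooling budget grows or the heat per qubit shrinks, which is why efficient control electronics matter.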

Physical qubits, logical qubits, and the role of error correction

By nature, qubits are fragile. They require a precise environment and state to operate correctly, and they’re highly prone to outside interference. This interference is referred to as ‘noise’, which is a consistent challenge and a well-known reality of quantum computing. As a result, error correction plays a significant role.

The qubits physically built into the quantum computer are referred to as ‘physical qubits’. Error correction works by grouping many of these fragile physical qubits to create a smaller number of usable qubits that can withstand noise long enough to complete the computation. These stronger, more stable qubits used in the computation are referred to as ‘logical qubits’.

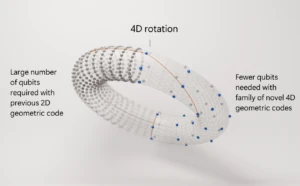

In classical computing, noisy bits are fixed through redundancy (for example, parity checks and Hamming codes), which makes it possible to correct errors as they occur. A similar process occurs in quantum computing, but it is more difficult to achieve: quantum states cannot simply be copied, and measuring them directly disturbs them. As a result, significantly more physical qubits are needed than the number of logical qubits required for the computation. The ratio of physical to logical qubits is influenced by two factors: the type of qubits used in the quantum computer, and the overall size of the quantum computation performed. Because scaling the system size is known to be difficult, reducing the ratio of physical to logical qubits is critical. This means that instead of just aiming for more qubits, it is crucial to aim for better qubits.
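
To make the classical side of this comparison concrete, here is a minimal sketch of a Hamming(7,4) code, which protects 4 data bits with 3 extra parity bits and can correct any single flipped bit. It is a classical analogy only; quantum error correction uses different constructions, and the function names `encode` and `decode` are chosen here for illustration.

```python
def encode(data):
    """Encode 4 data bits into a 7-bit Hamming codeword.

    Layout (positions 1..7): p1, p2, d1, p4, d2, d3, d4.
    """
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def decode(received):
    """Correct at most one flipped bit and return the 4 data bits."""
    c = list(received)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The syndrome, read as a binary number, is the 1-based error position.
    error_position = s1 + 2 * s2 + 4 * s4
    if error_position:
        c[error_position - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]


message = [1, 0, 1, 1]
codeword = encode(message)

noisy = list(codeword)
noisy[4] ^= 1  # simulate noise flipping one bit in transit

print(decode(noisy) == message)  # True
```

Here 7 stored bits carry 4 usable bits, a loose classical counterpart to the ratio of physical to logical qubits.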

Stability and scale with a topological qubit

The topological qubit is a type of qubit that offers more immunity to noise than many traditional types of qubits. Because topological qubits are more robust against outside interference, fewer total physical qubits are needed than in other quantum systems. This improved performance reduces the ratio of physical to logical qubits, which in turn creates the ability to scale.

As we know from Schrödinger’s cat, outside interactions can destroy quantum information. An interaction with any stray particle, such as an electron, a photon, or a cosmic ray, can cause the qubits in a quantum computer to decohere.

There is a way to guard against this: the quantum information carried by an electron can be split and stored in separate places, creating an increased level of protection for that information. This form of topological protection relies on the Majorana quasi-particle. The Majorana particle was predicted in 1937, and signatures of the quasi-particle were first reported in 2012 by a lab in Delft, the Netherlands. This separation of the quantum information creates a stable, robust building block for a qubit. The topological qubit provides a better foundation with lower error rates, reducing the ratio of physical to logical qubits. With this reduced ratio, more logical qubits can fit inside the refrigerator, creating the ability to scale.

If topological qubits were used in the example of nitrogenase simulation, the required 200 logical qubits would be built out of thousands of physical qubits. However, if more traditional types of qubits were used, tens or even hundreds of thousands of physical qubits would be needed to achieve 200 logical qubits. The topological qubit’s improved performance causes this dramatic difference; fewer physical qubits are needed to achieve the logical qubits required.
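
The comparison above comes down to multiplying the number of logical qubits by the physical-to-logical ratio. The sketch below works through that arithmetic. The ratios used (10, 100, and 1,000 physical qubits per logical qubit) are assumptions picked only to match the orders of magnitude mentioned in the text; they are not published figures for any hardware.

```python
def physical_qubits_needed(logical_qubits: int, physical_per_logical: int) -> int:
    """Total physical qubits for a given logical count and overhead ratio."""
    return logical_qubits * physical_per_logical


LOGICAL_QUBITS = 200  # the nitrogenase example from the text

# Hypothetical overhead ratios, chosen for illustration only.
assumed_ratios = {
    "topological (assumed 10:1)": 10,
    "traditional, low end (assumed 100:1)": 100,
    "traditional, high end (assumed 1000:1)": 1000,
}

for label, ratio in assumed_ratios.items():
    total = physical_qubits_needed(LOGICAL_QUBITS, ratio)
    print(f"{label}: {total:,} physical qubits")
```

With these assumed ratios, the totals range from 2,000 to 200,000 physical qubits for the same 200 logical qubits, which is the gap the paragraph describes.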

Developing a topological qubit is extremely challenging and is still underway, but these benefits make the pursuit well worth the effort.

A solid foundation to tackle problems unsolved by today’s computers

A significant number of logical qubits are required to address some of the important problems currently unsolvable by today’s computers. Yet common approaches to quantum computing require massive numbers of physical qubits in order to reach these quantities of logical qubits—creating a huge roadblock to scalability. Instead, a topological approach to quantum computing requires far fewer physical qubits than other quantum systems, making scalability much more achievable.

Providing a more solid foundation, the topological approach offers robust, stable qubits, and helps to bring the solutions to some of our most challenging problems within reach.

Additional resources

- Follow along on our path to scalable quantum computing with the Microsoft Quantum newsletter

- Learn how we’re empowering the quantum revolution on the Microsoft Quantum website

- Get started with quantum development by downloading the Microsoft Quantum Development Kit