Recently we published a Windows Azure Network Security Whitepaper (download from here) that gives insights into how customers can take advantage of the platform’s native features to best protect their information assets.

This post from Walter Myers, Principal Consultant, expands on this whitepaper and describes how to isolate VMs inside a Virtual Network at the network level.

Introduction

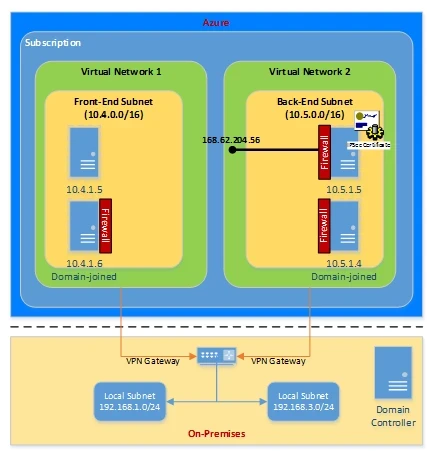

Application isolation is an important concern in enterprise environments, as enterprise customers seek to protect various environments from unauthorized or unwanted access. This includes the classic front-end and back-end scenario where machines in a particular back-end network or sub-network may only allow certain clients or other computers to connect to a particular endpoint based on a whitelist of IP addresses. These scenarios can be readily implemented in Windows Azure whether client applications access virtual machine application servers from the internet, within the Azure environment, or from on-premises through a VPN connection.

Machine Isolation Options

There are three basic options to be discussed in this paper where machine isolation may be implemented on the Windows Azure platform:

- Between machines deployed to a single virtual network

- Between machines deployed to distinct virtual networks

- Between machines deployed to distinct virtual networks where a VPN connection has been established from on-premises with both virtual networks

These options will be detailed in the sections that follow.

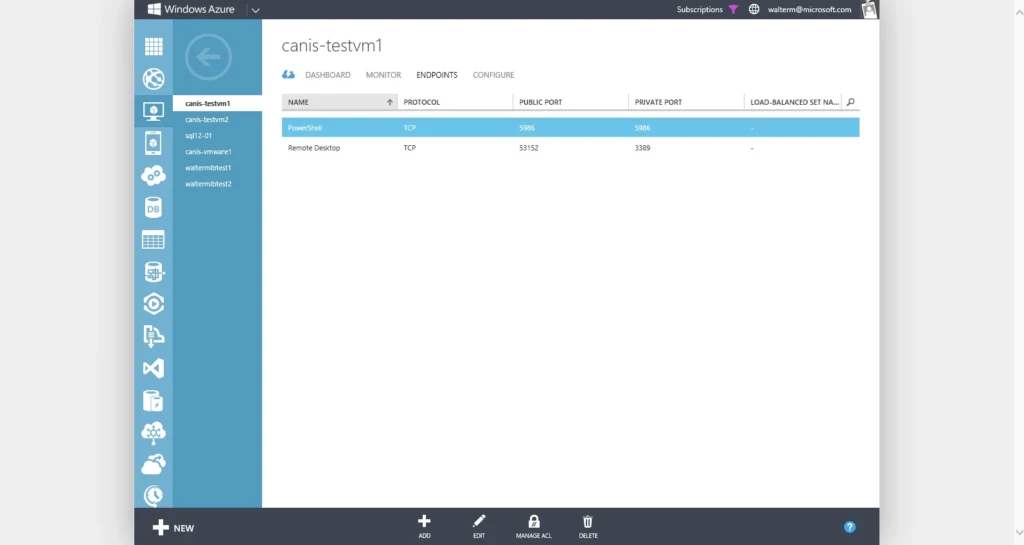

By default, Windows Server virtual machines created from the gallery will have two public endpoints, specifically RDP and Remote PowerShell connections. There will be no other public endpoints except additional endpoints that are added by the administrator. These endpoints and any others created by the administrator may be secured with access control lists (ACLs) on any given IaaS virtual machine. As of this writing ACLs are available for IaaS virtual machines, but not for PaaS web or worker roles.

How Network ACLs Work

An ACL is an object that contains a list of rules. When you create an ACL and apply it to a virtual machine endpoint, packet filtering takes place on the host node of your virtual machine. This means the traffic from remote IP addresses is filtered by the host node for matching ACL rules instead of on your virtual machine. This prevents your virtual machine from spending the precious CPU cycles on packet filtering.

When a virtual machine is created, a default ACL is put in place to block all incoming traffic. However, if an input endpoint is created (for example, port 3389), then the default ACL is modified to allow all inbound traffic for that endpoint. As discussed above, when a virtual machine is created from the Azure gallery, a PowerShell endpoint and an RDP endpoint are created using standard private ports but randomly generated public ports, as seen in the portal below. Inbound traffic from any remote subnet is then restricted to those endpoints and no firewall provisioning is required. All other ports are blocked for inbound traffic unless endpoints are created for those ports. Outbound traffic is allowed by default.

Using Network ACLs, you can do the following:

- Selectively permit or deny incoming traffic based on remote subnet IPv4 address range to a virtual machine input endpoint.

- Blacklist IP addresses

- Create multiple rules per virtual machine endpoint

- Specify up to 50 ACL rules per virtual machine endpoint

- Use rule ordering to ensure the correct set of rules is applied on a given virtual machine endpoint (lowest to highest)

- Specify an ACL for a specific remote subnet IPv4 address.

So network ACLs are the key to protecting virtual machine public endpoints and controlling access to them. Currently, you can specify network ACLs for IaaS virtual machine input endpoints, which allow you to control access from the internet to each virtual machine. Unless you specify endpoints, the virtual machines in a virtual network do not receive incoming traffic, which is equivalent to having a default deny ACL at the network level that you can override on a per-virtual-machine basis. You cannot currently specify an ACL on a specific subnet contained in a virtual network, but we are looking into this for the future.
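As a rough illustration of the constraints listed above, the following Python sketch checks a proposed rule list before it is applied to an endpoint. It is a model for reasoning only, not the Azure API: the `AclRule` type and `validate_acl` function are hypothetical names, and the requirement that order values be unique is an assumption of this sketch.

```python
import ipaddress
from typing import NamedTuple

MAX_RULES_PER_ENDPOINT = 50  # limit stated in the list above


class AclRule(NamedTuple):
    order: int          # lower values are evaluated first
    action: str         # "permit" or "deny"
    remote_subnet: str  # IPv4 range in CIDR notation


def validate_acl(rules):
    """Return a list of problems; an empty list means the rules look valid."""
    problems = []
    if len(rules) > MAX_RULES_PER_ENDPOINT:
        problems.append(f"too many rules: {len(rules)} > {MAX_RULES_PER_ENDPOINT}")
    seen_orders = set()
    for rule in rules:
        if rule.action not in ("permit", "deny"):
            problems.append(f"rule {rule.order}: unknown action {rule.action!r}")
        # Assumption of this sketch: each rule has its own order value.
        if rule.order in seen_orders:
            problems.append(f"rule {rule.order}: duplicate order value")
        seen_orders.add(rule.order)
        try:
            # strict=True rejects ranges with host bits set, such as 10.0.0.1/8
            ipaddress.IPv4Network(rule.remote_subnet, strict=True)
        except ValueError as exc:
            problems.append(f"rule {rule.order}: bad IPv4 subnet ({exc})")
    return problems
```

Running a check like this before touching the portal or PowerShell catches malformed CIDR ranges early, which matters because a mistyped range on a permit rule can lock out the clients you meant to allow.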

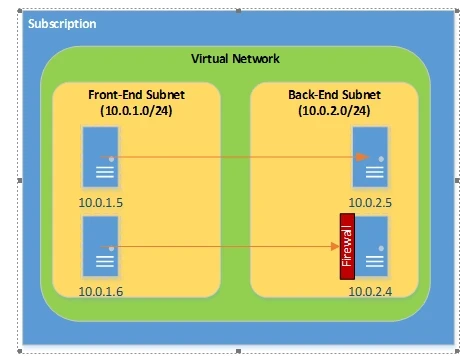

Option 1: Subnets within a Single Virtual Network

Currently, Windows Azure provides routing across subnets within a single virtual network, but does not provide any type of network ACL capability with respect to internal DIP addresses. So in order to restrict access to machines within a single virtual network, those machines must leverage Windows Firewall with Advanced Security, as depicted simply in the diagram below.

In order to secure the server, Windows Firewall could be configured to block all inbound connections, and inbound rules would be set up to determine:

1) what local ports will accept connections,

2) what remote ports connections will be accepted from,

3) what remote IP addresses will be accepted,

4) what authorized users can make connections, and

5) what authorized computers can make connections

In this case, the firewall exceptions would include local Dynamic IP (DIP) addresses within its own subnet and across other subnets configured for the virtual network. Any public endpoints would be secured with network ACLs. Firewall exceptions should, of course, include the private ports for public endpoints established with network ACLs.
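To make the block-by-default idea concrete, here is a small Python model of inbound rules keyed on local port and remote DIP range. It does not configure Windows Firewall; `InboundRule`, `is_allowed`, and the subnet values are hypothetical and only illustrate how the exceptions combine.

```python
import ipaddress
from typing import NamedTuple, Tuple


class InboundRule(NamedTuple):
    name: str
    local_port: int                  # port on the protected server
    remote_subnets: Tuple[str, ...]  # DIP ranges allowed to connect


def is_allowed(rules, remote_ip, local_port):
    """Block by default; allow only if a rule matches both port and remote address."""
    address = ipaddress.ip_address(remote_ip)
    for rule in rules:
        if rule.local_port != local_port:
            continue
        if any(address in ipaddress.ip_network(s) for s in rule.remote_subnets):
            return True
    return False


# Hypothetical subnets inside a single virtual network.
rules = [
    InboundRule("SQL from front-end subnet", 1433, ("10.4.1.0/24",)),
    InboundRule("RDP from management subnet", 3389, ("10.4.3.0/24",)),
]
```

With these example rules, a front-end machine at 10.4.1.5 reaches port 1433, while the same machine is refused on port 3389 and a machine in 10.4.2.0/24 is refused everywhere.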

Option 2: Subnets in Different Virtual Networks

In order to protect virtual machines from other machines deployed in other Azure virtual networks, or machines in other Azure cloud services not associated with a virtual network, or machines outside the Windows Azure platform, the Windows Azure network ACL feature would be used to provide access control to virtual machines. This is the most natural scenario in Windows Azure for application isolation, since by default the only access allowed on virtual machines is through the default RDP and Remote PowerShell public endpoints. For any Azure virtual machine (PaaS or IaaS) that wishes to access another virtual machine in a different virtual network, its virtual IP (VIP) address will be considered, as opposed to the DIP addresses used within a single virtual network. So when a permit ACL is set on a given virtual machine endpoint, that ACL will consider the public VIP of the machine that desires to make a connection. We can see this in the diagram below.

We can selectively permit or deny network traffic (in the management portal or from PowerShell) for a virtual machine input endpoint by creating rules that specify “permit” or “deny”. By default, when an endpoint is created, all traffic is permitted to the endpoint. So for that reason, it’s important to understand how to create permit/deny rules and place them in the proper order of precedence to gain granular control over the network traffic that you choose to allow to reach the virtual machine endpoint. Note that at the instant you add one or more “permit” ranges, you are denying all other ranges by default. Moving forward from the first permit range, only packets from the permitted IP range will be able to communicate with the virtual machine endpoint.

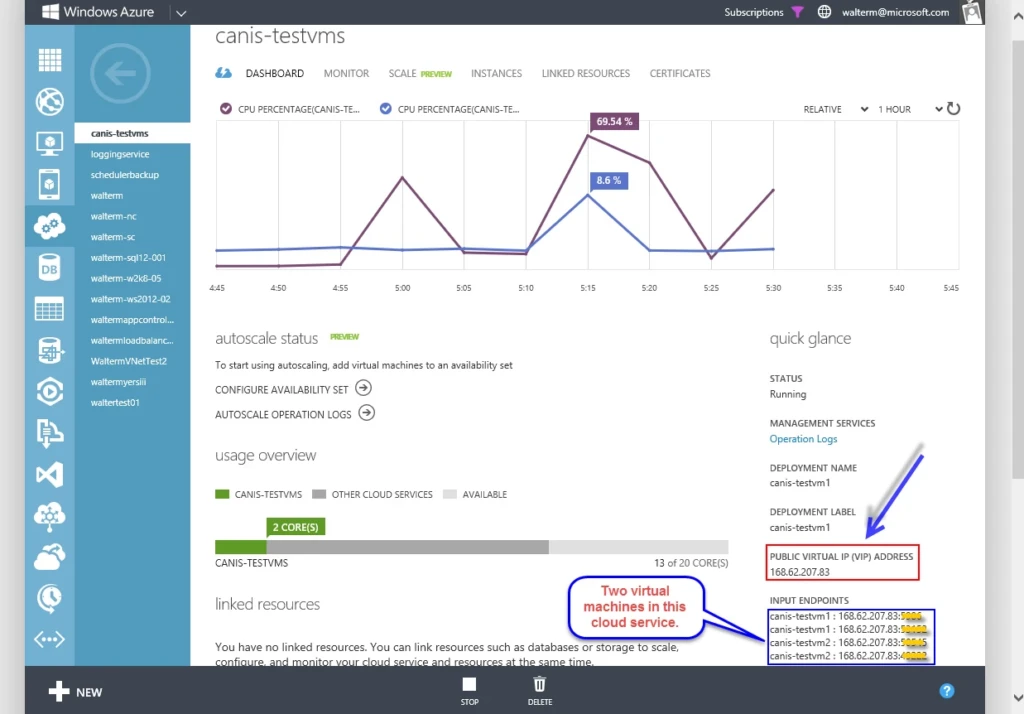

So let’s look at how this practically works with an example that includes two front-end virtual machines in the same cloud service and one back-end virtual machine with SQL Server. We want to secure the SQL Server so only these two front-end machines can gain access. In the screenshot below, we see a cloud service named canis-testvms with public VIP address 168.62.207.83 that contains our two front-end virtual machines named canis-testvm1 and canis-testvm2.

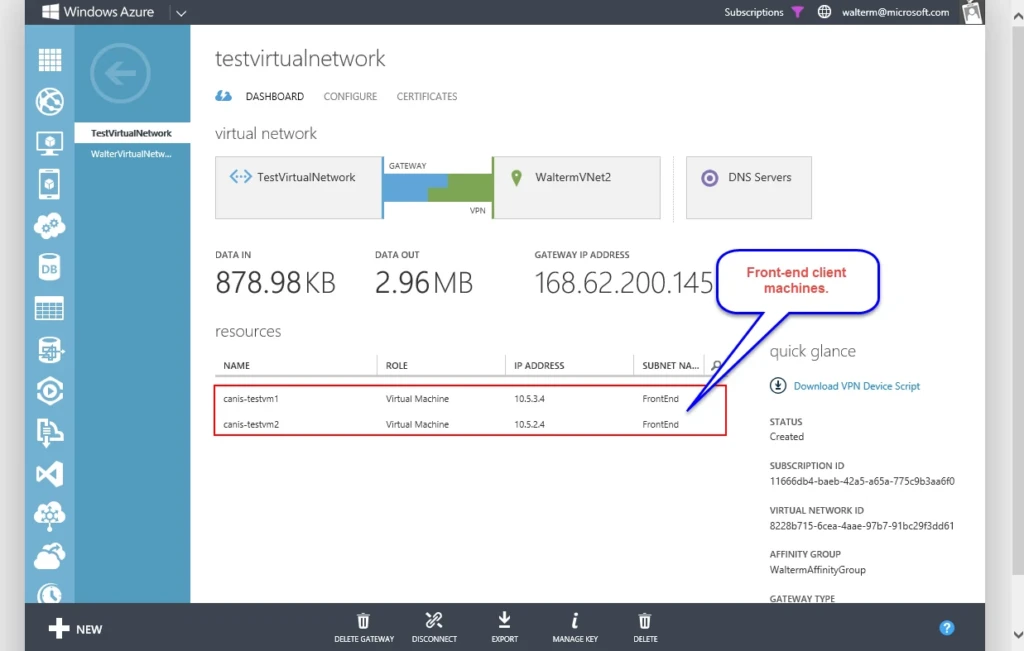

In the screenshot below, we see the two front-end machines, canis-testvm1 and canis-testvm2, participating in a virtual network titled testvirtualnetwork, in its FrontEnd subnet.

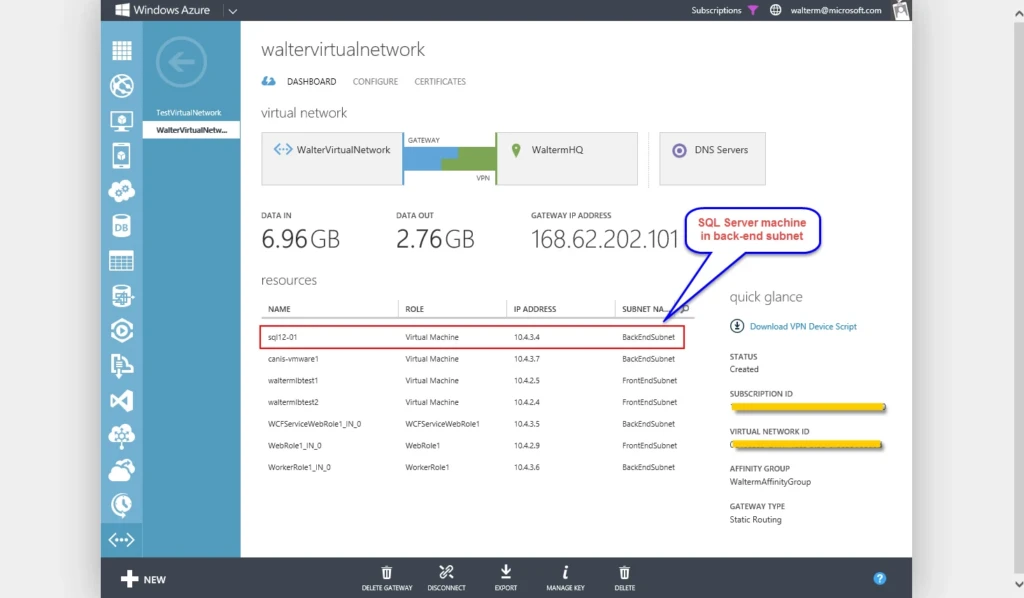

Below, we have a screenshot of the back-end SQL Server machine, sql12-01, which participates in a virtual network titled waltervirtualnetwork, in its BackEndSubnet subnet.

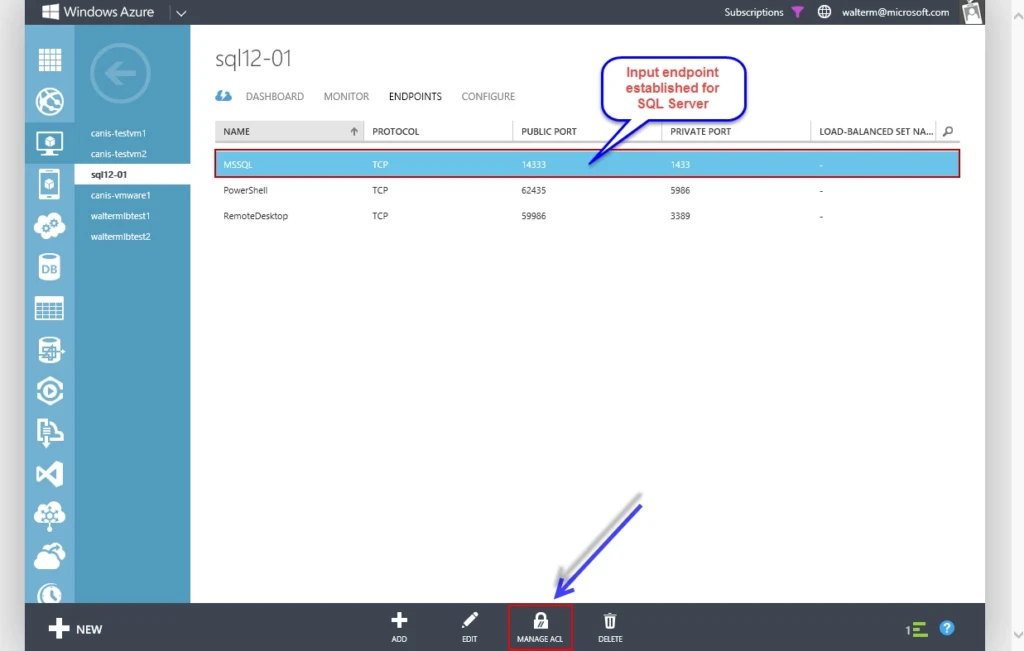

Now we need to configure access to the SQL Server virtual machine. As seen below, the first thing I do is to establish a public endpoint for private port 1433 using 14333 as the public port. As discussed above, the default ACL will allow any remote addresses to access the endpoint, so we will need to remedy this with network ACLs.

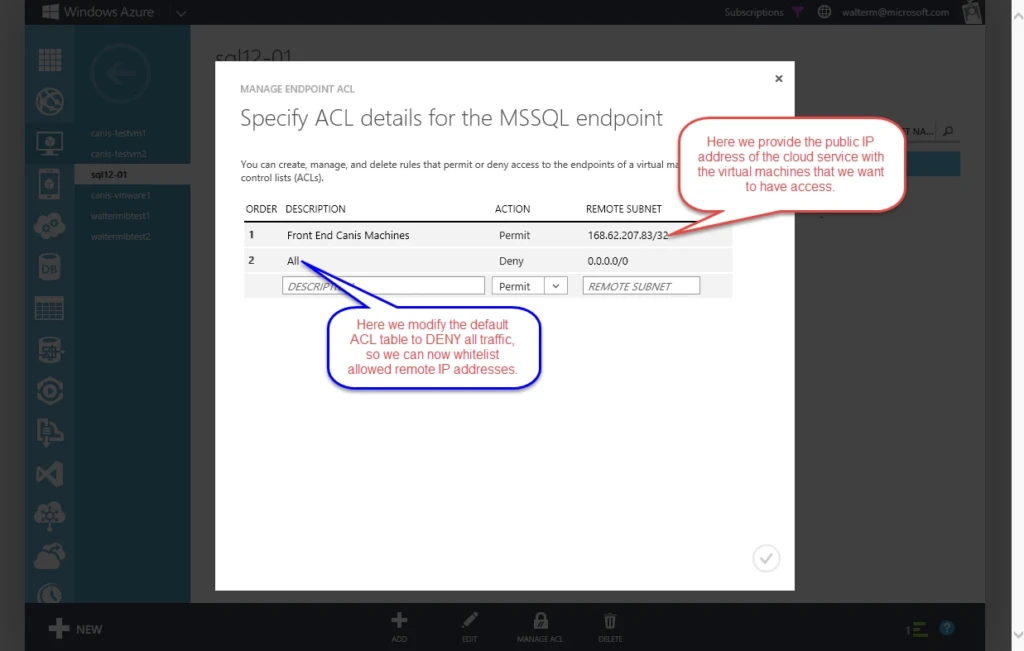

I next choose the Manage ACL button at the bottom, and am presented with the Manage Endpoint ACL dialog, as seen below. The first ACL I add is an ACL that overrides the default ACL with a “Deny” action that locks all remote addresses out. I then add another ACL higher in order specifying the VIP address of the cloud service that contains my front-end machines. I enter this in CIDR format using a /32 network (168.62.207.83/32), which simply maps to the single IP address represented by the cloud service with the front-end machines. From the perspective of the SQL Server, both front-end clients have the 168.62.207.83 IP address, because they are NAT’d within the cloud service.
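The effect of these two rules can be sketched in Python as a first-match evaluation over rules sorted by order. This models the behavior described in this post and is not Azure's implementation. The function name `evaluate_acl` is hypothetical, and the fallback for a list containing only deny rules (unmatched traffic is permitted) is an assumption of this sketch.

```python
import ipaddress


def evaluate_acl(rules, remote_ip):
    """rules: list of (order, action, cidr) tuples. Returns "permit" or "deny"."""
    address = ipaddress.ip_address(remote_ip)
    if not rules:
        return "permit"  # a newly created endpoint permits all traffic
    for _order, action, cidr in sorted(rules):
        if address in ipaddress.ip_network(cidr):
            return action  # first matching rule wins
    # Once any permit range exists, all other ranges are denied by default.
    # Assumption: with deny rules only, unmatched traffic is still permitted.
    has_permit = any(action == "permit" for _o, action, _c in rules)
    return "deny" if has_permit else "permit"


sql_endpoint_acl = [
    (0, "permit", "168.62.207.83/32"),  # VIP of the front-end cloud service
    (1, "deny", "0.0.0.0/0"),           # every other remote address
]

# A /32 network contains exactly one address.
single = ipaddress.ip_network("168.62.207.83/32").num_addresses
```

Because both front-end machines appear as the same VIP, a single /32 permit rule covers them both, and any other remote address falls through to the deny rule.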

So this is how you would secure endpoints across virtual networks without a VPN connection using public VIP addresses, or if you have a VPN connection but don’t want to route through your on-premises router to take advantage of DIP addresses. We will cover DIP in the next option with VPN added.

Option 3: Subnets in Different Virtual Networks + VPN

When VPN connections are involved, they don’t change the default isolation of virtual networks, but do present additional options for network connectivity. We will look at a specific example of this here. In this scenario, on-premises subnets that participate in more than one virtual network will have full access to virtual machines in both virtual networks through the DIP address, when not blocked by firewall rules. So in our scenario here we will have two virtual networks terminated at an on-premises location that need to access each other’s address spaces. This can be accomplished by configuring the on-premises router as the hub in a “spoke-to-spoke” configuration. The router is the hub, and the “spokes” are the VPN devices that terminate the Site-to-Site connections on the Azure side.

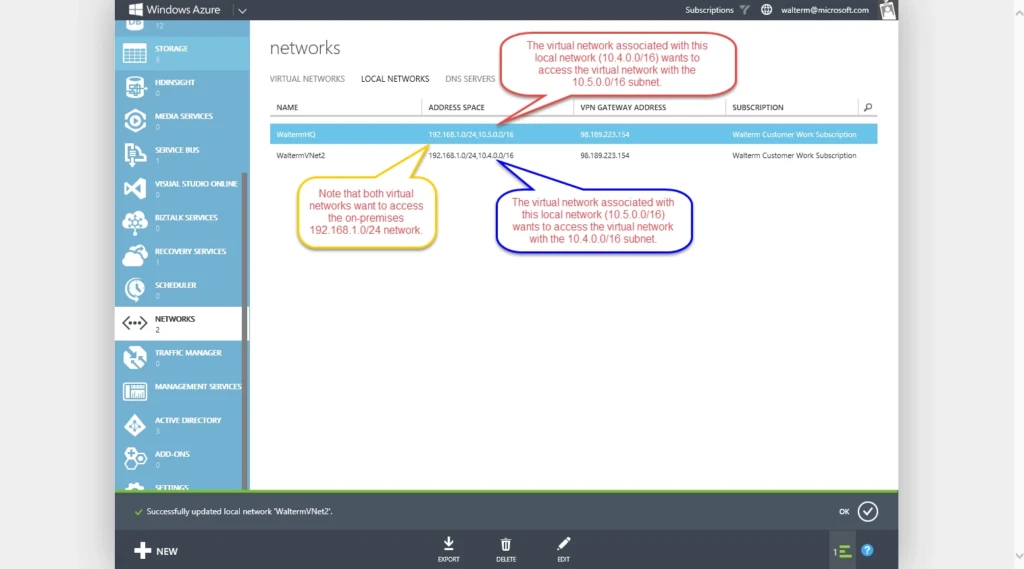

In order to make the spoke-to-spoke configuration work, we first need to configure the local network corresponding to a given virtual network at the gateway on the Azure side, which will allow that virtual network to access the address space of any other virtual network (which, of course, should be a different address space). This can be seen below in the local networks configuration of the management portal. For each local network, I have an on-premises 192.168.1.0/24 local network address space that each virtual network needs to access, and there is an additional address space added for each virtual network that corresponds to the other virtual network it wants to access.
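Since routing between the sites only works when the address spaces are distinct, it can help to check for overlaps before configuring anything. The Python sketch below does this with the standard `ipaddress` module. The address ranges are the ones used in this walkthrough, while the names `vnet-a` and `vnet-b` and the function `find_overlaps` are hypothetical.

```python
import ipaddress
from itertools import combinations


def find_overlaps(address_spaces):
    """Return pairs of names whose CIDR ranges overlap."""
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in address_spaces.items()}
    return [
        (a, b)
        for a, b in combinations(sorted(nets), 2)
        if nets[a].overlaps(nets[b])
    ]


spaces = {
    "on-premises": "192.168.1.0/24",
    "vnet-a": "10.4.0.0/16",
    "vnet-b": "10.5.0.0/16",
}
```

An empty result from `find_overlaps(spaces)` means the three address spaces can be routed to one another without ambiguity.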

Next we need to configure the on-premises VPN device (mine is a Cisco ASA 5505), which is basically a matter of adding an access list entry from one virtual network to the other, on both sides, to your existing access lists. For example, if the local on-premises network has a 192.168.1.0/24 address space, and one of the virtual networks has a 10.4.0.0/16 address space, then we would need an access list entry from the local network to that virtual network (which we typically would have already set up), and another access list entry from the 10.5.0.0/16 virtual network to the 10.4.0.0/16 virtual network, as seen below.

access-list AzureAccess extended permit ip 192.168.1.0 255.255.255.0 10.4.0.0 255.255.0.0

access-list AzureAccess extended permit ip 10.5.0.0 255.255.0.0 10.4.0.0 255.255.0.0

We then would do the same for the 10.5.0.0/16 virtual network, as seen below, creating an access list entry from the 10.4.0.0/16 virtual network to the 10.5.0.0/16 virtual network.

access-list AzureAccess2 extended permit ip 192.168.1.0 255.255.255.0 10.5.0.0 255.255.0.0

access-list AzureAccess2 extended permit ip 10.4.0.0 255.255.0.0 10.5.0.0 255.255.0.0
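The access list entries above use network-and-mask notation rather than CIDR. As a convenience, the following Python sketch converts CIDR ranges into lines of the same shape; the helper name `asa_permit_line` is hypothetical, and the output should be reviewed before it is pasted into a device.

```python
import ipaddress


def asa_permit_line(acl_name, source_cidr, dest_cidr):
    """Build an 'extended permit ip' entry from two CIDR ranges."""
    src = ipaddress.ip_network(source_cidr)
    dst = ipaddress.ip_network(dest_cidr)
    return (
        f"access-list {acl_name} extended permit ip "
        f"{src.network_address} {src.netmask} "
        f"{dst.network_address} {dst.netmask}"
    )


# Reproduce the four entries shown above.
lines = [
    asa_permit_line("AzureAccess", "192.168.1.0/24", "10.4.0.0/16"),
    asa_permit_line("AzureAccess", "10.5.0.0/16", "10.4.0.0/16"),
    asa_permit_line("AzureAccess2", "192.168.1.0/24", "10.5.0.0/16"),
    asa_permit_line("AzureAccess2", "10.4.0.0/16", "10.5.0.0/16"),
]
```

Generating the entries from one list of address spaces keeps the two access lists symmetric, which is easy to get wrong when typing masks by hand.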

The next step would be to set up a NAT between these two “outside” networks, as seen below.

object-group network VPN_OUT_AZ1

network-object 10.4.0.0 255.255.0.0

object-group network VPN_OUT_AZ2

network-object 10.5.0.0 255.255.0.0

nat (outside,outside) 1 source static VPN_OUT_AZ1 VPN_OUT_AZ1 destination static VPN_OUT_AZ2 VPN_OUT_AZ2 no-proxy-arp route-lookup

Thus each virtual network can access the other through the VPN gateway using DIP addresses (which is now equivalent to the Subnets within a Single Virtual Network scenario above), presuming no firewall rules prevent it. This may not be a desired scenario for some since networking traffic would travel back and forth through the hub, resulting in increased transaction costs and additional latency, but this may be an acceptable tradeoff for organizations that don’t want to expose any external endpoints. Others might prefer to use public endpoints to make connections between two (or more) virtual networks, and may further secure public endpoints using IPsec and Group Policy Objects.

Securing End-to-End Network Communications with Windows Firewall/IPsec

With IPsec integration, Windows Firewall with Advanced Security provides a simple way to enforce authenticated, end-to-end network communications. It provides scalable, tiered access to trusted network resources, helping to enforce integrity of the data, and optionally helping to protect the confidentiality of the data. Internet Protocol security (IPsec) uses cryptographic security services to protect communications over Internet Protocol (IP) networks.

As an example, in the diagram below with two virtual networks, we see a machine with DIP address 10.5.1.5 that is not joined to the on-premises domain and has an IPsec certificate, which can be configured by Windows Firewall with Advanced Security or through PowerShell to secure the machine. This would mean any machines that want to access protected ports would require an IPsec certificate as well. We also have two domain-joined machines, 10.4.1.6 and 10.5.1.4, that can be configured to require IPsec connections through Windows Firewall, PowerShell scripts, or Group Policy. So these machines can be fully protected regardless of whether accessed over internal DIP addresses or through public VIP addresses. For public VIP addresses, we would leverage the network ACLs feature to create a whitelist of allowed external IP addresses, where those external client machines would require an IPsec certificate.

Applying IPsec to a Single Virtual Machine Manually

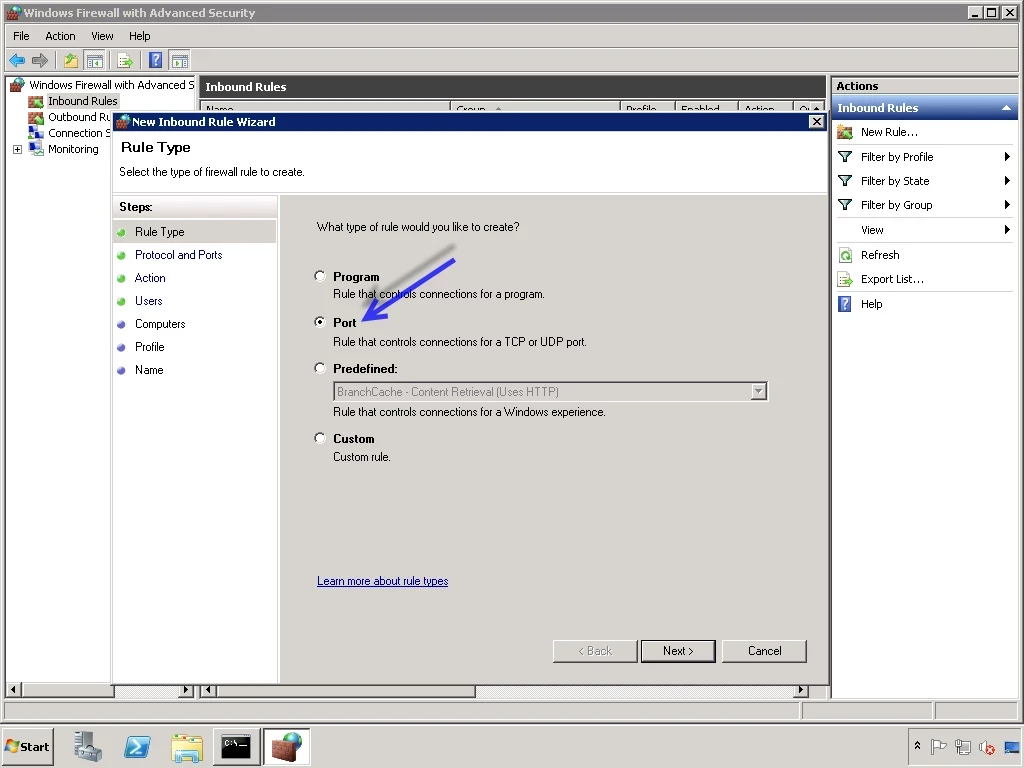

In order to provide maximum protection for a virtual machine within a virtual network, Windows Firewall can be activated and configured initially to block all inbound connections. Then ports could be opened on an as-needed basis, adding IPsec in order to enforce secure connections and also encrypt those connections. To manually configure IPsec in Windows Server 2008 and later, you would launch Windows Firewall, select the Inbound Rules node, and then create a new rule, as seen below.

You can then choose specific ports that you want secured with IPsec, as seen below.

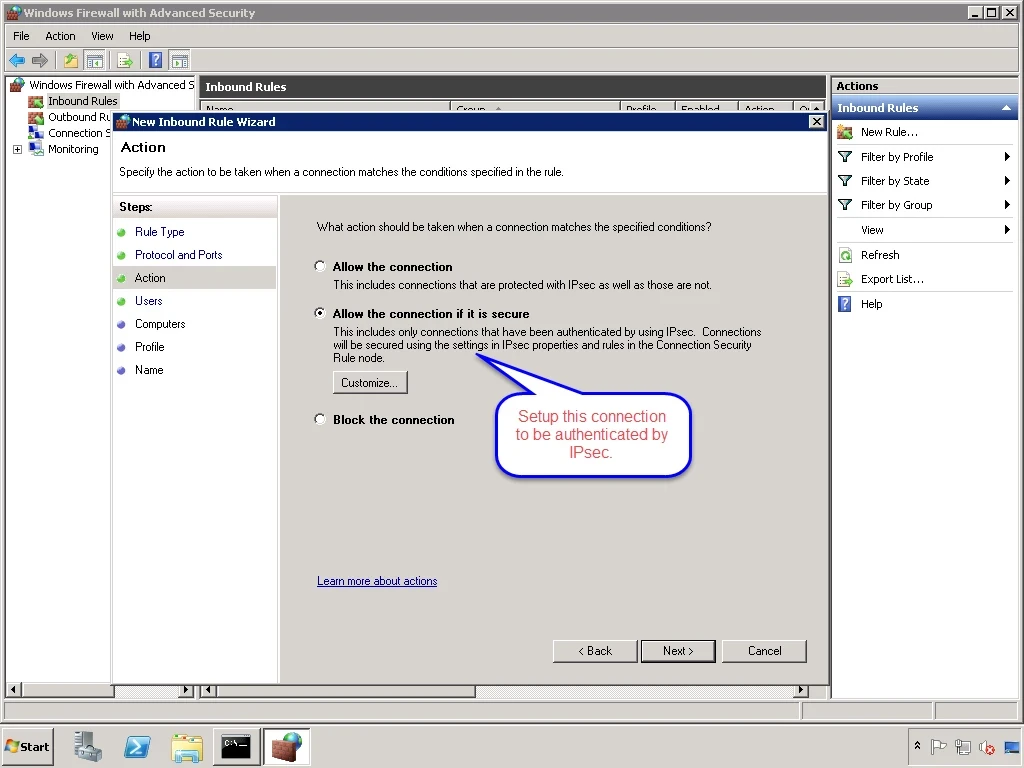

Next, you would specify that you want to secure connections to the desired port.

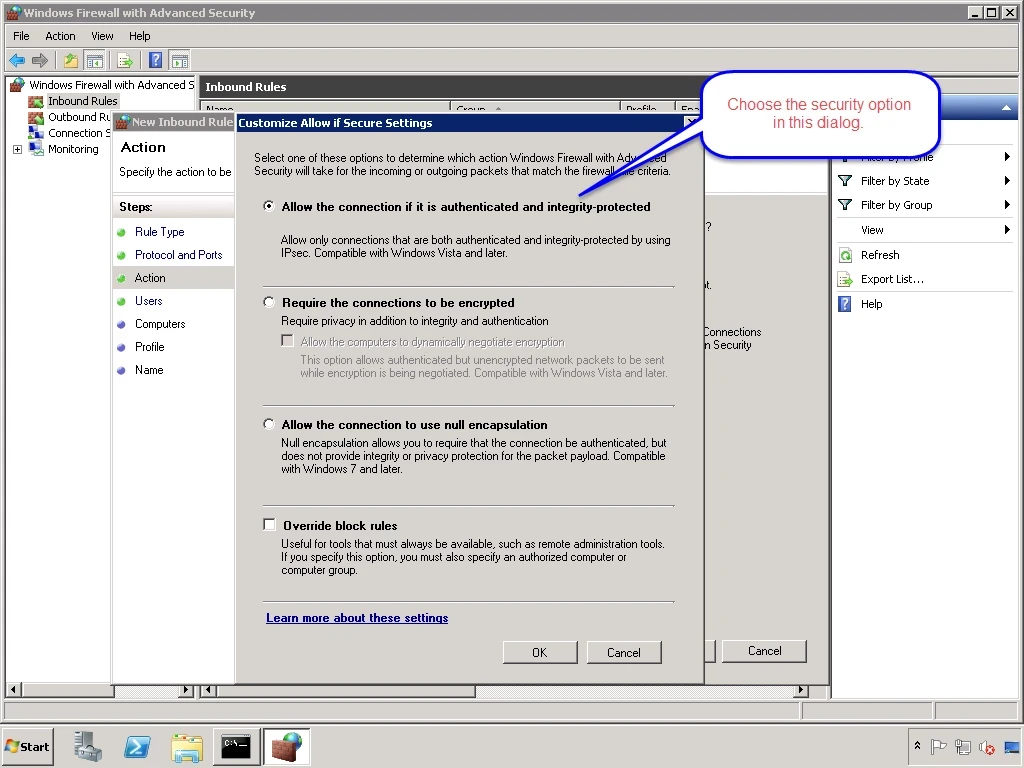

After choosing the Customize… button, you would specify your IPsec settings in the resulting dialog, as seen below. From here you would continue with the wizard and finally give your rule a name.

If you are interested in scripting IPsec configuration with PowerShell, you can learn more by following the link here.

Using Group Policy in a Domain to set IPsec Policy for Virtual Machines

In a situation of virtual networks that are connected to on-premises networks through a VPN connection, computers in the virtual networks can join the on-premises domain. If joined to the on-premises domain, multiple computers can be configured by applying Group Policy with IPsec policies ensuring these computers are protected based on organizational policies. You can find out more about creating IPsec policies using Group Policy here, which we will walk through now.

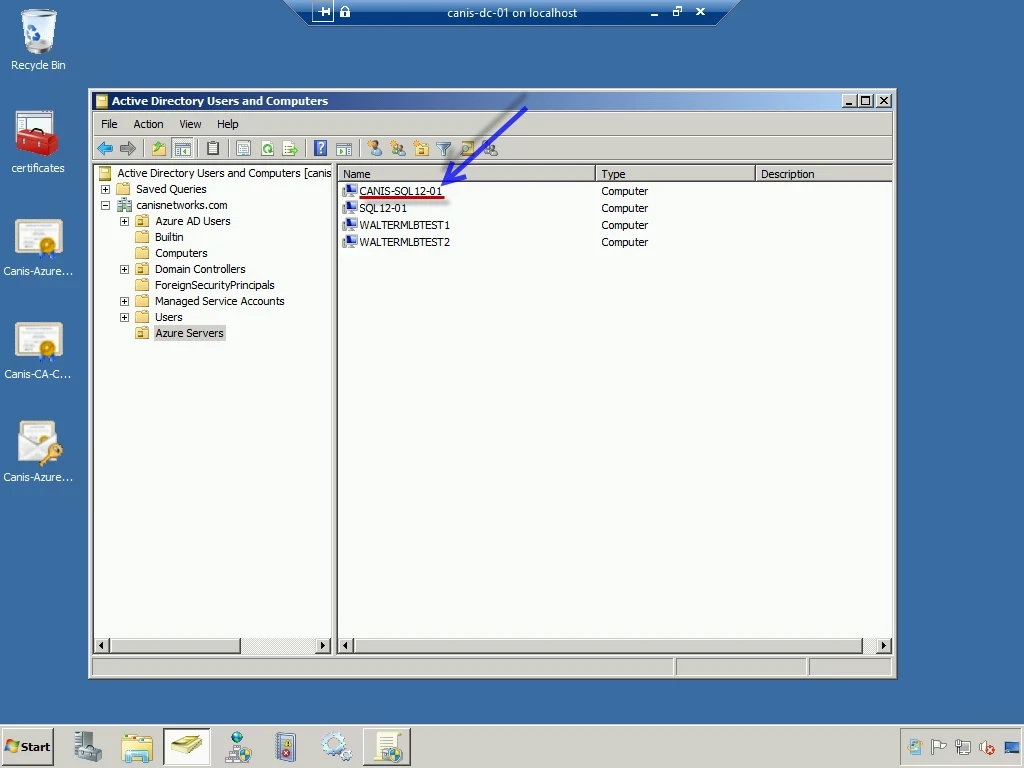

From a domain controller, I want to apply specific policies to domain-joined servers in Windows Azure, so my first task will be to add these machines to their own OU named Azure Servers, as seen below. We will work with the virtual machine named canis-sql12-01 for this walkthrough.

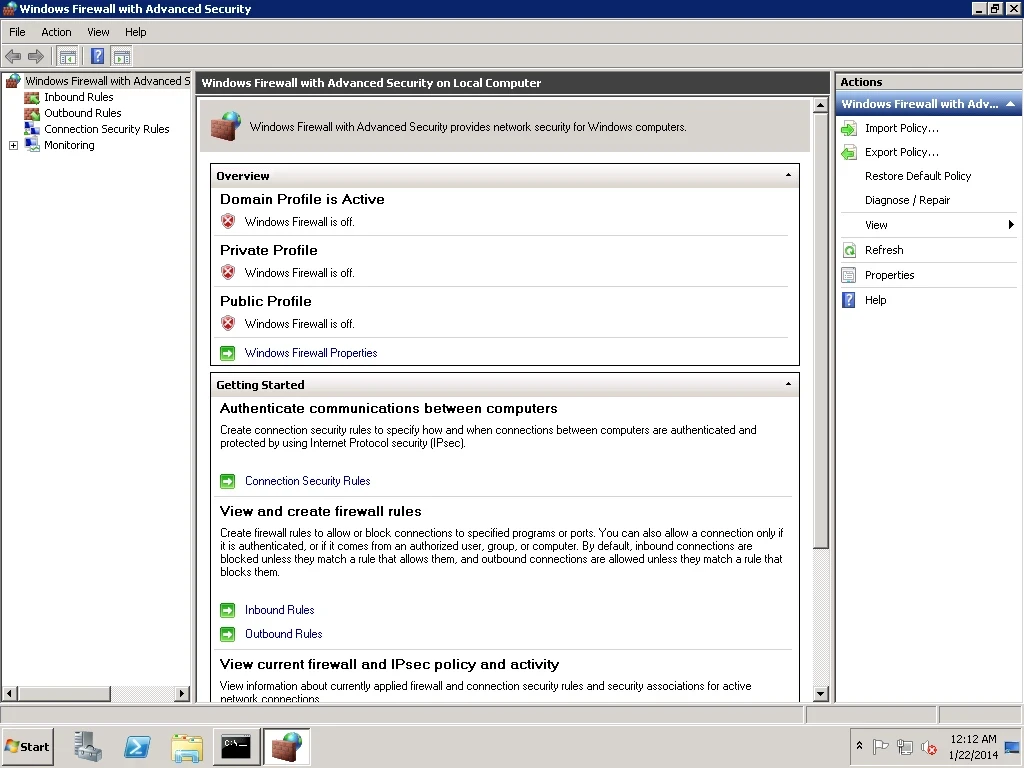

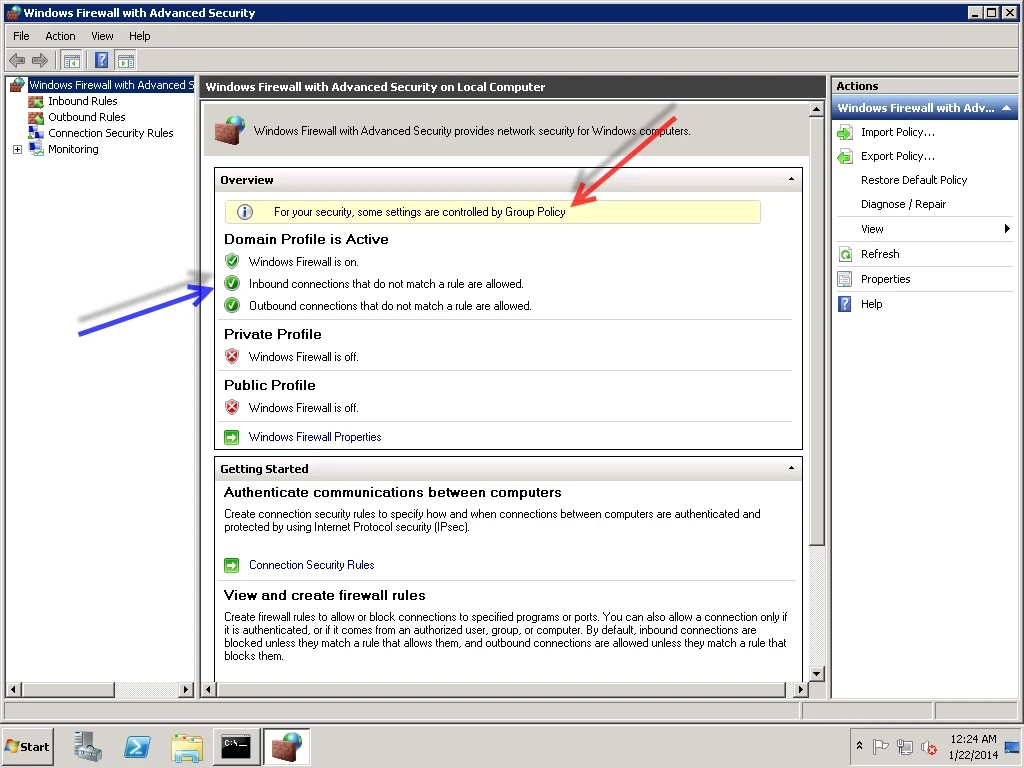

Below is the current firewall state of the virtual machine canis-sql12-01.

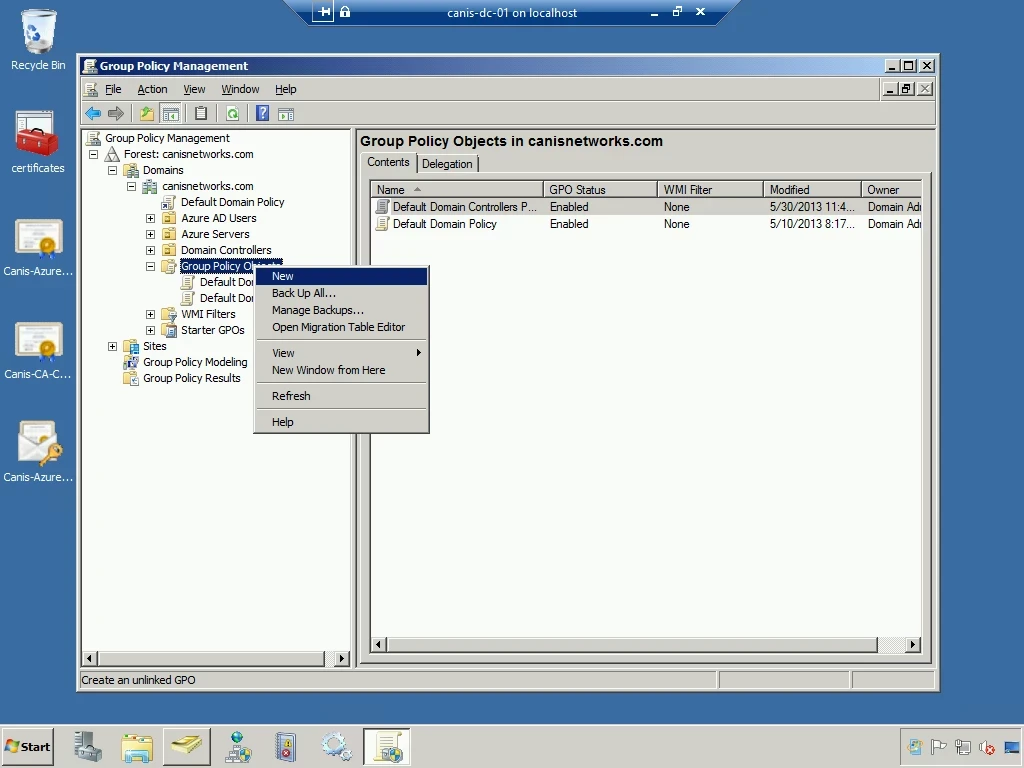

Back at the domain controller, I launch the Group Policy Management console from the Administrative Tools menu. I then expand out the domain, canisnetworks.com, select the Group Policy Objects node, and right-click it. I then select the New menu item, as seen below.

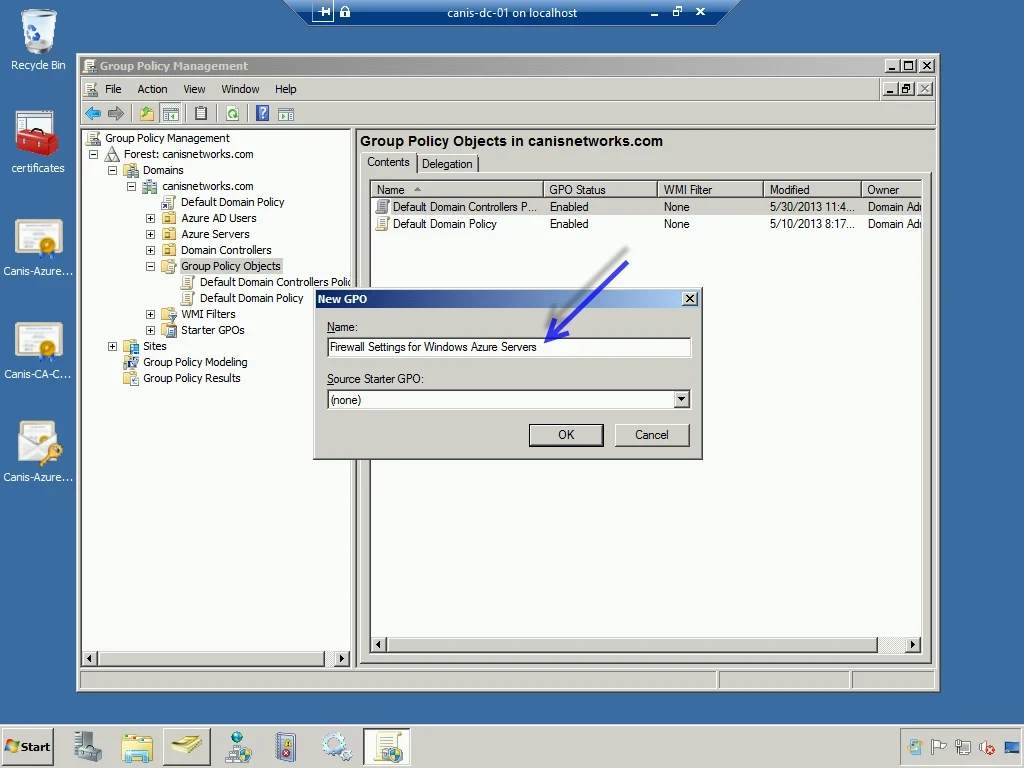

In the New GPO dialog, I provide a descriptive name for the new GPO and select the OK button.

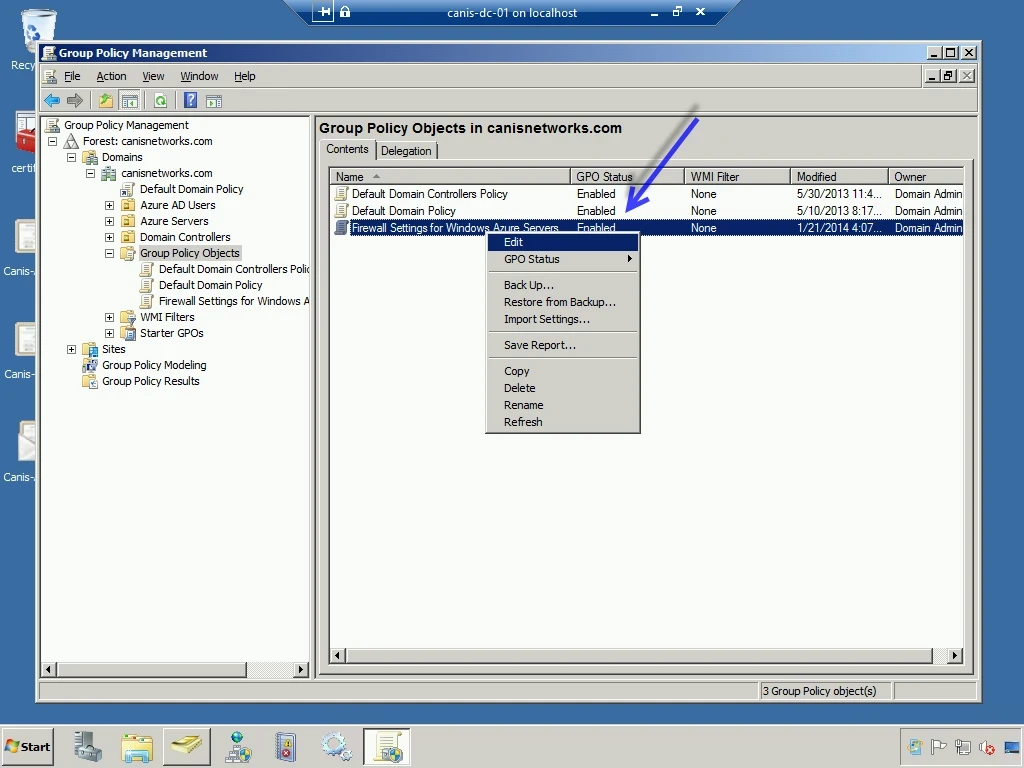

My GPO is now added to the list of GPO objects, so now I need to configure it by right-clicking and selecting the Edit menu item, as seen below.

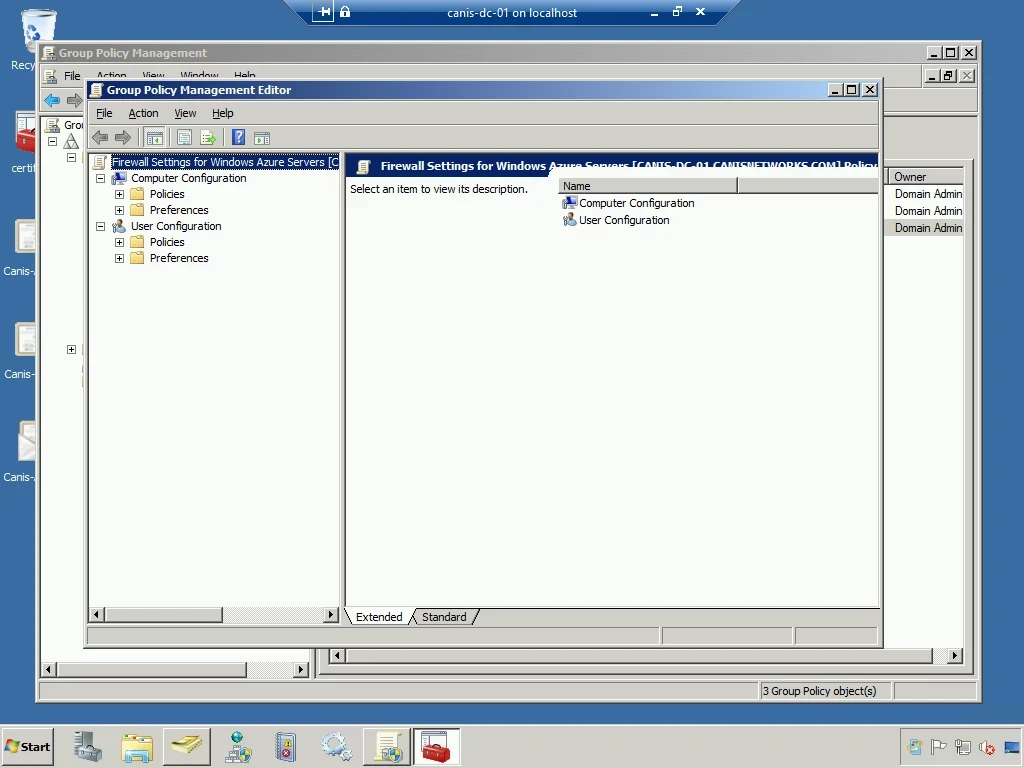

The Group Policy Management Editor is now presented.

I’m going to configure two settings in this GPO, as follows:

- Turn the Domain Profile on.

- Configure the SCOM monitoring port 5723 to be protected by IPsec. (I would then be able to securely monitor machines to which this GPO is applied over an external IP address from an on-premises SCOM installation.)

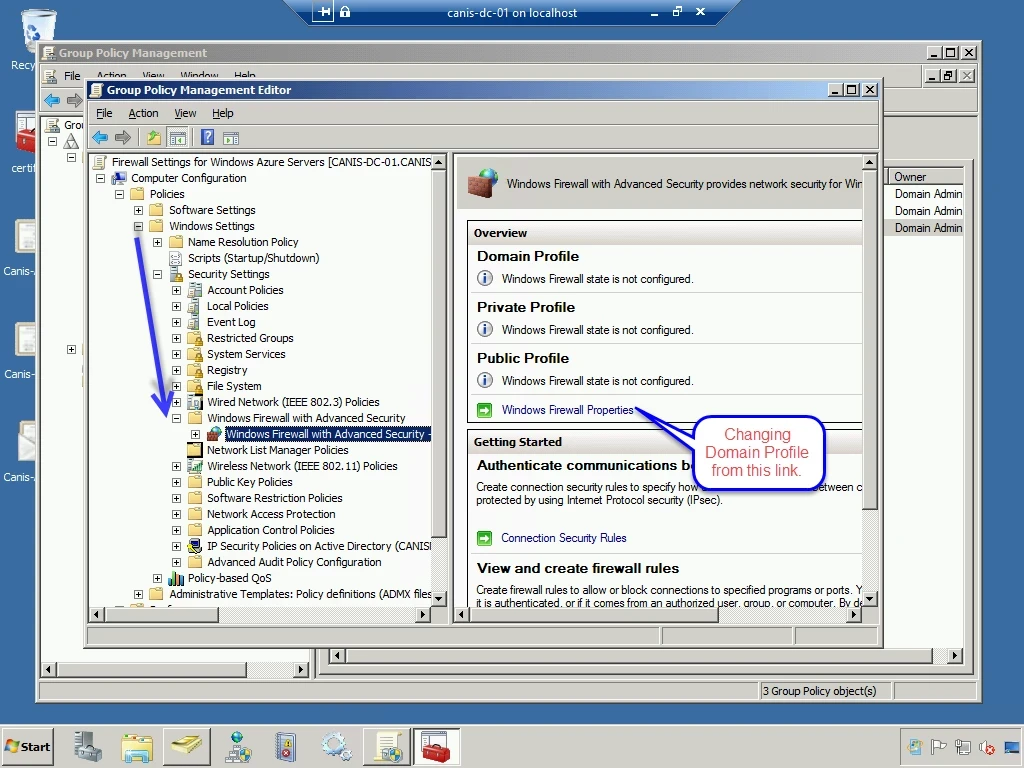

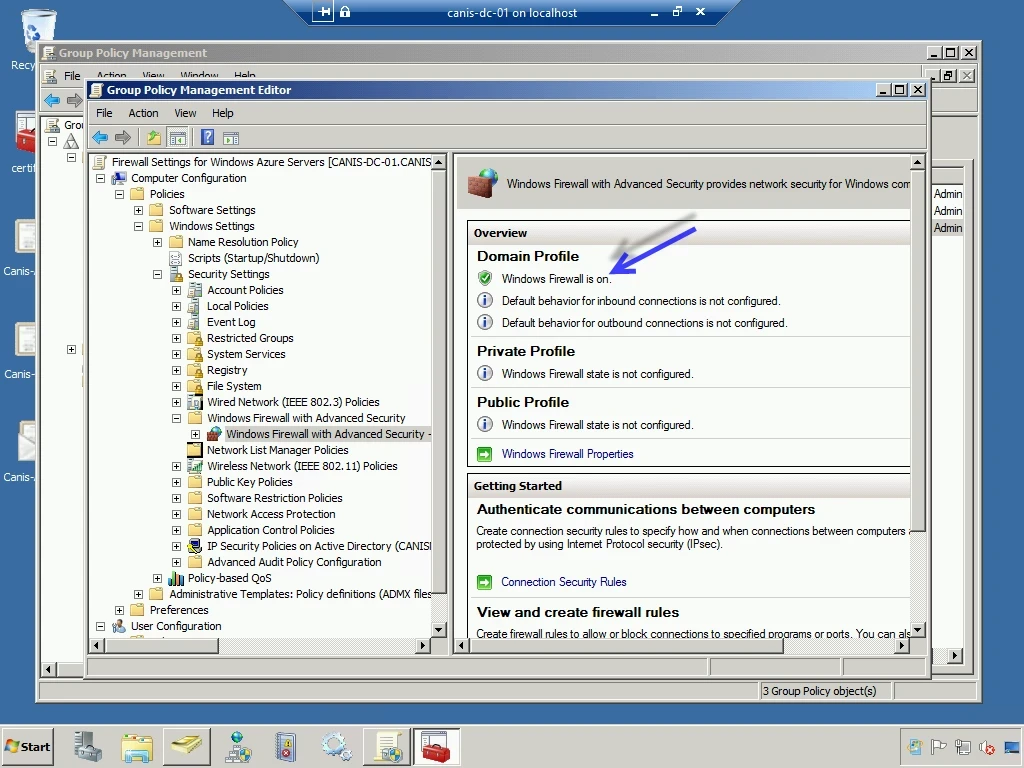

Below, I have expanded the Computer Configuration | Policies | Windows Settings tree and navigated to the Windows Firewall with Advanced Security node. I then select the Windows Firewall Properties link.

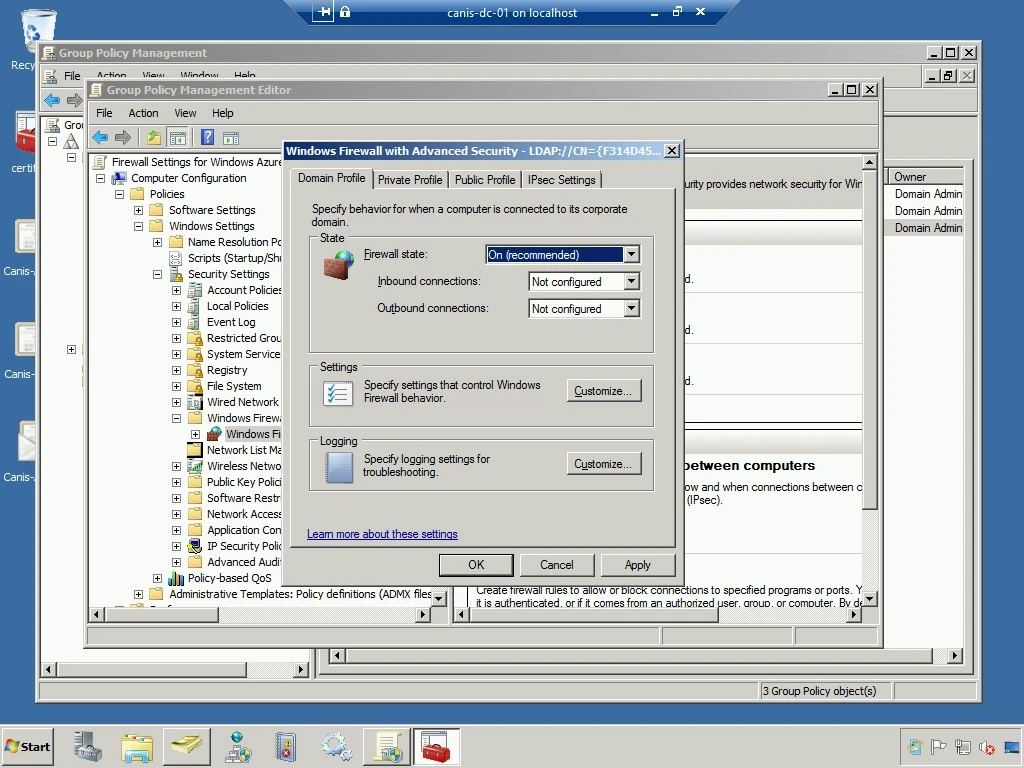

In the dialog seen below, I change the firewall state for the Domain Profile from “Off” to “On (recommended)”. I then select the OK button.

Below, we see the Domain Profile now has the Windows Firewall turned on.

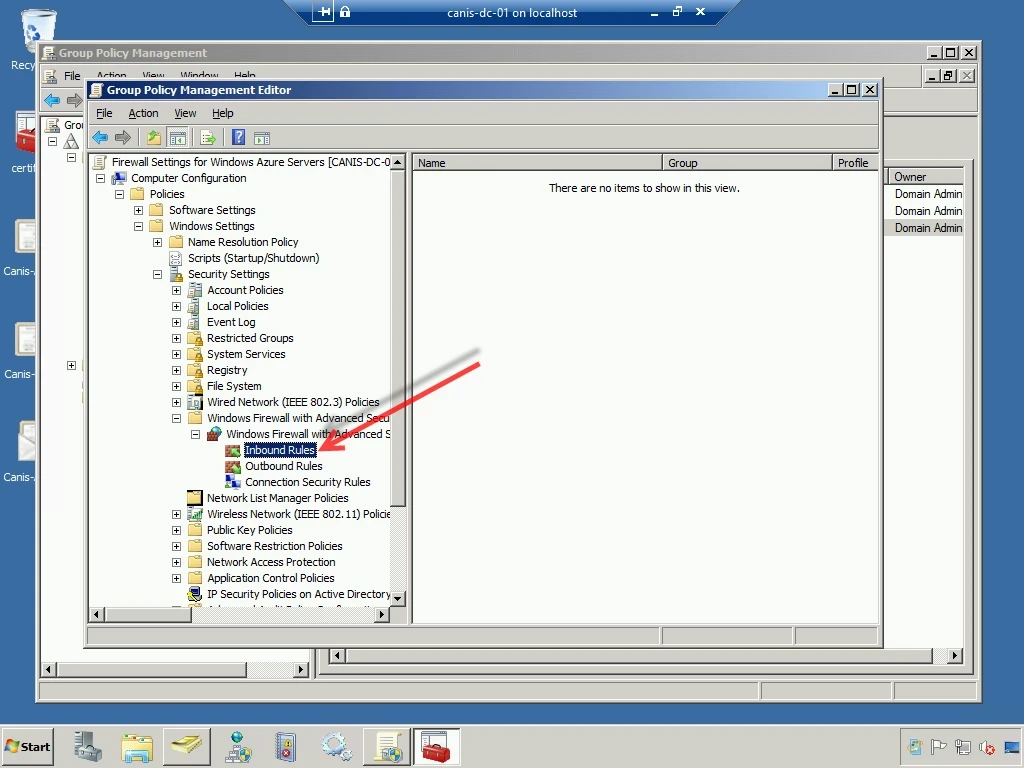

I now expand the Windows Firewall node on the left-hand side and select the Inbound Rules node, as seen below. There are currently no rules so the list of rules is empty.

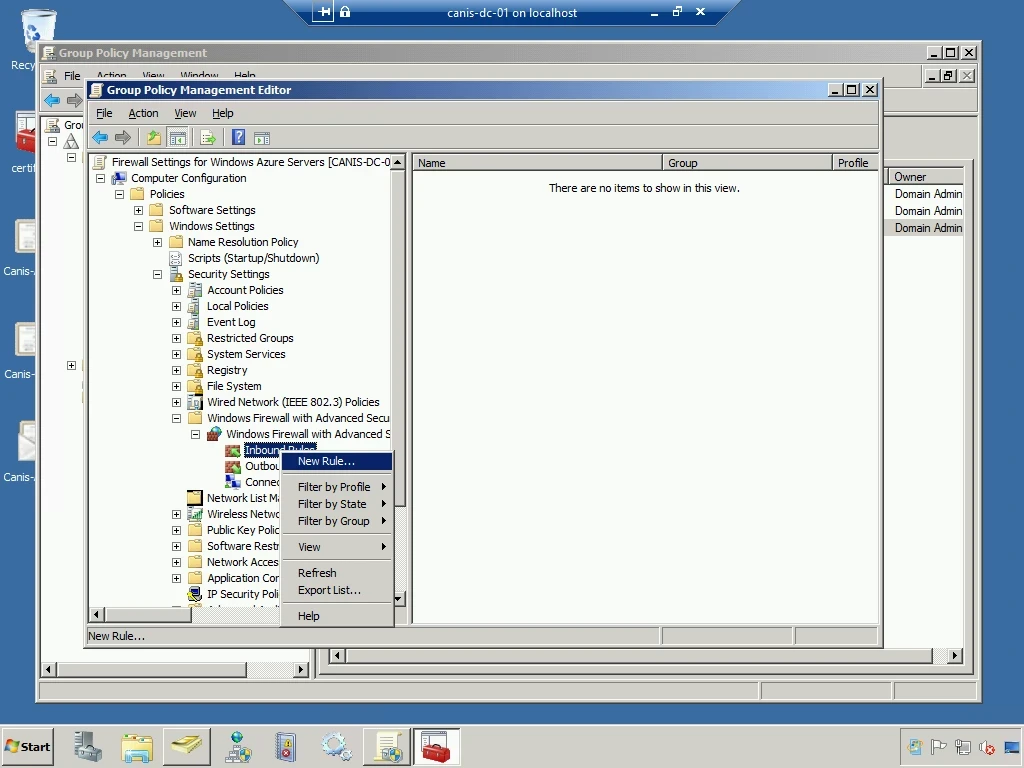

I right-click on the Inbound Rules node and select New Rule… from the popup menu, as seen below.

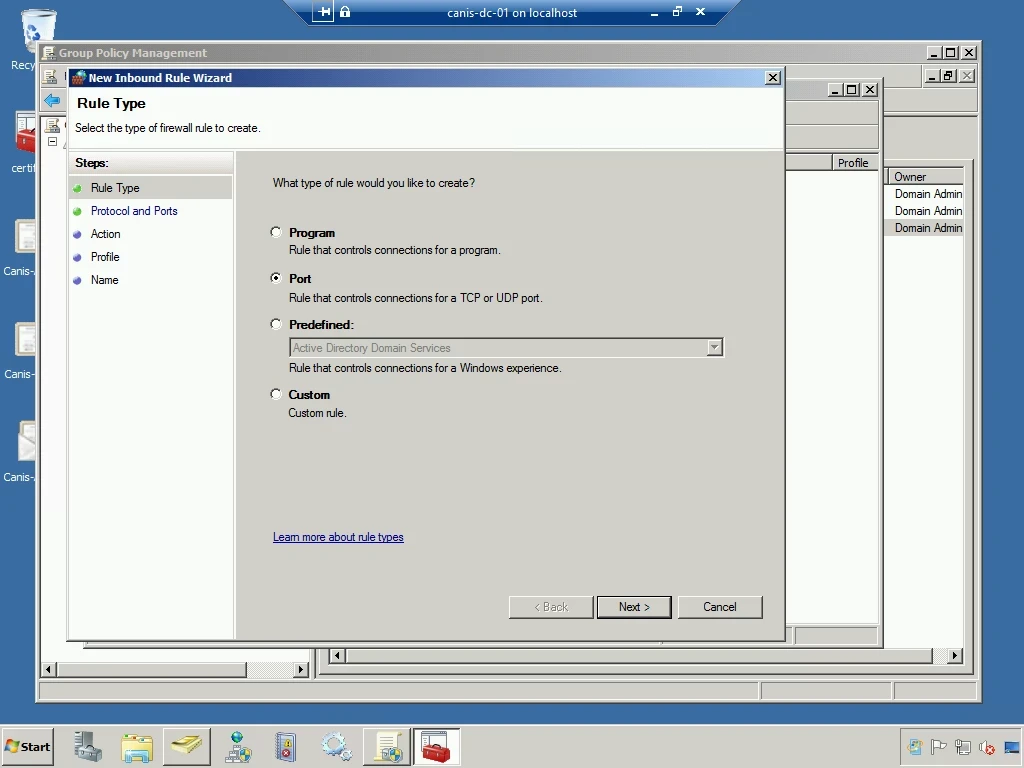

I perform the same manual steps with the New Inbound Rule Wizard as before when I created a rule on an individual machine to secure a port with IPsec. So below I choose a port rule.

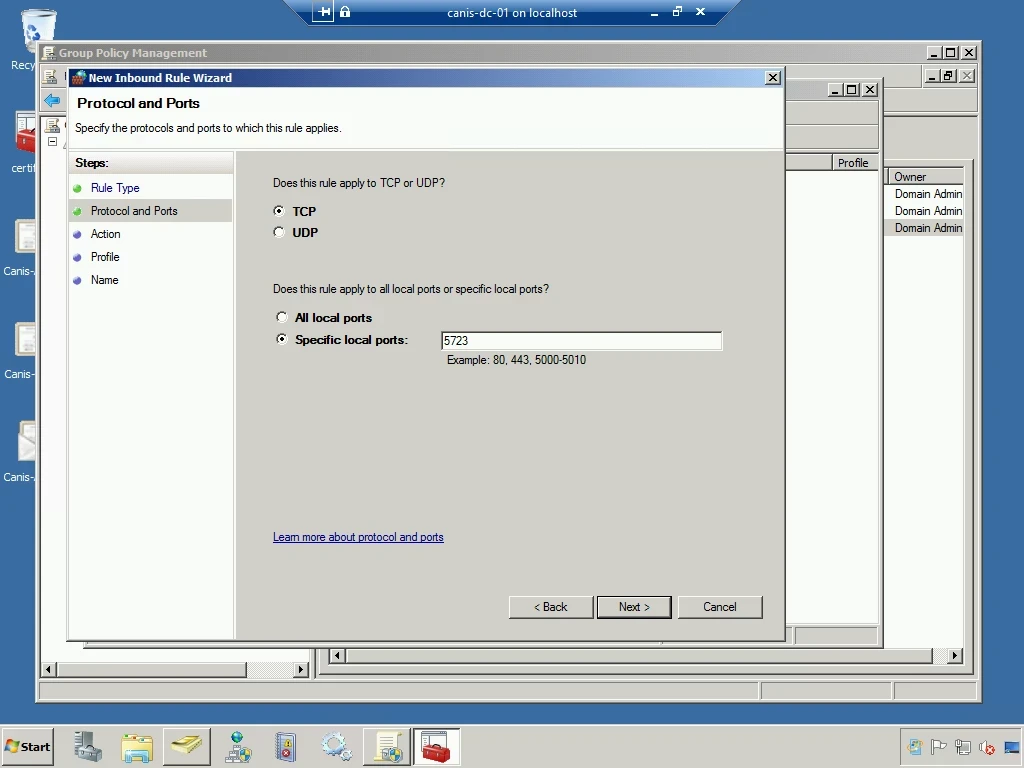

In the Protocol and Ports wizard page, I enter the desired port.

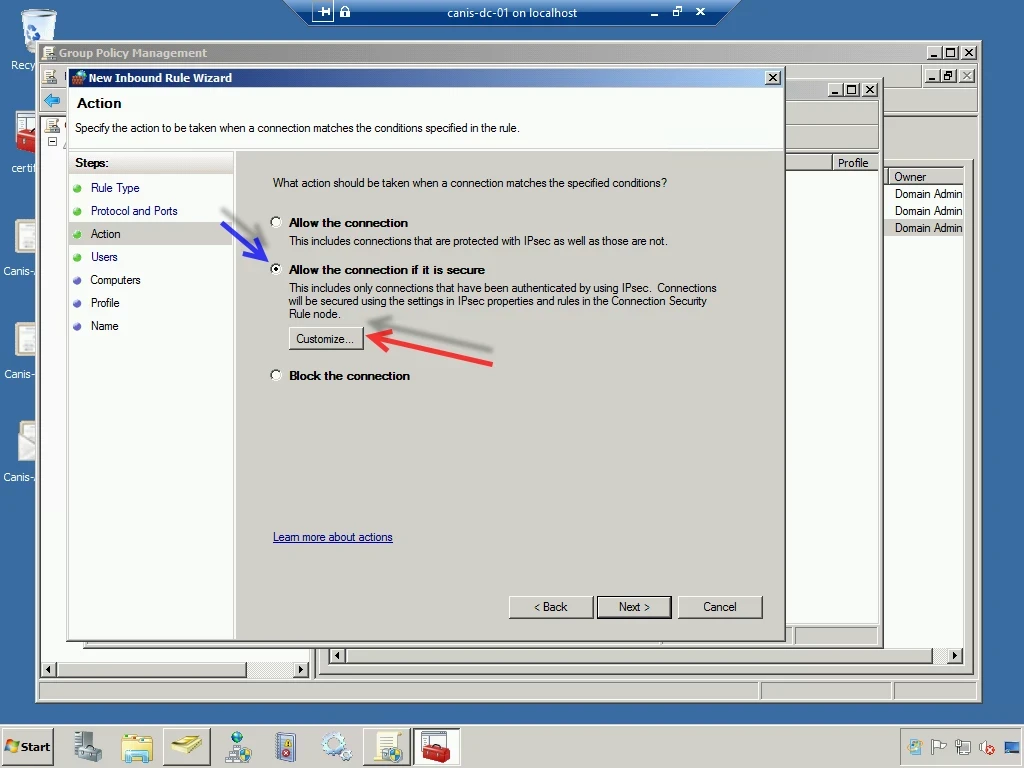

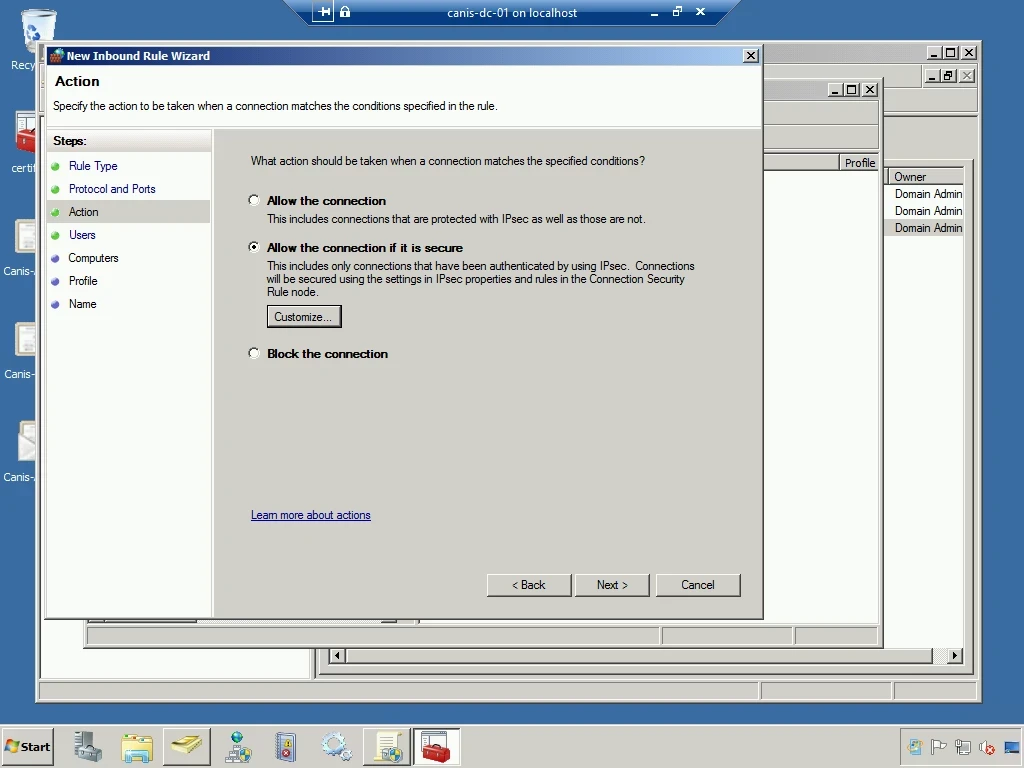

In the Action wizard page, I select the Allow the connection if it is secure option and then select the Customize… button.

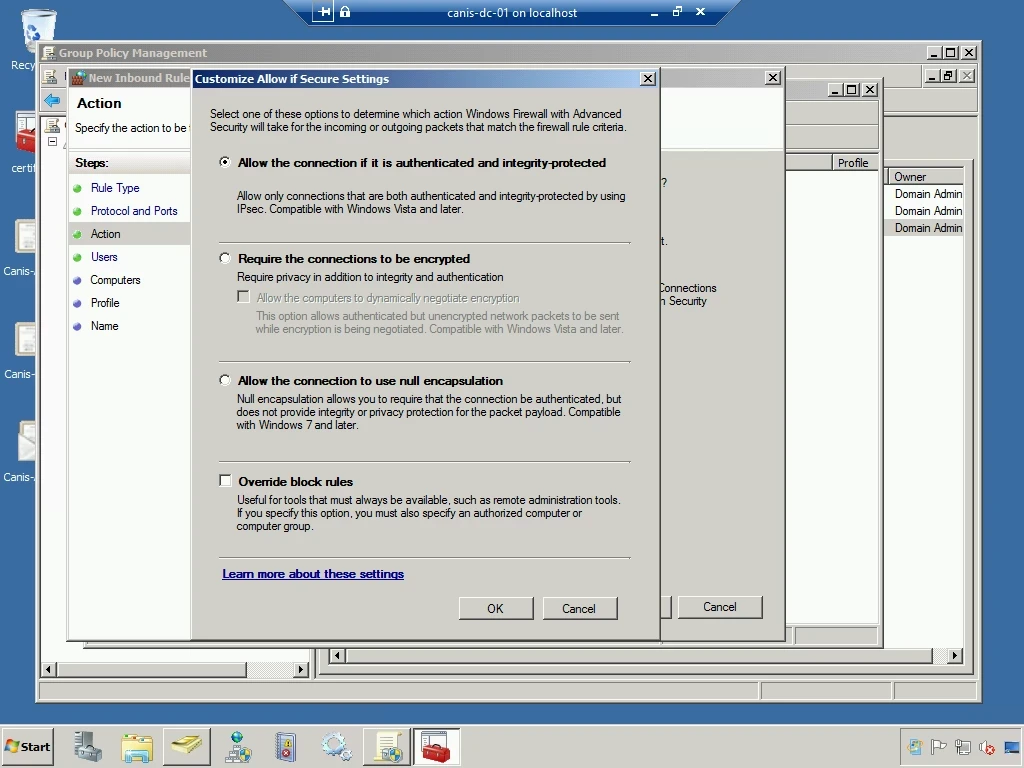

I leave the option to force authentication and integrity protection on the dialog to select security settings, as seen below. I then choose the OK button.

I now select the Next button and continue accepting the defaults for the Users and Computers wizard pages.

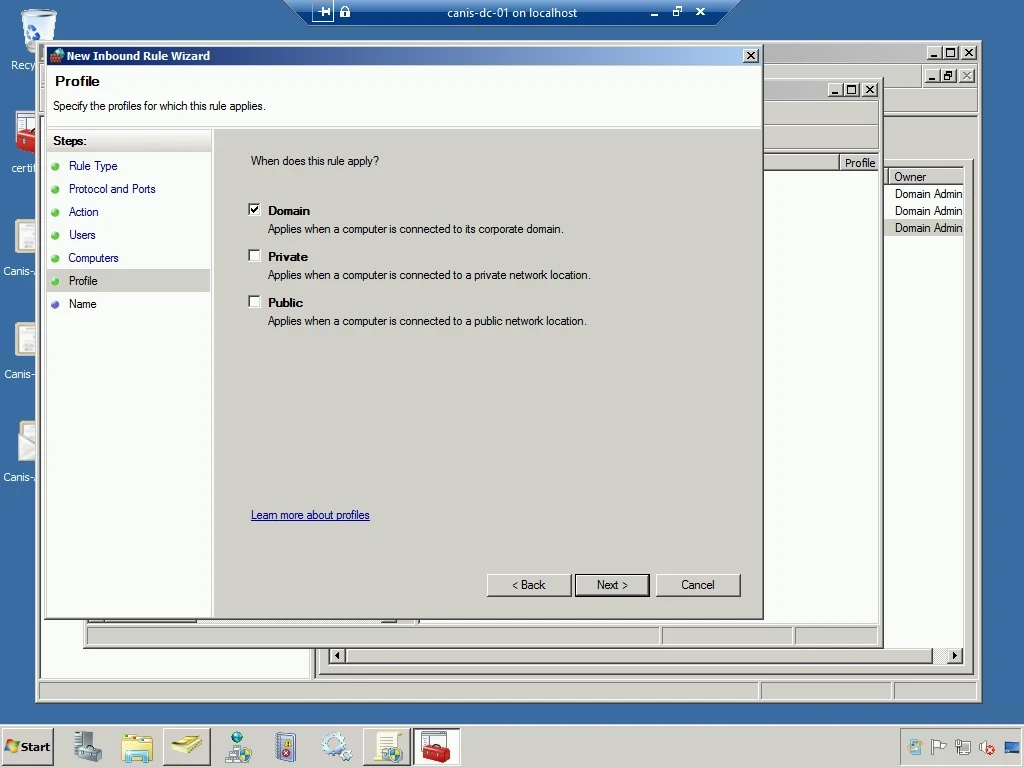

On the Profile wizard page, I select the Domain profile, as seen below.

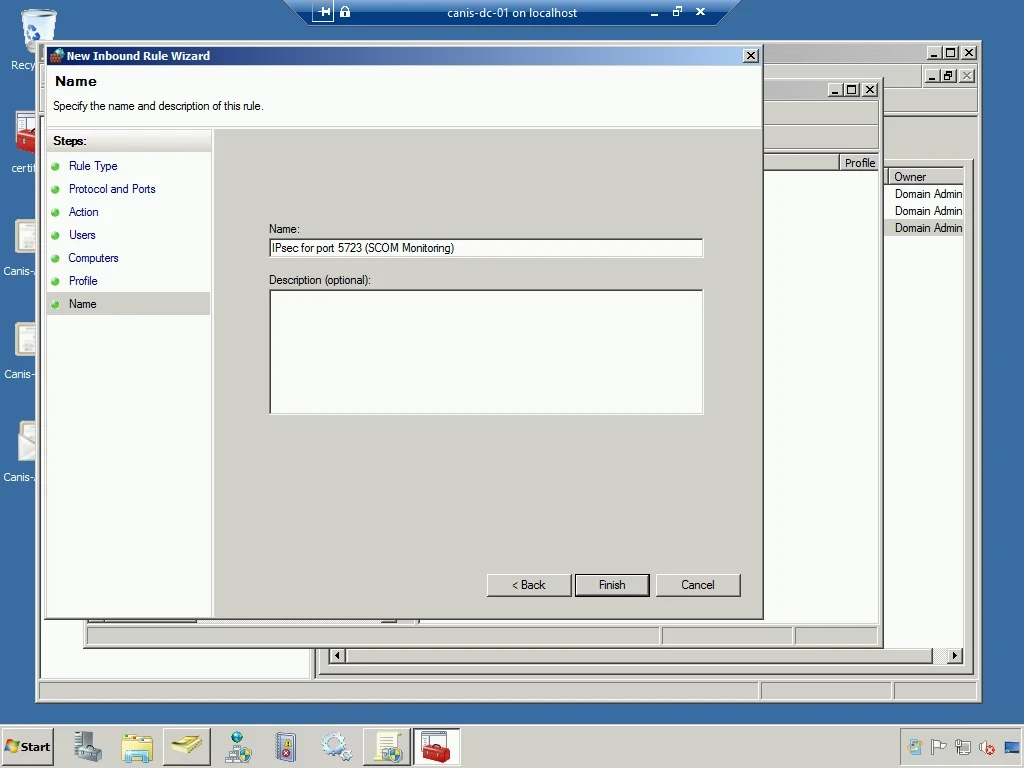

Finally, I give the new inbound rule a name, as seen below.

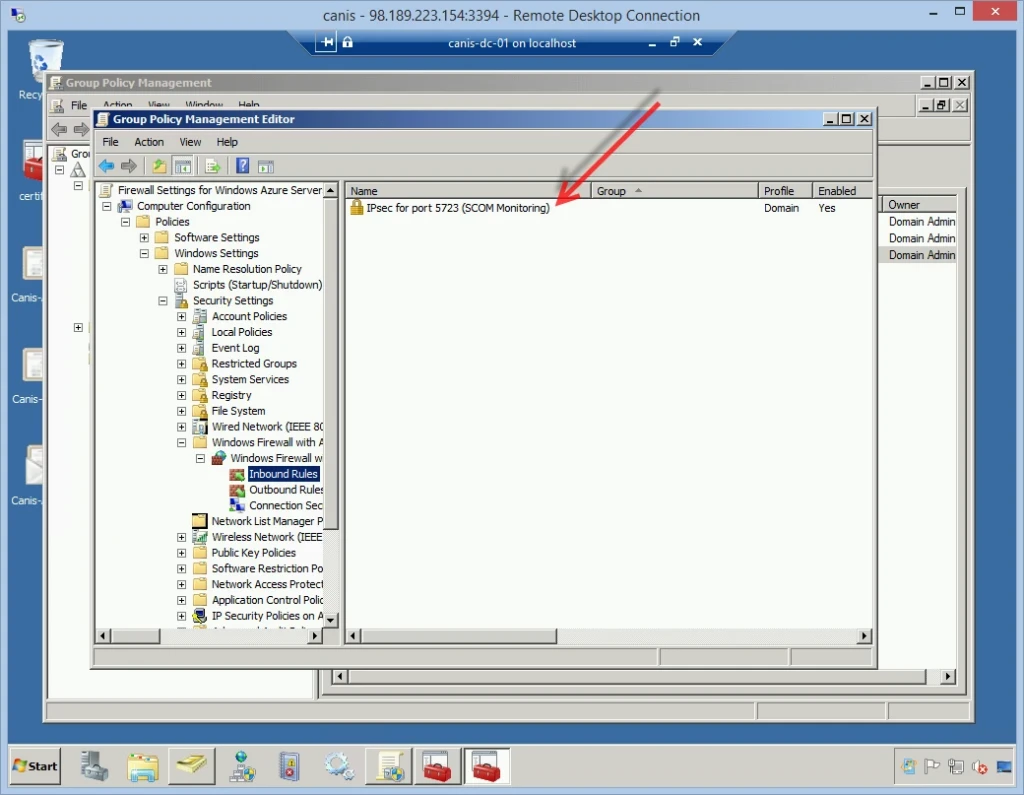

Now that I’m finished setting up the GPO, we now see the new inbound rule in the list of inbound rules in the Group Policy Management Editor. I then go ahead and close the editor, which returns me to the original Group Policy Management console.

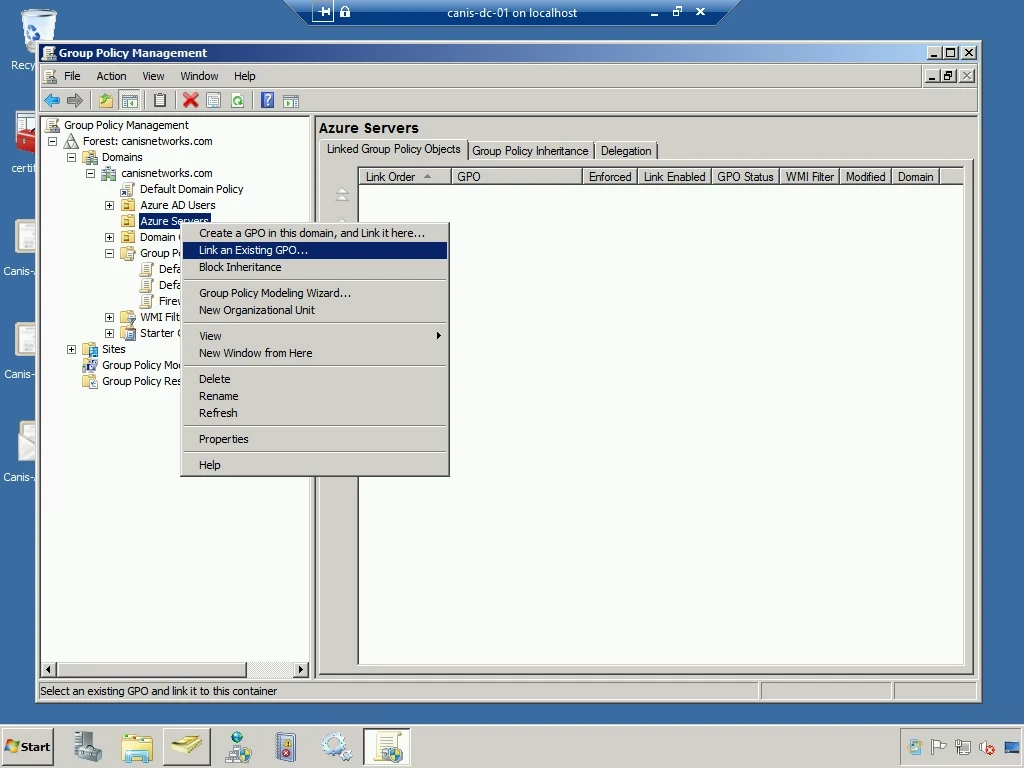

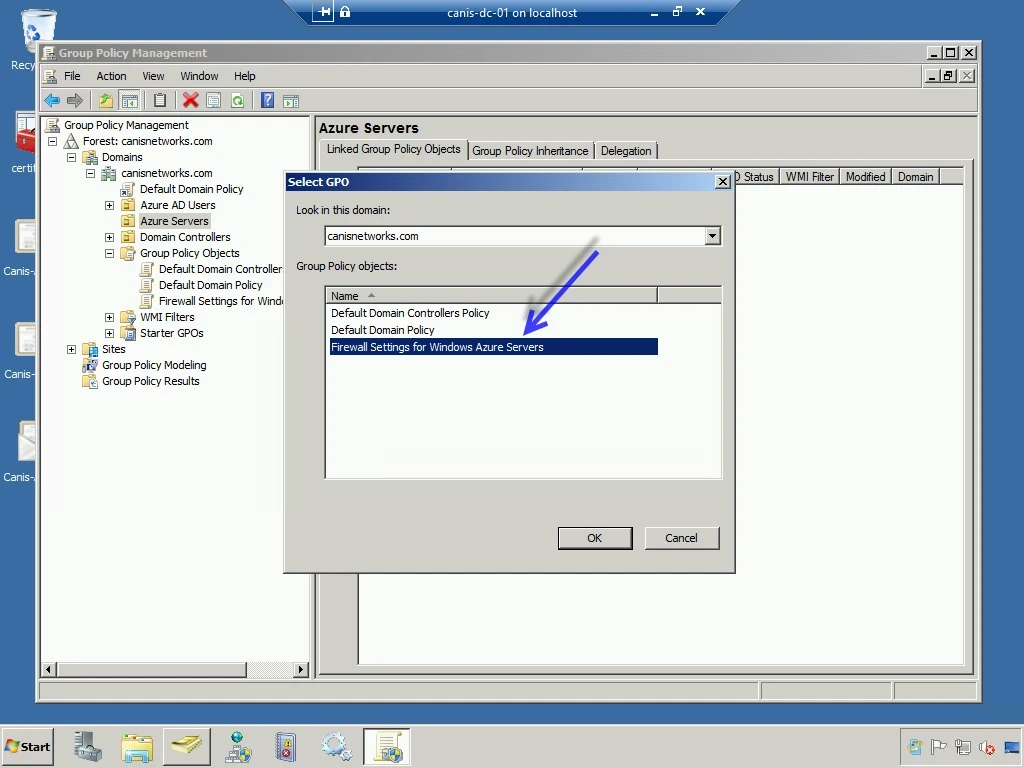

Now that we have our GPO set up, we need to link this GPO to our Azure Servers OU. I now right-click on the Azure Servers OU and select Link an Existing GPO… from the popup menu, as seen below.

In the Select GPO dialog, I go ahead and select my new GPO and then close the dialog.

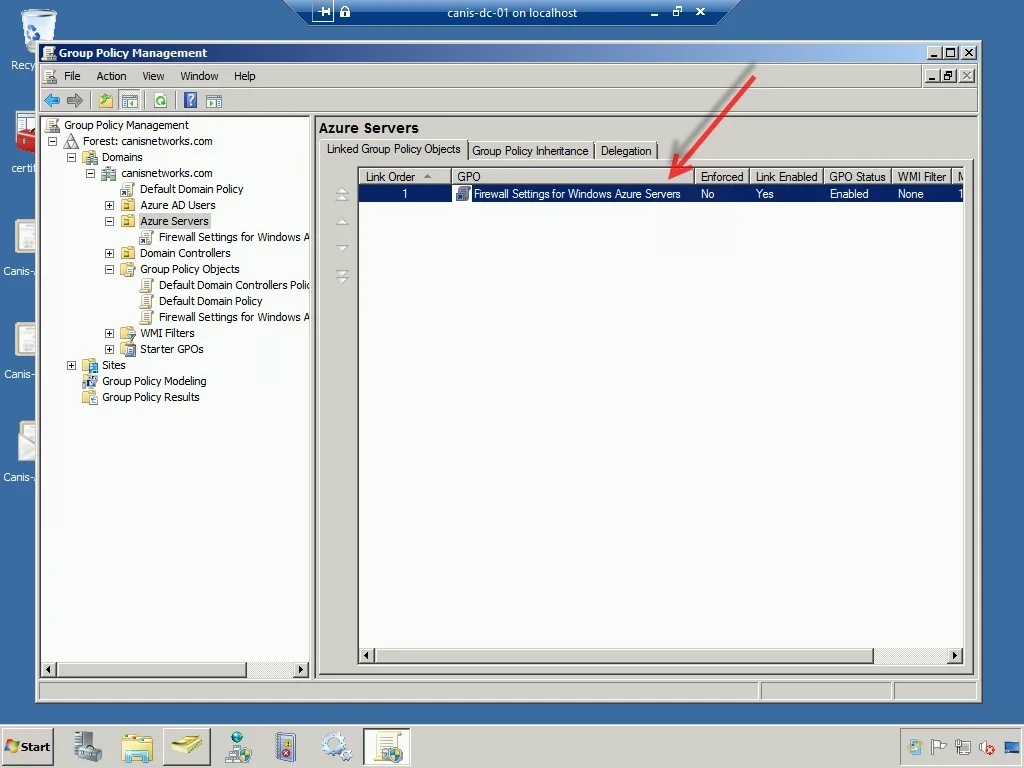

Now our GPO is associated with the Azure Servers OU, as seen below.

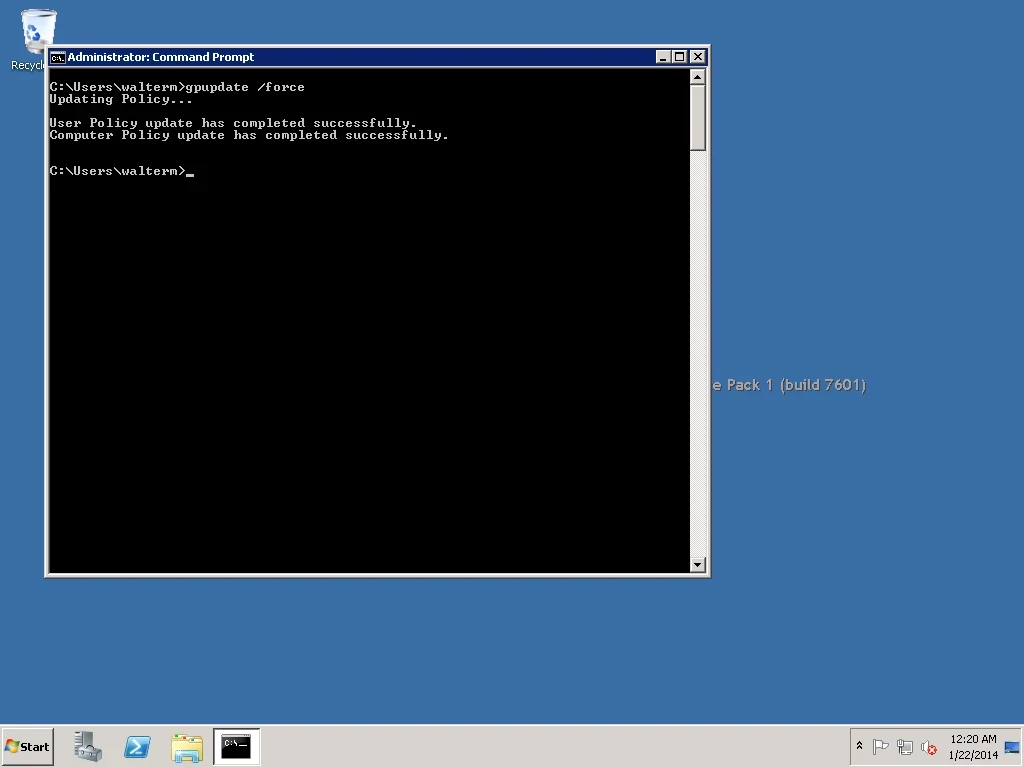

Now we will head back to our virtual machine (canis-sql12-01), apply the GPO, and verify that the GPO was applied. Below, from a command prompt, I enter the gpupdate /force command. We can see below that the policy update has successfully completed.

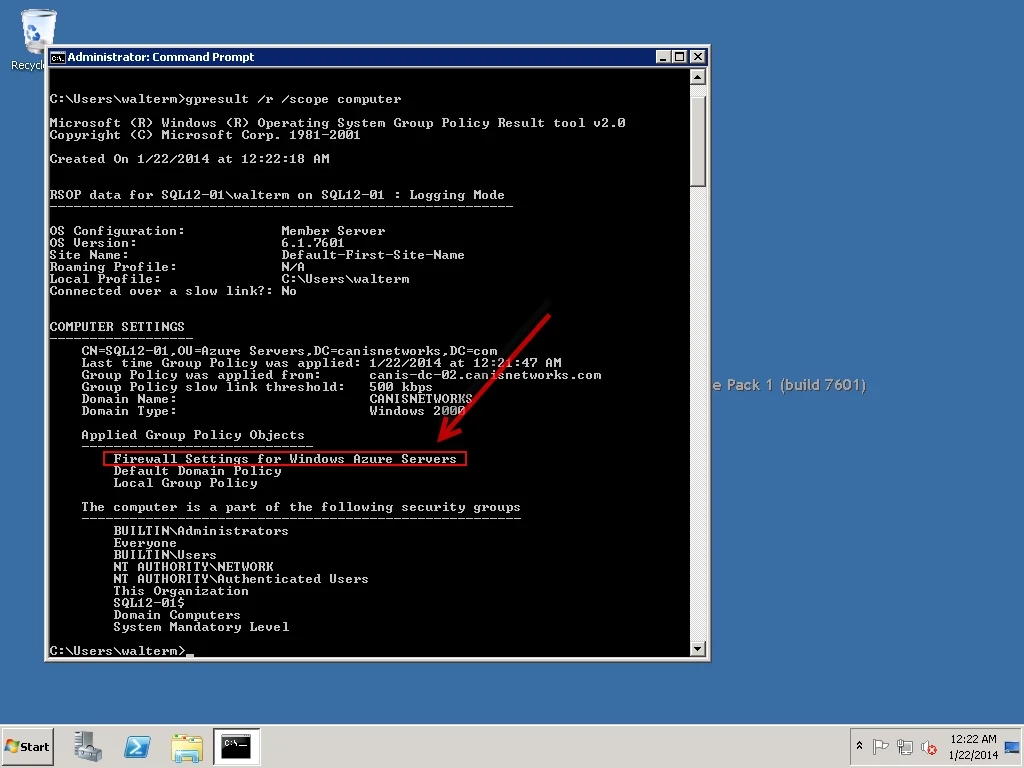

In order to verify that the GPO was applied, I then enter the gpresult /r /scope computer command, as seen below. As you can see, we have verified that the GPO has been applied.

Now let’s open Windows Firewall and verify both of our GPO settings there. From the Windows Firewall top-level node, we can verify that Windows Firewall is on for the Domain Profile. Also, we see a notification that the firewall state does have Group Policy settings applied (specifically, in this case, for the Domain Profile).

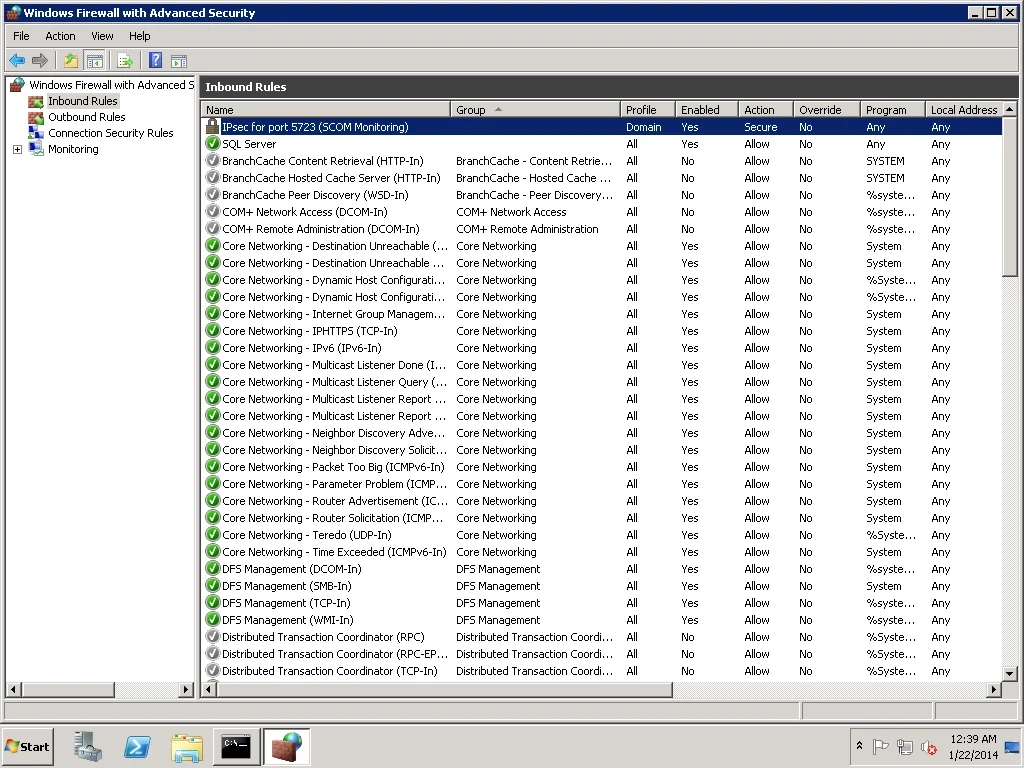

Now if we select the Inbound Rules node, we can verify that the IPsec inbound rule has been applied for port 5723, as seen below.

Limiting Outbound Internet Connections from Virtual Machines

For obvious reasons, it is a common practice for enterprise customers to limit outbound internet access from servers, particularly back-end servers that contain sensitive data with medium or high business impact if shared outside the company. In the public cloud, this becomes more pronounced as the enterprise customer is already placing a great deal of trust in the cloud provider by even considering the cloud platform, and would not desire that security controls be any less robust than on-premises. Thankfully, since Windows Azure virtual machines as well as PaaS machines can be domain-joined and have Group Policy applied as we have just seen, the enterprise customer can use familiar techniques to protect their servers and also prevent the servers themselves from sharing sensitive information. In this walkthrough, I will demonstrate how to limit outbound internet connections from a virtual machine. I won’t use Group Policy in this case, but it would be applied in the same manner as above for IPsec.

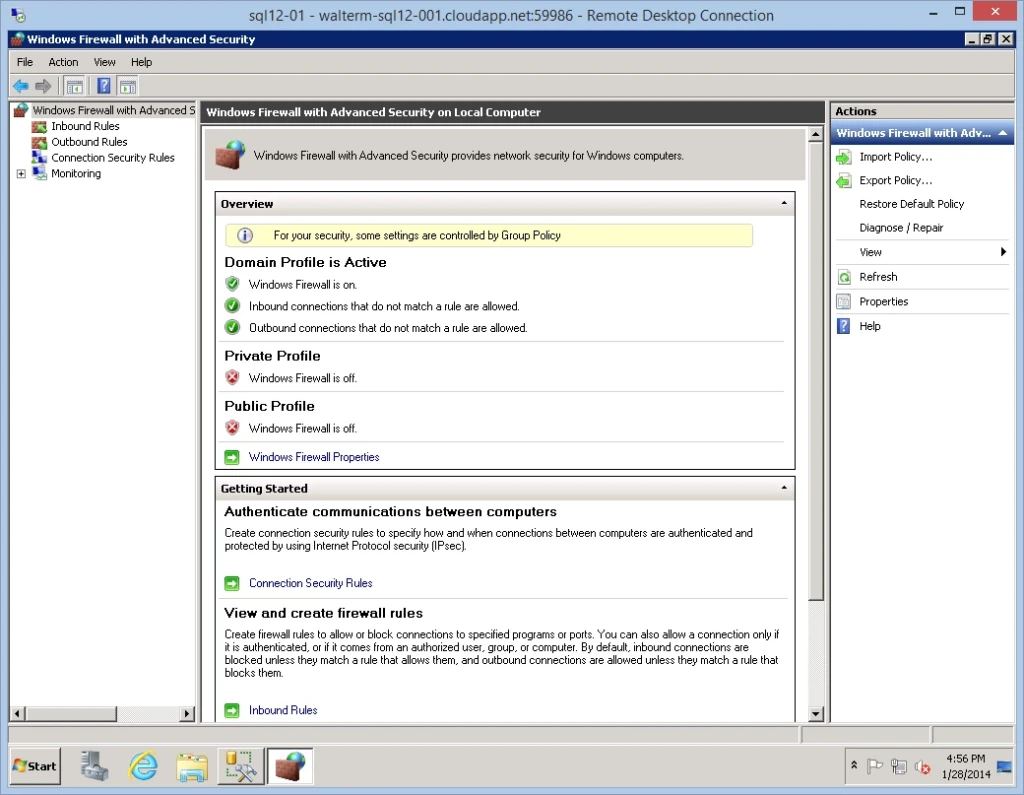

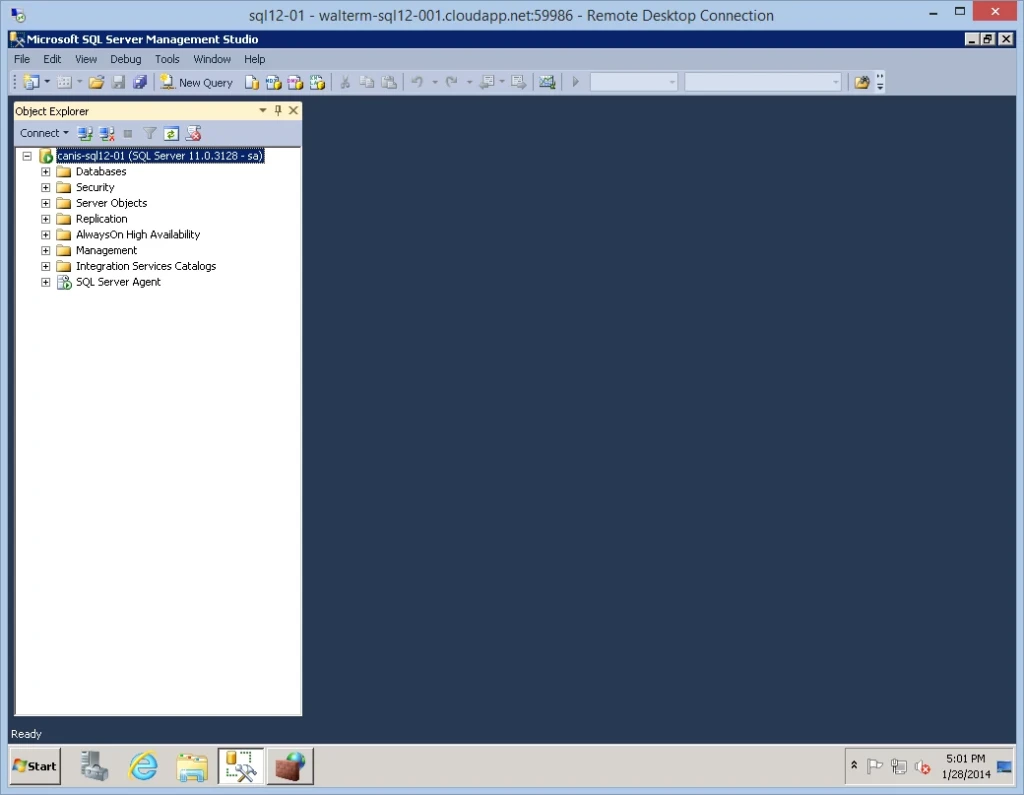

To begin, we’ll start with my SQL Server virtual machine used in the previous section, as seen below. We have Windows Firewall with Advanced Security launched as seen previously after applying Group Policy.

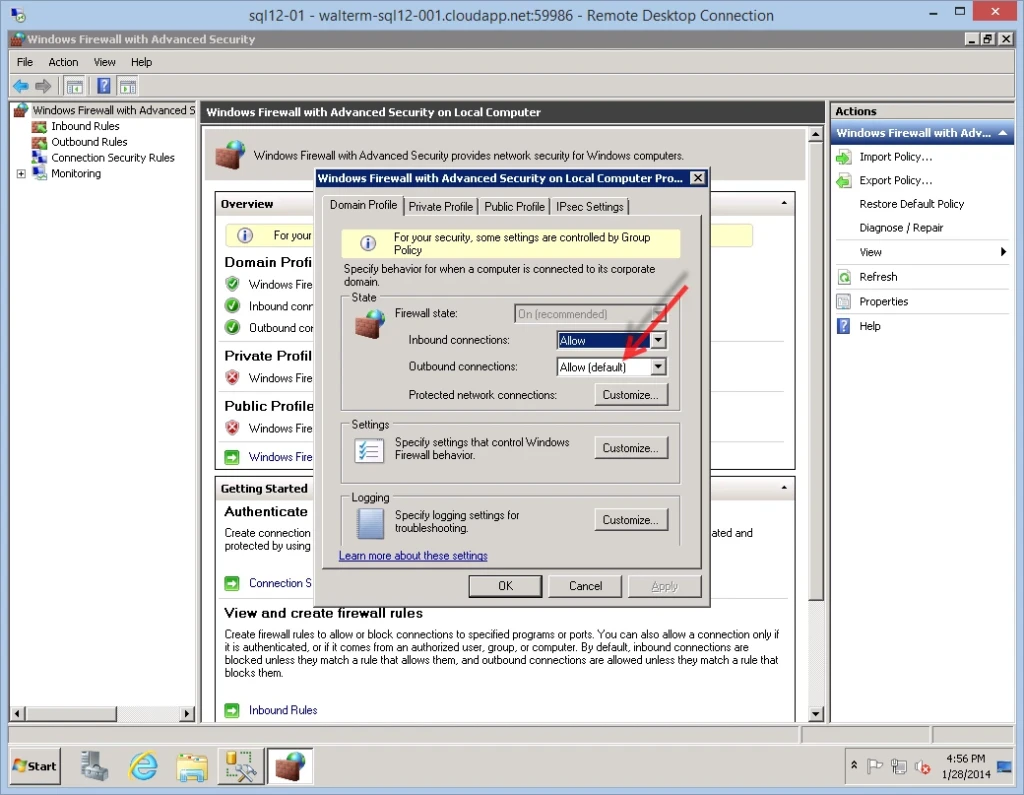

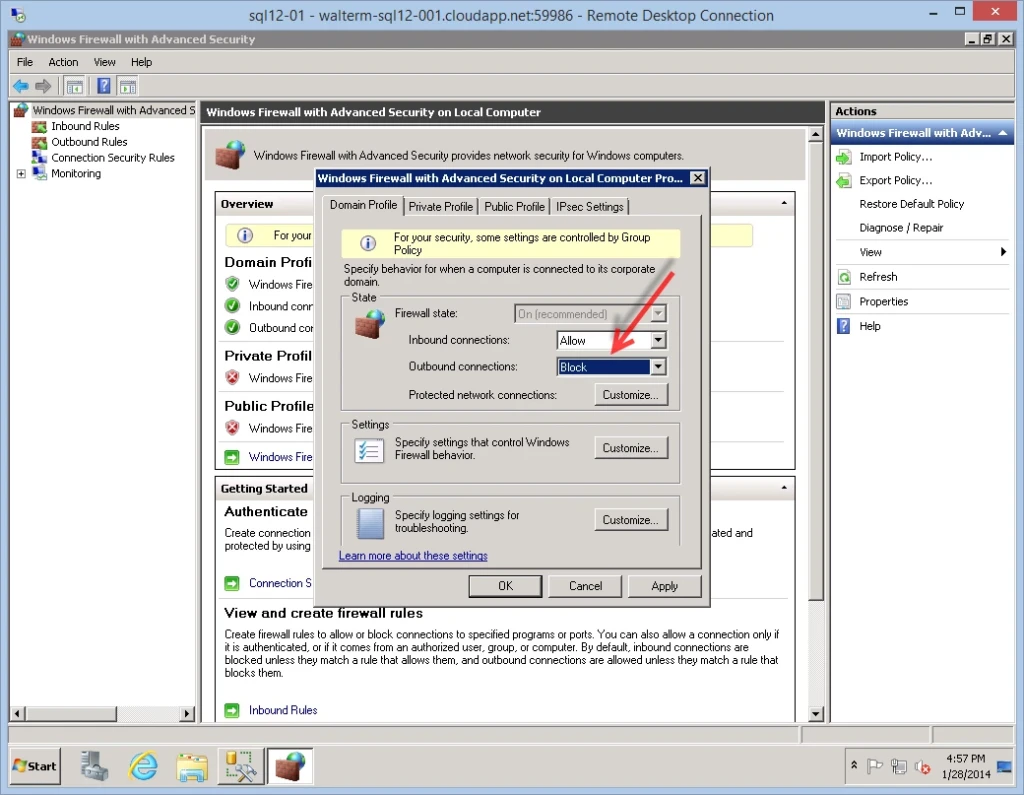

This machine can currently access the internet, and can also access another on-premises SQL Server from SQL Server Management Studio. We want to limit the virtual machine to only be able to access the on-premises subnet as defined in our VPN configuration, which is 192.168.1.0/24. I right-clicked on the top-level node to get the properties page, and noted that the outbound connections by default are allowed, as seen below.

I change this to “Block”, as seen below.

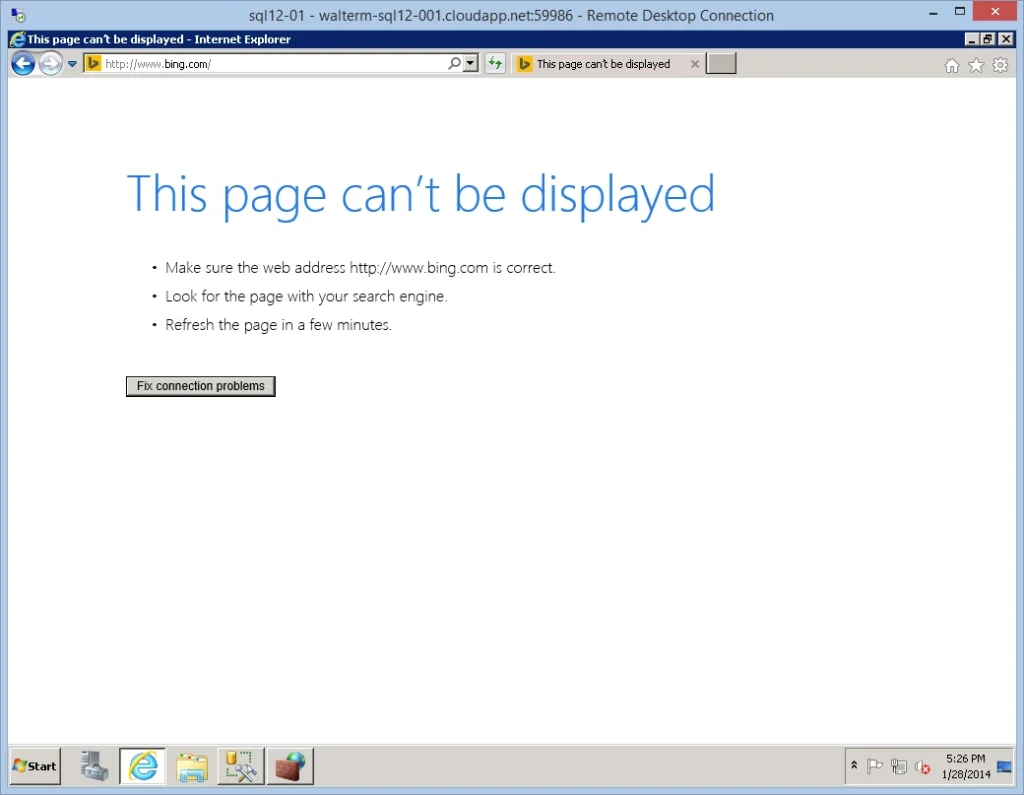

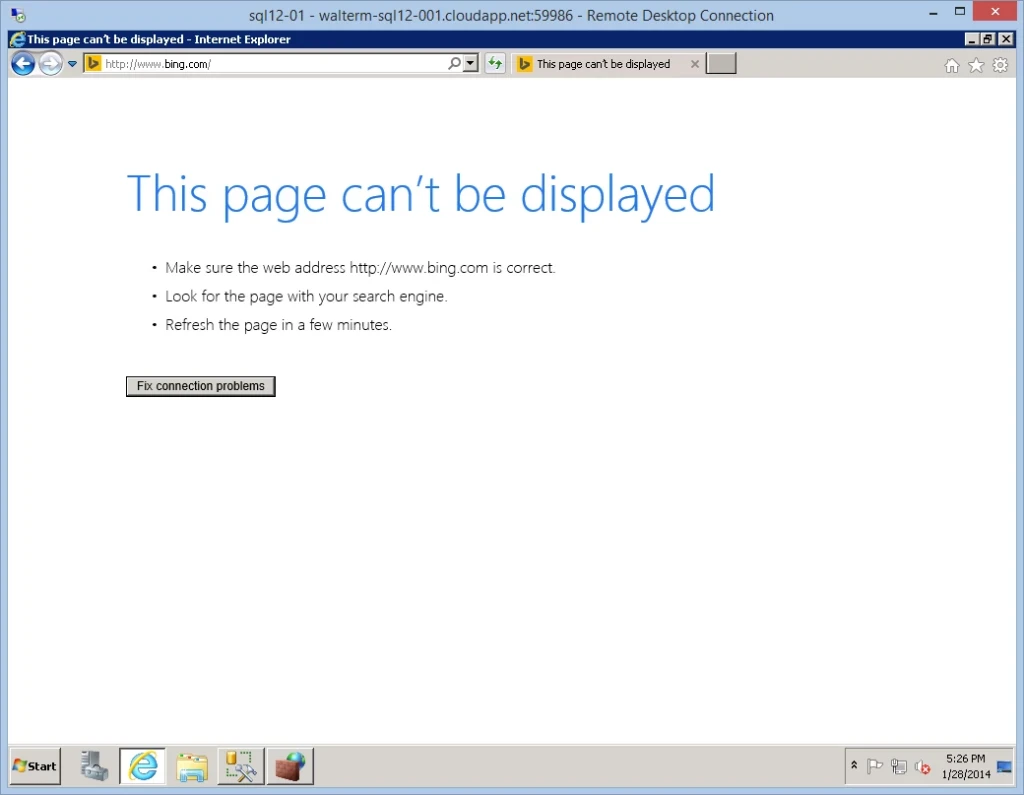

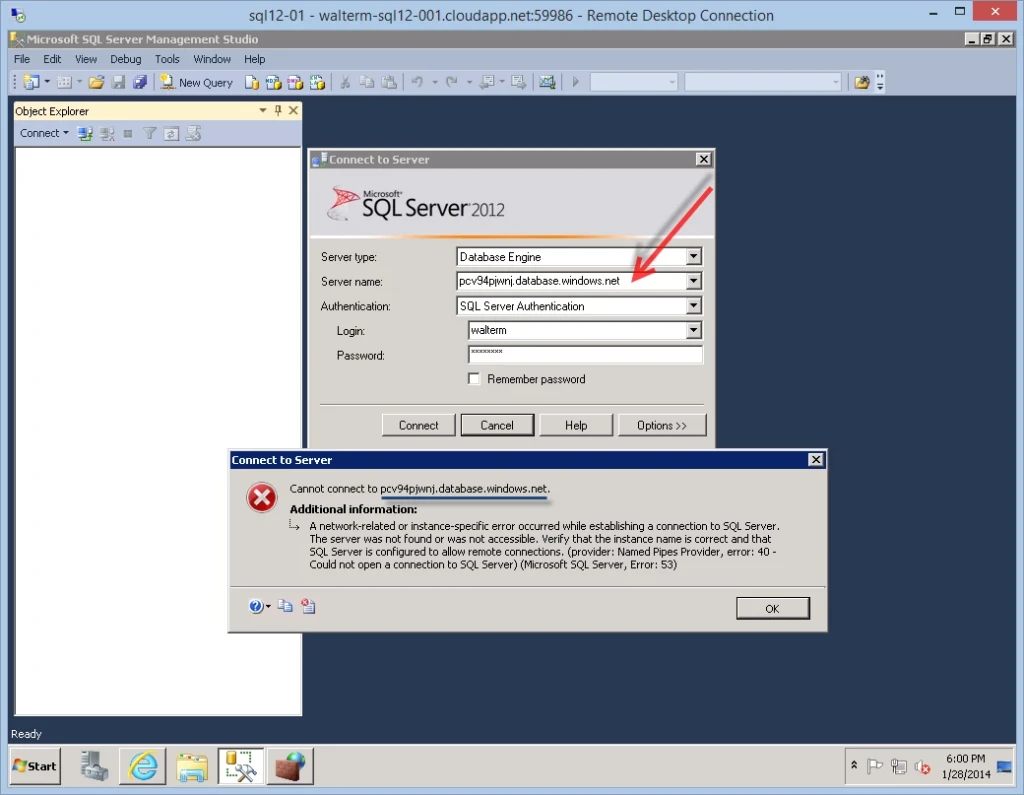

And now, I can’t gain access to my on-premises SQL Server or the internet, as seen below.

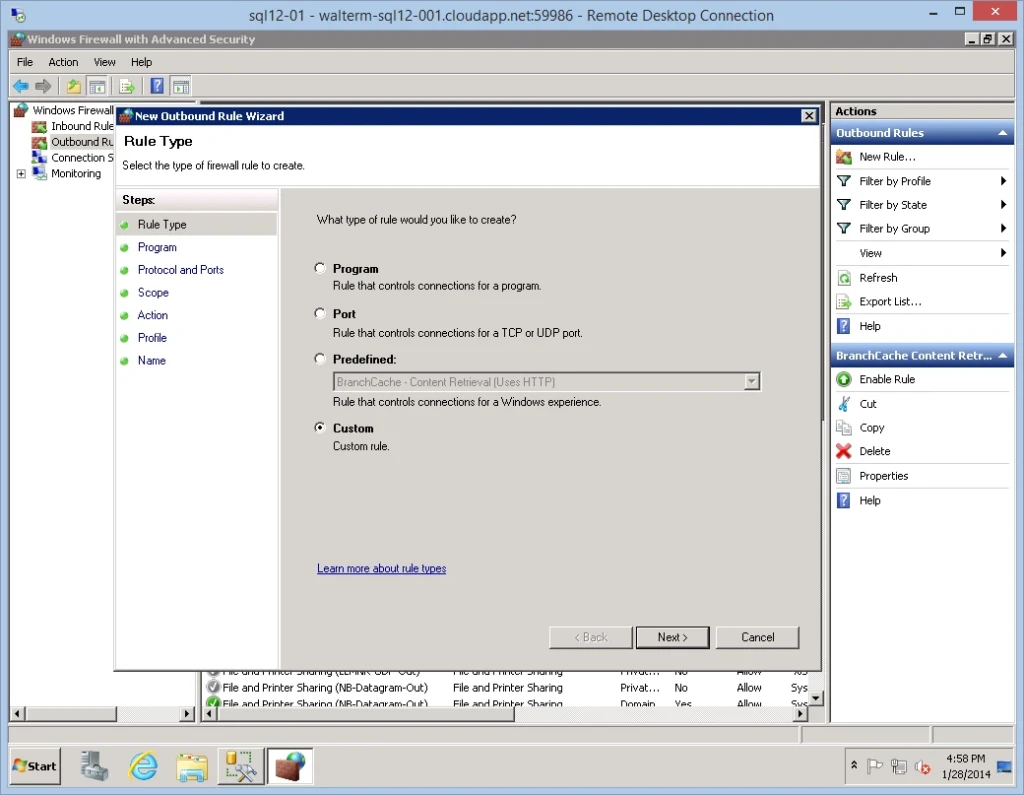

I now click on the Outbound Rules node of Windows Firewall, right-click, and select New Rule… from the popup menu. I choose a Custom rule on the Rule Type page of the New Outbound Rule Wizard, as seen below.

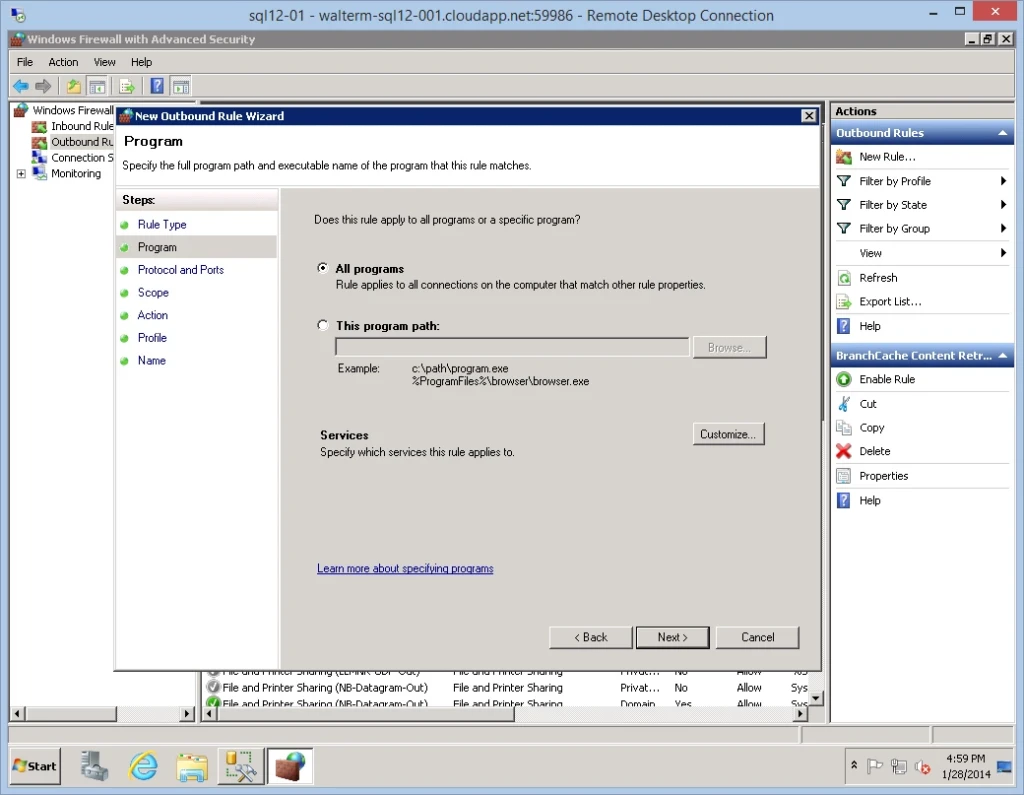

On the Program page, I leave the defaults.

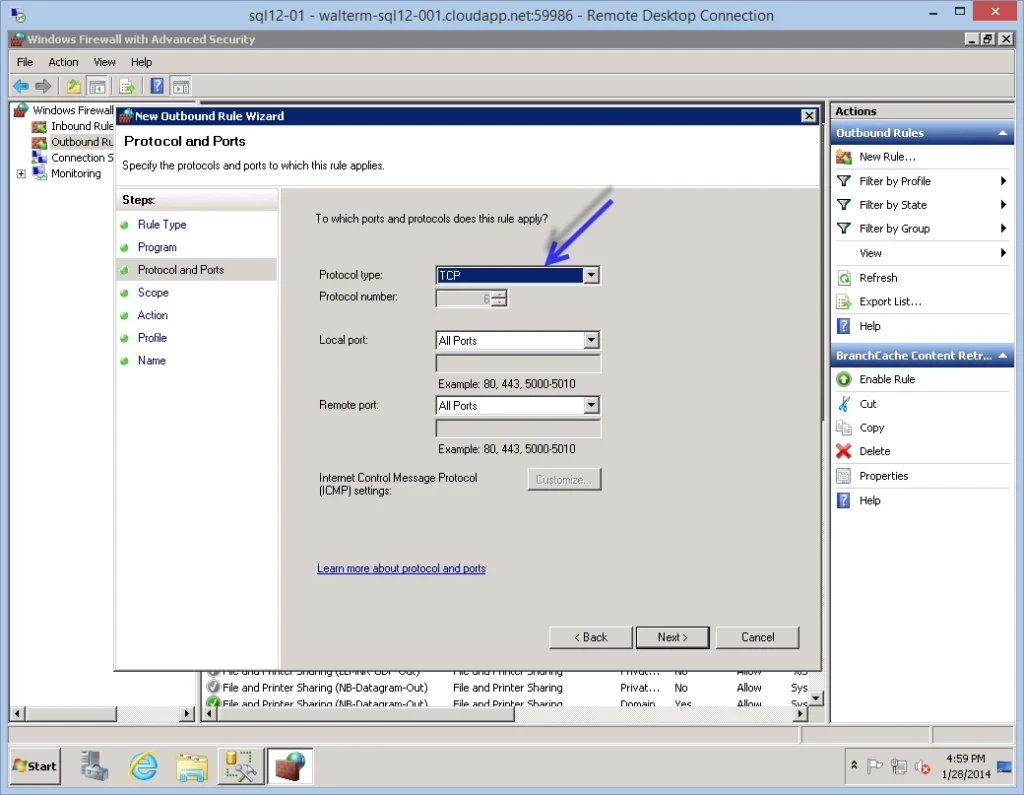

On the Protocol and Ports page, for my purposes I select the TCP protocol, as seen below.

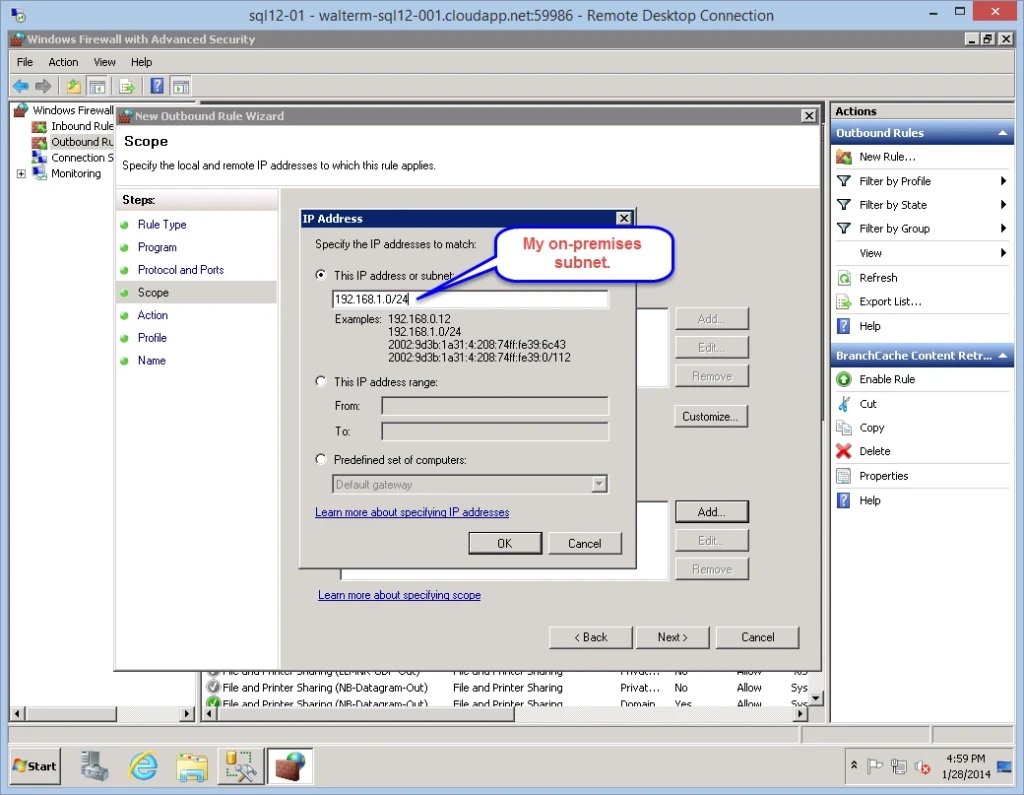

Next, we have the Scope page. I change the remote IP addresses option to “These IP addresses:”, and then select the Add… button.

In the dialog presented, I specify my on-premises subnet as the only set of IP addresses that this virtual machine can access, as seen below.

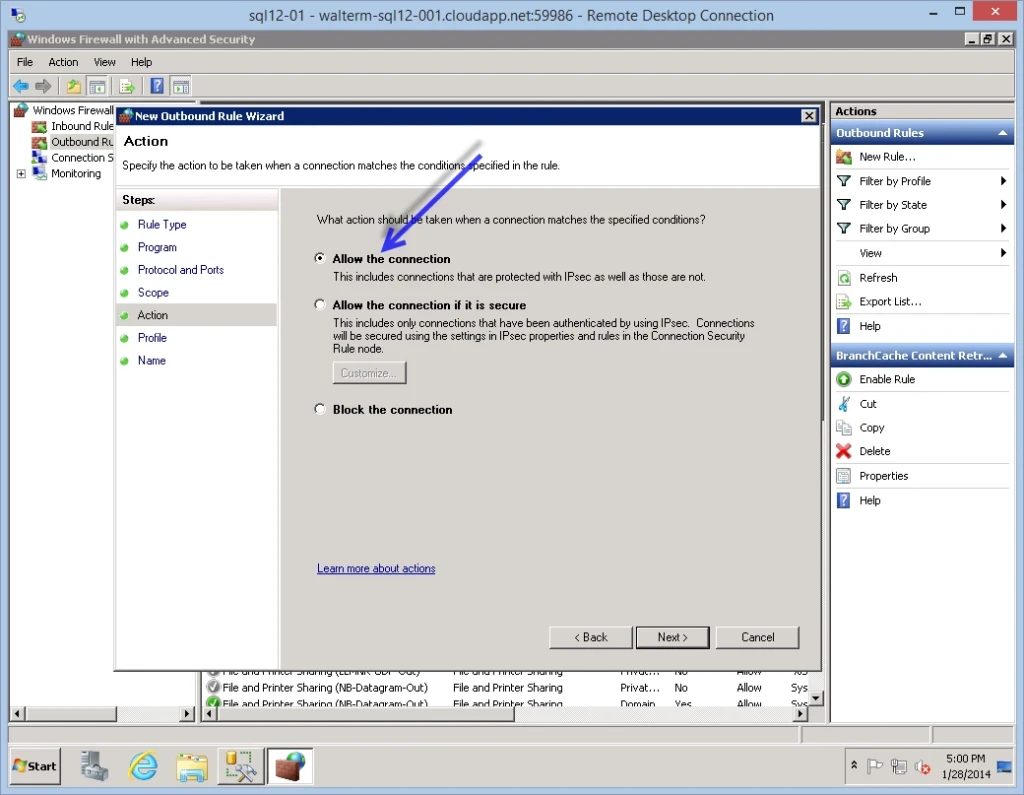

On the Action page, I choose the “Allow the connection” option.

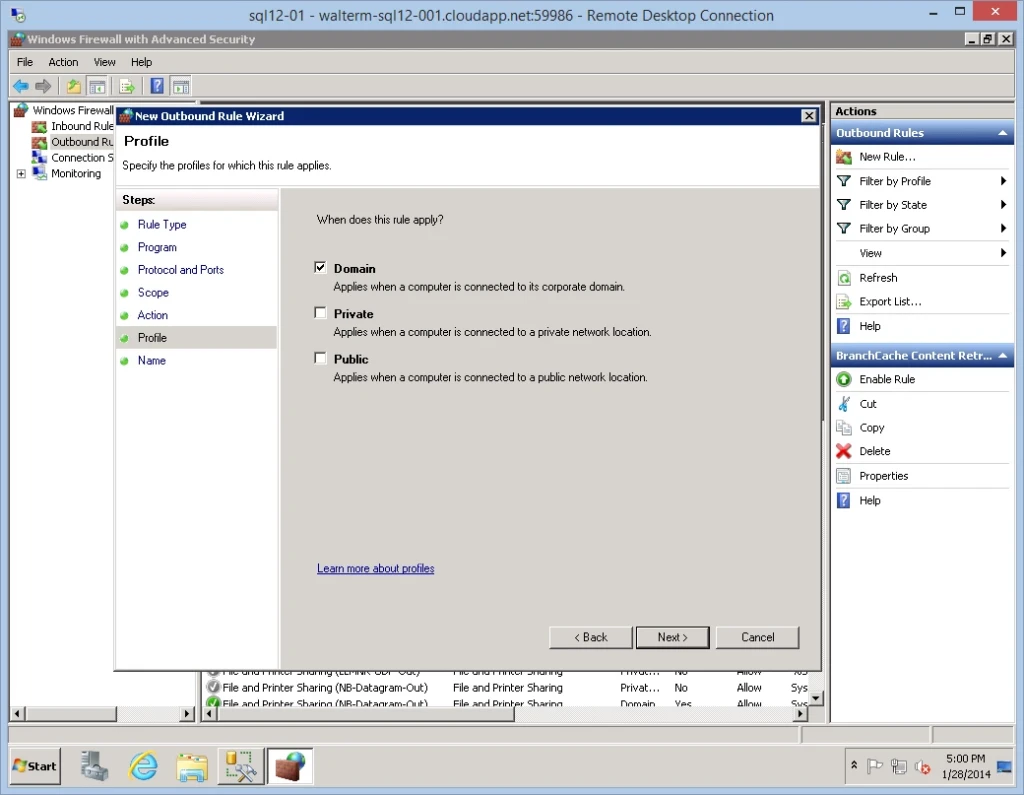

Next we have the Profile page, where for my purposes I select only the Domain profile.

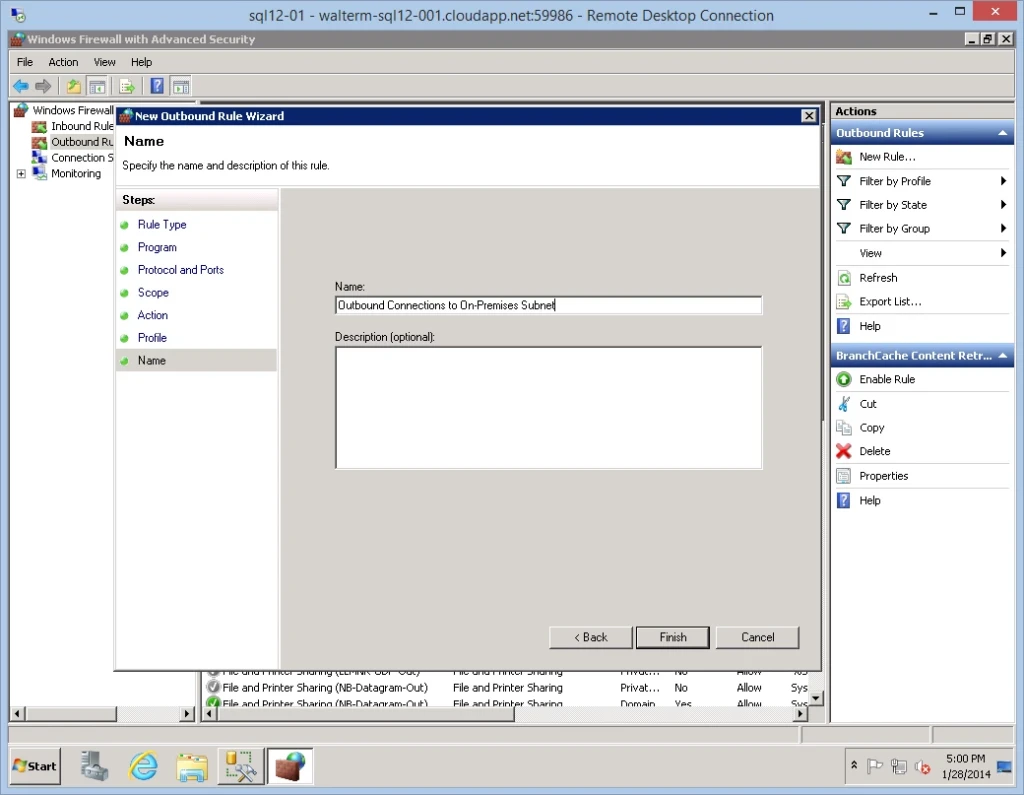

On the last Name page, I give the new rule the name “Outbound Connections to On-Premises Subnet”, as seen below.

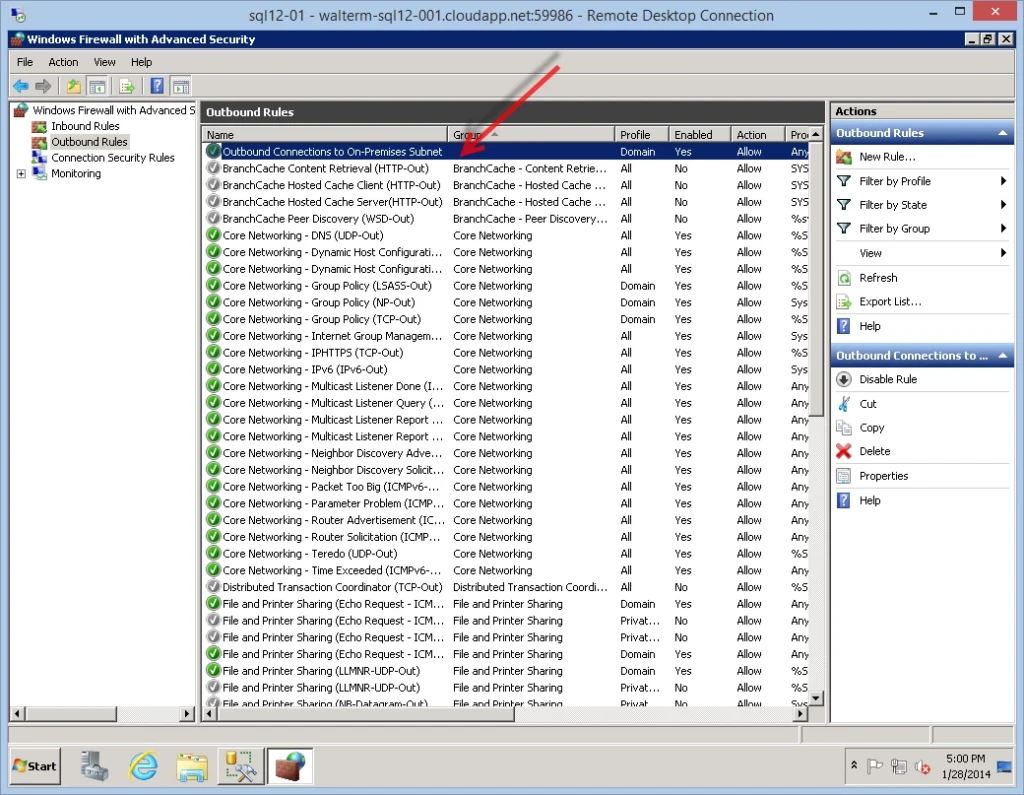

That completes the outbound rule wizard and I see my new rule below.

Now I can connect to my on-premises SQL Server or any other server in the on-premises subnet, but I cannot connect to the internet, as desired.

So we have effectively limited this virtual machine from accessing the internet. But now we can’t access other machines in the Windows Azure environment either. This can be resolved by adding additional individual IP addresses or IP address ranges to the outbound rule we just created. For example, let’s say I wanted to use SQL Server Management Studio on this virtual machine to access an Azure SQL Database server with the URL pcv94pjwnj.database.windows.net, as seen below.

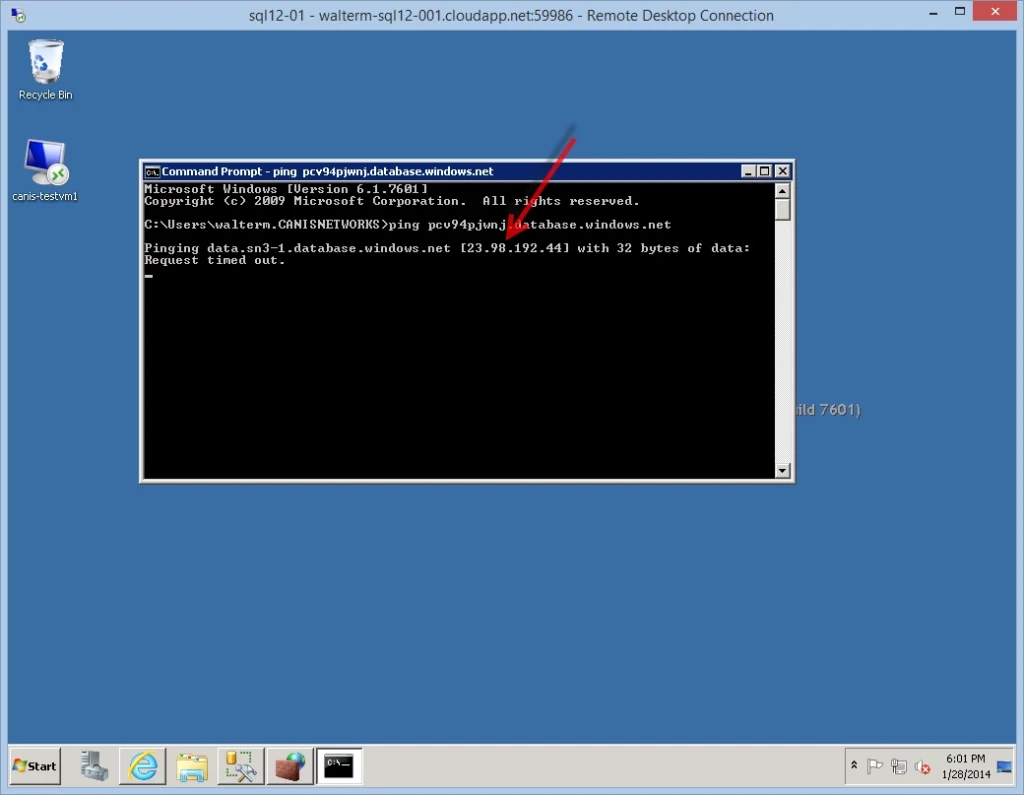

I ping the server and find its IP address, as seen below.

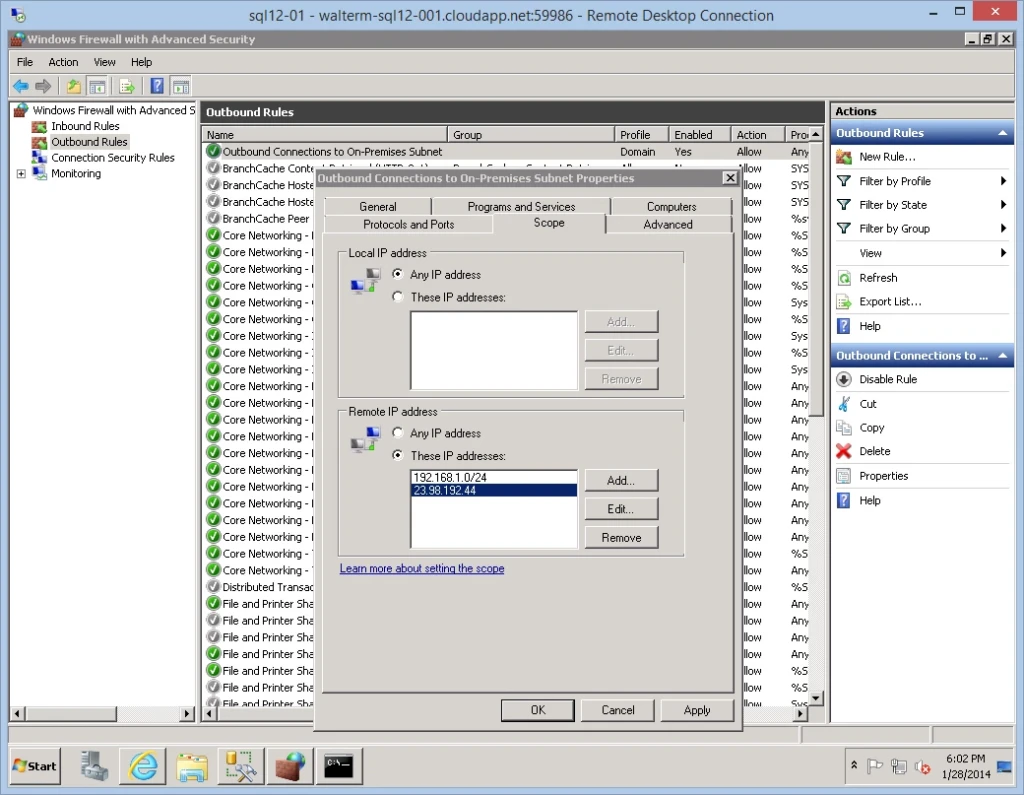

I then add the individual IP address to my whitelist of remote IP addresses, as seen below.

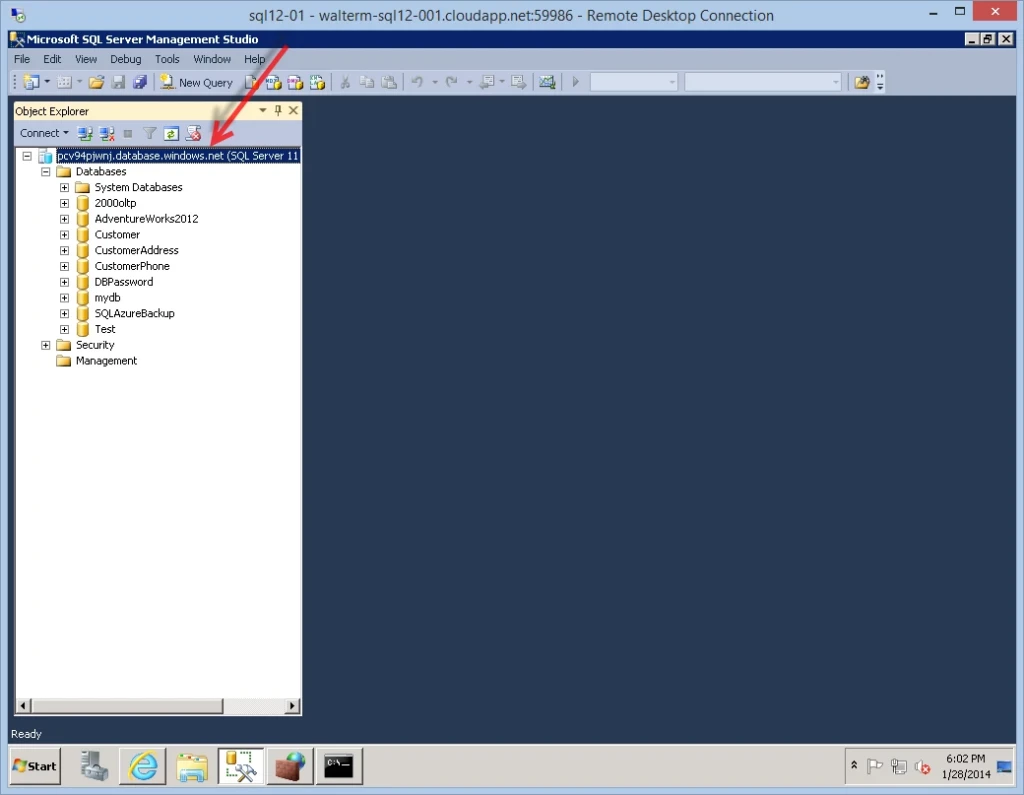

Subsequently, I can access the server.
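The lookup-then-whitelist step can be modeled in Python as follows. This is a reasoning aid and does not modify Windows Firewall: `allowlist_entry_for` and `outbound_allowed` are hypothetical helpers, name resolution stands in for the ping used above, and the sketch ignores the TCP-only restriction of the actual rule.

```python
import ipaddress
import socket


def allowlist_entry_for(hostname, resolver=socket.gethostbyname):
    """Resolve a hostname and return it as a single-address /32 range."""
    address = ipaddress.IPv4Address(resolver(hostname))
    return f"{address}/32"


def outbound_allowed(destination_ip, allowed_ranges):
    """Outbound traffic is blocked unless the destination is in an allowed range."""
    address = ipaddress.ip_address(destination_ip)
    return any(address in ipaddress.ip_network(r) for r in allowed_ranges)


# Start with the on-premises subnet from the VPN configuration.
allowed = ["192.168.1.0/24"]
```

Calling `allowed.append(allowlist_entry_for("pcv94pjwnj.database.windows.net"))` would add whatever address the name resolves to at that moment. The address behind a hostname can change, so an entry created this way needs to be rechecked from time to time.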

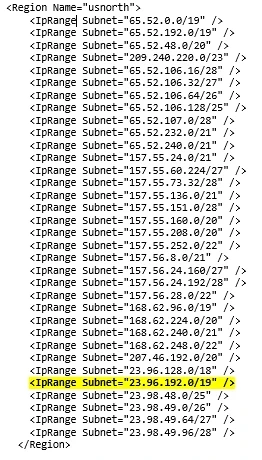

If I want to specify an IP address range from the Windows Azure platform, the list of Azure datacenter IP ranges can be downloaded from here. There are many ranges to choose from, so it is best to be selective and add only the entries that apply to the datacenters you actually use. I primarily use the US West datacenter, but still have some old database servers on US North, so in my example I could have added the following entries to allow the virtual machine to access all of US North. The range highlighted below contains the database server we just added to the outbound rule.

So now you should be all set with respect to locking down virtual machine outbound access. Also note that periodically Azure address ranges do change, so these ranges should be checked every few months, or if suddenly an Azure resource at a whitelisted IP address is no longer available.
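One way to make that periodic check less tedious is to compare the ranges currently whitelisted against a freshly downloaded list. The Python sketch below shows the comparison only. The function name `diff_ranges` is hypothetical, the ranges in the example are documentation placeholders rather than real Azure ranges, and parsing the downloaded file is left out because its format is not described here.

```python
import ipaddress


def diff_ranges(old_ranges, new_ranges):
    """Return (added, removed) CIDR ranges between two lists."""
    old = {ipaddress.ip_network(r) for r in old_ranges}
    new = {ipaddress.ip_network(r) for r in new_ranges}
    added = sorted(str(n) for n in new - old)
    removed = sorted(str(n) for n in old - new)
    return added, removed


# Placeholder ranges for illustration only.
whitelisted = ["192.0.2.0/24", "198.51.100.0/24"]
latest = ["198.51.100.0/24", "203.0.113.0/24"]
added, removed = diff_ranges(whitelisted, latest)
```

Ranges in `removed` are candidates for deletion from the outbound rule, and ranges in `added` may need to be whitelisted if your resources have moved into them.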

Conclusion

In this document, we examined a number of methods for securing IaaS virtual machines. Indeed, because the Windows Azure IaaS environment can be effectively configured as an extension of your on-premises network, you can take advantage of the familiarity of existing on-premises security constructs that are augmented in Windows Azure, allowing you to effectively secure virtual machines that you have deployed to Azure running enterprise applications. Network ACLs, virtual networks, and Windows Firewall with Advanced Security all work together to ensure robust security scenarios.

References

- Windows Azure Network Security Whitepaper: https://download.microsoft.com/download/4/3/9/43902EC9-410E-4875-8800-0788BE146A3D/Windows%20Azure%20Network%20Security%20Whitepaper%20-%20FINAL.docx

- About Network Access Control Lists (ACLs):

- How to Set Up Endpoints to a Virtual Machine: https://azure.microsoft.com/en-us/manage/windows/how-to-guides/setup-endpoints/

- Network Access Control List Capability in Windows Azure PowerShell

- Setting an Endpoint ACL on a Windows Azure VM: https://convective.wordpress.com/2013/06/08/setting-an-endpoint-acl-on-a-windows-azure-vm/

- Securing End-to-End IPsec Connections by Using IKEv2 in Windows Server 2012: https://technet.microsoft.com/en-us/library/hh831807.aspx#BKMK_Step2

- Windows Firewall and IPsec Policy Deployment Step-by-Step Guide: https://technet.microsoft.com/en-us/library/deploy-ipsec-firewall-policies-step-by-step%28v=WS.10%29.aspx

- Windows Firewall with Advanced Security Administration with Windows PowerShell: https://technet.microsoft.com/en-us/library/hh831755.aspx

- Windows Azure Datacenter IP Ranges:

Ashwin Palekar

Windows Azure Networking Team