This post was co-authored by Andy Randall, VP of Business Development, Kinvolk GmbH

We are pleased to share the availability of Calico Network Policies in Azure Kubernetes Service (AKS). Calico policies let you define filtering rules to control the flow of traffic to and from Kubernetes pods. In this blog post, we will explore in more technical detail the engineering work that went into enabling Azure Kubernetes Service to work with a combination of Azure CNI for networking and Calico for network policy.

First, some background. Simplifying somewhat, there are three parts to container networking:

- Allocating an IP address to each container as it’s created (IP address management, or IPAM).

- Routing the packets between container endpoints, which in turn splits into:

  - Routing from host to host (inter-node routing).

  - Routing within the host between the external network interface and the container, as well as routing between containers on the same host (intra-node routing).

- Ensuring that packets that should not be allowed are blocked (network policy).

Typically, a single network plug-in technology addresses all of these aspects. However, the open API that Kubernetes uses for networking, the Container Network Interface (CNI), actually allows you to combine different implementations.

This choice of configurations brings opportunities, but it also calls for a plan to make sure that the mechanisms you choose are compatible and help you achieve your networking goals. Let’s look more closely at those details.

Networking: Azure CNI

Cloud networks, like Azure, were originally built for virtual machines with typically just one or a small number of relatively static IP addresses. Containers change all that, and introduce a host of new challenges for the cloud networking layer, as dozens or even hundreds of workloads are rapidly created and destroyed on a regular basis, each of which is its own IP endpoint on the underlying network.

The first approaches to enabling container networking in the cloud used overlays, such as VXLAN, to ensure that only the host IP was exposed to the underlying network. Overlay network solutions such as flannel, or AKS’s kubenet (basic) networking mode, do a great job of hiding the underlying network from the containers. Unfortunately, that is also their downside: the containers are not actually running in the underlying VNET, meaning they cannot be addressed like regular endpoints and can only communicate outside of the cluster via network address translation (NAT).
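To make the overlay idea concrete, here is a minimal Python sketch of VXLAN-style encapsulation. The 8-byte header layout follows RFC 7348, but the `IpPacket` type, the `encapsulate` helper, the inner-packet serialization, and all addresses are hypothetical simplifications: a real VXLAN endpoint wraps the full inner Ethernet frame and adds outer Ethernet and UDP headers. What the sketch does show is the property described above: the packet on the wire carries only node addresses, so the underlying network never sees the pod IPs.

```python
import struct
from dataclasses import dataclass


@dataclass(frozen=True)
class IpPacket:
    src: str  # source IP address
    dst: str  # destination IP address
    payload: bytes


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header from RFC 7348: an 8-bit flags field with the
    I bit (0x08) set, 24 reserved bits, a 24-bit VNI, and 8 more reserved bits."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!B3xI", 0x08, vni << 8)


def encapsulate(inner: IpPacket, src_host: str, dst_host: str, vni: int) -> IpPacket:
    """Wrap a pod-to-pod packet in an outer packet addressed host to host."""
    # Toy serialization of the inner packet (hypothetical; not a real wire format).
    inner_bytes = f"{inner.src}>{inner.dst}|".encode() + inner.payload
    # Only the node IPs appear in the outer header, so the underlying network
    # never needs to know about the pod addresses.
    return IpPacket(src_host, dst_host, vxlan_header(vni) + inner_bytes)


pod_packet = IpPacket("10.244.1.5", "10.244.2.7", b"hello")
on_the_wire = encapsulate(pod_packet, "10.240.0.4", "10.240.0.5", vni=1)
print(on_the_wire.src, on_the_wire.dst)  # 10.240.0.4 10.240.0.5
```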

With Azure CNI, which is enabled with advanced mode networking in AKS, we added the ability for each container to get its own real IP address within the same VNET as the host. When a container is created, the Azure CNI IPAM component assigns it an IP address from the VNET, and ensures that the address is configured on the underlying network through the magic of the Azure software-defined network layer, taking care of the inter-node routing piece.
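A toy model of the IPAM step might look like the following. This is a hypothetical sketch, not Azure CNI’s code: the `VnetIpam` class, the subnet, and the container IDs are made up for illustration. It does reflect one real Azure constraint: Azure reserves five addresses in every subnet (the network address, the first three host addresses, and the broadcast address), so the first assignable address in `10.240.0.0/24` is `10.240.0.4`.

```python
import ipaddress
from collections import deque


class VnetIpam:
    """Toy IPAM that hands out container addresses from a VNET subnet.

    Hypothetical illustration only; it is not Azure CNI's implementation.
    """

    def __init__(self, cidr: str) -> None:
        self.subnet = ipaddress.IPv4Network(cidr)
        hosts = list(self.subnet.hosts())  # excludes network and broadcast addresses
        # Azure also reserves the first three host addresses of every subnet
        # (.1 for the default gateway, .2 and .3 for Azure DNS).
        self._free = deque(hosts[3:])
        self._assigned: dict[str, ipaddress.IPv4Address] = {}

    def allocate(self, container_id: str) -> ipaddress.IPv4Address:
        # Idempotent: a retried request for the same container gets the same address.
        if container_id in self._assigned:
            return self._assigned[container_id]
        if not self._free:
            raise RuntimeError(f"no free addresses left in {self.subnet}")
        ip = self._free.popleft()
        self._assigned[container_id] = ip
        return ip

    def release(self, container_id: str) -> None:
        # Return the address to the back of the pool so it is not reused immediately.
        ip = self._assigned.pop(container_id, None)
        if ip is not None:
            self._free.append(ip)


ipam = VnetIpam("10.240.0.0/24")
print(ipam.allocate("pod-a"))  # 10.240.0.4
print(ipam.allocate("pod-b"))  # 10.240.0.5
```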

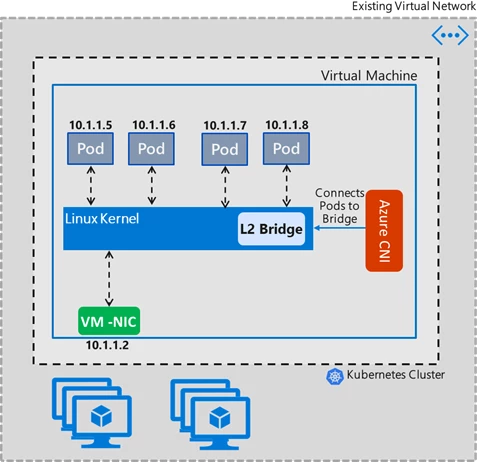

So with IPAM and inter-node routing taken care of, we now need to consider intra-node routing: how do we get a packet between two containers, or between the host’s network interface (typically eth0) and the virtual ethernet (veth) interface of the container?

It turns out the Linux kernel is rich in networking capabilities, and there are many different ways to achieve this goal. One of the simplest is a virtual bridge device. With this approach, all the containers are connected on a local layer two segment, just like physical machines connected via an ethernet switch.

- Packets from the ‘real’ network are switched through the bridge to the appropriate container via standard layer two techniques (ARP and address learning).

- Packets to the real network are passed through the bridge, to the NIC, where they are routed to the remote node.

- Packets from one container to another also flow through the bridge, just like two PCs connected on an ethernet switch.
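The layer two behavior in the list above (flooding ARP broadcasts, then learning which port each MAC address sits behind) can be sketched as a toy learning bridge. The class, the port names such as `veth-a`, and the MAC addresses are hypothetical; the Linux bridge implements the same idea in its forwarding database, with extras such as entry aging that are omitted here.

```python
BROADCAST = "ff:ff:ff:ff:ff:ff"


class LearningBridge:
    """Toy layer two bridge with a forwarding database (MAC address -> port)."""

    def __init__(self, ports: list[str]) -> None:
        self.ports = ports
        self.fdb: dict[str, str] = {}

    def forward(self, in_port: str, src_mac: str, dst_mac: str) -> list[str]:
        """Return the ports a frame should be sent out of."""
        # Address learning: the sender is reachable via the port it arrived on.
        self.fdb[src_mac] = in_port
        out_port = self.fdb.get(dst_mac)
        if dst_mac == BROADCAST or out_port is None:
            # Broadcasts (such as ARP requests) and unknown destinations are
            # flooded to every port except the ingress port.
            return [p for p in self.ports if p != in_port]
        if out_port == in_port:
            return []  # destination is behind the ingress port: drop
        return [out_port]


bridge = LearningBridge(["eth0", "veth-a", "veth-b"])
mac_a, mac_b = "02:00:00:00:00:0a", "02:00:00:00:00:0b"
print(bridge.forward("veth-a", mac_a, BROADCAST))  # ARP request: ['eth0', 'veth-b']
print(bridge.forward("veth-b", mac_b, mac_a))      # ARP reply: ['veth-a']
print(bridge.forward("veth-a", mac_a, mac_b))      # unicast: ['veth-b']
```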

This approach, which is illustrated in Figure 1, has the advantage of being high performance and requiring little control plane logic to maintain, which helps ensure robustness.

Figure 1: Azure CNI networking

Network policy with Azure

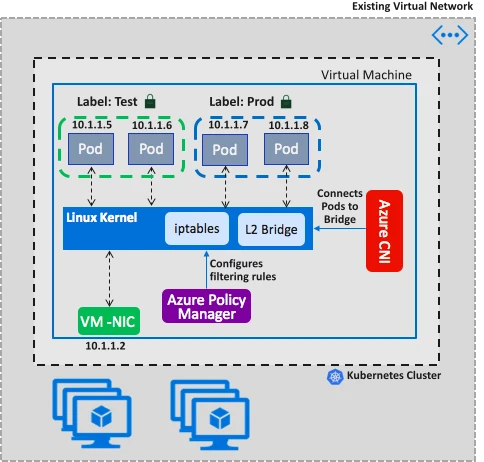

Kubernetes has a rich policy model for defining which containers are allowed to talk to which other ones, as defined in the Kubernetes Network Policy API. As we demonstrated recently at Ignite, we have now implemented this API and it works in conjunction with Azure CNI in AKS or in your own self-managed Kubernetes clusters in Azure, with or without AKS-Engine.

We translate the Kubernetes network policy model to a set of allowed IP address pairs, which are then programmed as rules in the Linux kernel’s iptables module. These rules are applied to all packets going through the bridge. This is shown in Figure 2.

Figure 2: Azure CNI with Azure Policy Manager
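The translation step described above can be sketched as follows, assuming a deliberately simplified policy shape: an equality-based `podSelector` plus a list of allowed source selectors, loosely mirroring a NetworkPolicy’s `spec.podSelector` and `ingress[].from[].podSelector`. The helper names, the pod data, and the use of the `FORWARD` chain are illustrative assumptions, the rules are printed rather than applied, and this is not a description of how Azure’s policy manager actually structures its rules. Note also that bridged traffic only passes through iptables when the kernel’s `br_netfilter` module is loaded and `net.bridge.bridge-nf-call-iptables` is set to 1.

```python
def matches(selector: dict[str, str], labels: dict[str, str]) -> bool:
    """Equality-based label selector: every selector key must match the pod's label."""
    return all(labels.get(key) == value for key, value in selector.items())


def allowed_pairs(pods: dict[str, tuple[str, dict[str, str]]], policy: dict):
    """Return (IPs of pods the policy selects, allowed (src_ip, dst_ip) pairs)."""
    targets = sorted(ip for ip, labels in pods.values() if matches(policy["podSelector"], labels))
    sources = sorted(ip for ip, labels in pods.values()
                     if any(matches(sel, labels) for sel in policy["ingressFrom"]))
    return targets, [(src, dst) for dst in targets for src in sources]


def render_iptables(targets: list[str], pairs: list[tuple[str, str]]) -> list[str]:
    """Render iptables-style rule strings (printed, not applied)."""
    # Let replies to already-accepted connections through.
    rules = ["-A FORWARD -m conntrack --ctstate ESTABLISHED,RELATED -j ACCEPT"]
    rules += [f"-A FORWARD -s {src} -d {dst} -j ACCEPT" for src, dst in pairs]
    # Pods selected by a policy are isolated: any other traffic to them is dropped.
    rules += [f"-A FORWARD -d {dst} -j DROP" for dst in targets]
    return rules


pods = {
    "frontend-1": ("10.240.0.4", {"app": "frontend"}),
    "backend-1": ("10.240.0.5", {"app": "backend"}),
    "batch-1": ("10.240.0.6", {"app": "batch"}),
}
policy = {"podSelector": {"app": "backend"}, "ingressFrom": [{"app": "frontend"}]}
targets, pairs = allowed_pairs(pods, policy)
for rule in render_iptables(targets, pairs):
    print(rule)
```

Only the frontend-to-backend pair is accepted; the batch pod can still reach the frontend (which no policy selects) but not the backend.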

Network policy with Calico

Kubernetes is also an open ecosystem, and Tigera’s Calico is well known as the first, and most widely deployed, implementation of Network Policy across cloud and on-premises environments. In addition to the base Kubernetes API, it has a powerful extended policy model that supports a range of features such as global network policies, network sets, more flexible rule specification, the ability to run the policy enforcement agent on non-Kubernetes nodes, and application layer policy via integration with Istio. Tigera also offers Tigera Secure, a commercial product built on Calico that adds a host of enterprise management, control, and compliance features.

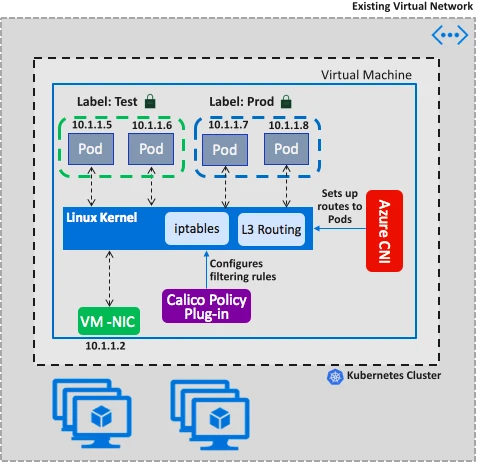

Given Kubernetes’ aforementioned modular networking model, you might think you could just deploy Calico for network policy along with Azure CNI, and it should all just work. Unfortunately, it is not this simple.

While Calico also uses iptables for policy, it does so in a subtly different way. It expects each container to be reachable through its own kernel route, and it enforces the policies that apply to each container on that container’s own virtual ethernet interface. This has two advantages: all container-to-container communication is handled identically (always a layer 3 routed hop, whether the containers are on the same host or on different hosts across the underlying network), and security policies are applied more narrowly, in the context of the specific container.
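As a rough illustration of per-interface enforcement, the sketch below gives each local container its own optional ingress and egress allow lists and checks a packet only against the sender’s egress rules and the receiver’s ingress rules. The `WorkloadEndpoint` name borrows Calico’s term for a container endpoint, but the class, the `check` function, the interface names, and the address-based rules are hypothetical simplifications, not Calico’s data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkloadEndpoint:
    ip: str
    iface: str                               # hypothetical veth name on the host
    ingress_from: Optional[set[str]] = None  # None = no ingress policy (allow all)
    egress_to: Optional[set[str]] = None     # None = no egress policy (allow all)


def check(src_ip: str, dst_ip: str,
          local: dict[str, WorkloadEndpoint]) -> tuple[bool, Optional[str]]:
    """Return (allowed, interface that dropped the packet or None).

    Only the sending and receiving containers' policies are consulted, each on
    that container's own interface. Endpoints on other hosts (not in `local`)
    have their policies enforced on their own host.
    """
    src = local.get(src_ip)
    if src is not None and src.egress_to is not None and dst_ip not in src.egress_to:
        return False, src.iface  # dropped as it leaves the sender's veth
    dst = local.get(dst_ip)
    if dst is not None and dst.ingress_from is not None and src_ip not in dst.ingress_from:
        return False, dst.iface  # dropped before it enters the receiver's veth
    return True, None


local = {
    "10.240.0.4": WorkloadEndpoint("10.240.0.4", "veth-a", egress_to={"10.240.0.5"}),
    "10.240.0.5": WorkloadEndpoint("10.240.0.5", "veth-b", ingress_from={"10.240.0.4"}),
}
print(check("10.240.0.4", "10.240.0.5", local))  # (True, None)
print(check("10.240.0.4", "10.240.1.9", local))  # (False, 'veth-a')
print(check("10.240.1.9", "10.240.0.5", local))  # (False, 'veth-b')
```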

To make Azure CNI compatible with the way Calico works, we added a new intra-node routing capability to the CNI, which we call ‘transparent’ mode. When configured to run in this mode, Azure CNI sets up local routes for containers instead of creating a virtual bridge device. This is shown in Figure 3.

Figure 3: Azure CNI with Calico Network Policy
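In transparent mode, the host’s routing table rather than a bridge decides where a packet goes. The sketch below builds one /32 route per container, prints the equivalent `ip route` commands, and performs the longest-prefix-match lookup the kernel would do. The container IPs and veth names are hypothetical, and the sketch omits everything else a real CNI plugin configures, such as the container side of each veth pair.

```python
import ipaddress


def transparent_mode_routes(containers: dict[str, str]) -> list[tuple[str, str]]:
    """One /32 host route per container (containers maps veth name -> IP)."""
    return [(f"{ip}/32", veth) for veth, ip in sorted(containers.items())]


def to_iproute2(routes: list[tuple[str, str]]) -> list[str]:
    """Equivalent iproute2 commands for on-link host routes."""
    return [f"ip route add {prefix} dev {dev} scope link" for prefix, dev in routes]


def lookup(routes: list[tuple[str, str]], dst: str) -> str:
    """Longest-prefix match: the most specific route containing dst wins."""
    addr = ipaddress.ip_address(dst)
    candidates = [(ipaddress.ip_network(prefix), dev) for prefix, dev in routes]
    matching = [c for c in candidates if addr in c[0]]
    if not matching:
        raise LookupError(f"no route to {dst}")
    return max(matching, key=lambda c: c[0].prefixlen)[1]


host_routes = transparent_mode_routes({"veth-a": "10.240.0.4", "veth-b": "10.240.0.5"})
for cmd in to_iproute2(host_routes):
    print(cmd)
table = host_routes + [("0.0.0.0/0", "eth0")]  # everything else leaves via the host NIC
print(lookup(table, "10.240.0.5"))  # veth-b: a container on this host
print(lookup(table, "10.240.0.9"))  # eth0: a container on another node
```

Both lookups are routed hops: a same-host destination resolves to a veth and a remote one to eth0, which is the uniformity Calico relies on when it attaches policy to each container’s interface.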

Onward and upstream

A Kubernetes cluster with the enhanced Azure CNI and Calico policies can be created using AKS-Engine by adding the following settings to the cluster definition file:

```json
{
  "properties": {
    "orchestratorProfile": {
      "orchestratorType": "Kubernetes",
      "kubernetesConfig": {
        "networkPolicy": "calico",
        "networkPlugin": "azure"
      }
    }
  }
}
```
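If you generate cluster definitions from scripts, the same two settings can be merged into an existing file programmatically. A minimal Python sketch follows; `enable_azure_cni_with_calico` and `update_cluster_definition` are hypothetical helpers, and they only touch the keys shown in the configuration above, leaving the rest of the definition as it was (apart from re-indenting the JSON).

```python
import json


def enable_azure_cni_with_calico(apimodel: dict) -> dict:
    """Set networkPlugin=azure and networkPolicy=calico, creating parent objects if needed."""
    profile = apimodel.setdefault("properties", {}).setdefault("orchestratorProfile", {})
    profile.setdefault("orchestratorType", "Kubernetes")
    k8s = profile.setdefault("kubernetesConfig", {})
    k8s["networkPlugin"] = "azure"
    k8s["networkPolicy"] = "calico"
    return apimodel


def update_cluster_definition(path: str) -> None:
    """Rewrite a cluster definition file in place with the settings above."""
    with open(path, encoding="utf-8") as f:
        model = json.load(f)
    enable_azure_cni_with_calico(model)
    with open(path, "w", encoding="utf-8") as f:
        json.dump(model, f, indent=2)


model = {"properties": {"orchestratorProfile": {"orchestratorType": "Kubernetes"}}}
config = enable_azure_cni_with_calico(model)["properties"]["orchestratorProfile"]["kubernetesConfig"]
print(json.dumps(config))  # {"networkPlugin": "azure", "networkPolicy": "calico"}
```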

These options have also been integrated into AKS itself, so you can provision a cluster with Azure networking and Calico network policy simply by specifying `--network-plugin azure --network-policy calico` when you create the cluster with `az aks create`.

Find more information by visiting our documentation, “Azure Kubernetes network policies overview.”