Visual data ops for Apache Kafka on Azure HDInsight, powered by Lenses

This blog was written in collaboration with Andrew Stevenson, CTO at Lenses.

Apache Kafka is one of the most popular open source streaming platforms today. However, deploying and running Kafka remains a challenge for most organizations. Azure HDInsight addresses this challenge by providing:

- Ease of use: Quickly deploy Kafka clusters in the cloud and easily integrate them with other Azure services.

- Higher scale and lower total cost of operations (TCO): With managed disks, compute and storage are decoupled, so a single cluster can hold hundreds of terabytes of data.

- Enhanced security: Bring your own key (BYOK) encryption, custom virtual networks, and topic-level security with Apache Ranger.

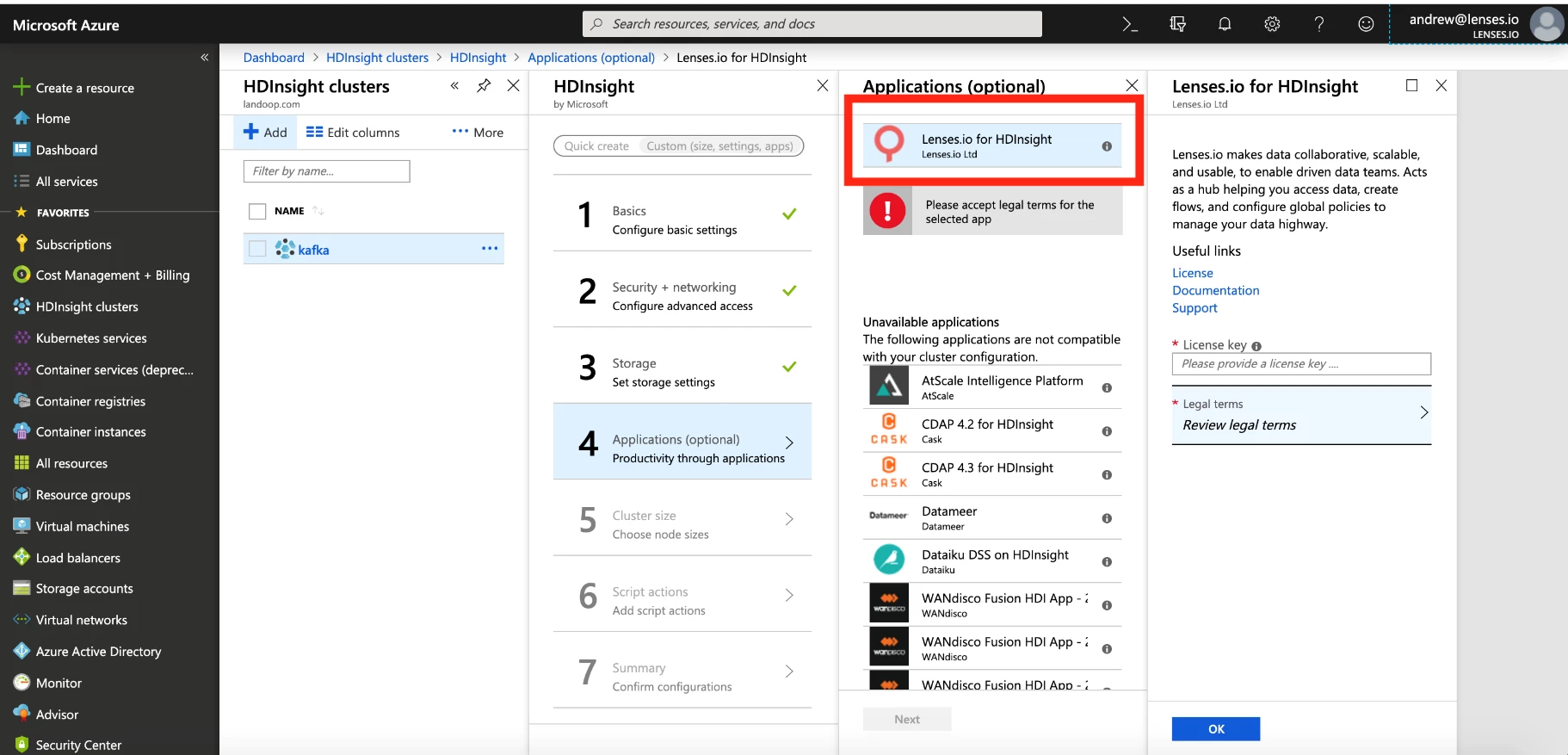

But that’s not all. You can now manage your streaming data operations, from visibility to monitoring, with Lenses, an overlay platform that is now generally available in the Azure HDInsight application ecosystem and can be deployed right from within the Azure portal!

With Lenses, customers can now:

- Easily look inside Kafka topics

- Inspect and modify streaming data using SQL

- Visualize application landscapes

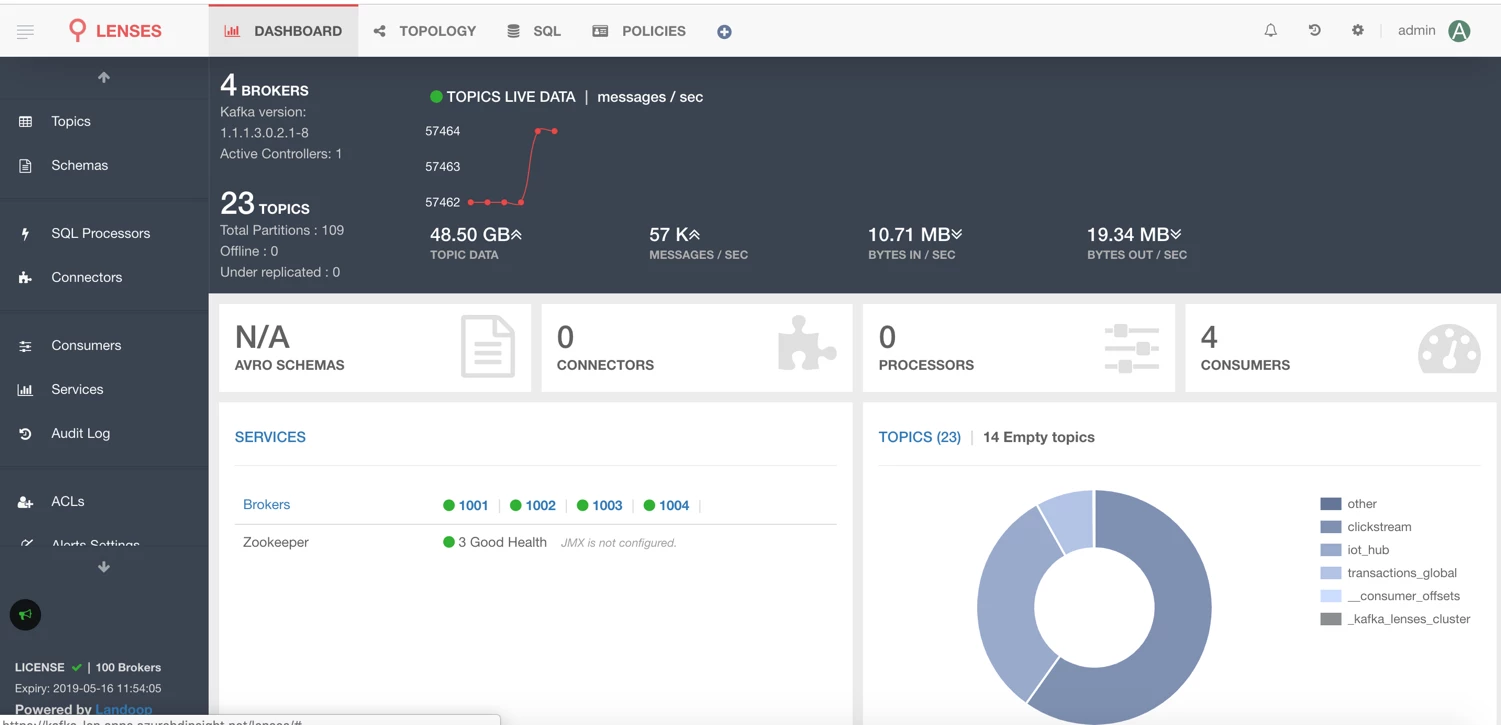

Look inside Kafka topics

A production Kafka cluster can have thousands of topics. Imagine you want a high-level view of all of them. You may want to understand how the topics are configured, such as their replication factor or partition distribution. Or you may want to look deeper inside a specific topic, investigating its message throughput and its leader broker.

While many of these insights are available through the Kafka CLI, Lenses greatly simplifies the experience by bringing the key insights for topics and brokers together in a simple, intuitive visual interface. With Lenses, inspecting your Kafka cluster is effortless.
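To make the CLI comparison concrete, here is a minimal sketch of the kind of roll-up Lenses presents visually, built from the text printed by `kafka-topics.sh --describe` (connection flags omitted). It assumes the tab-separated `Key: Value` layout that the tool prints; field spacing varies across Kafka versions, so the parser splits on the first colon and strips whitespace. The sample output, topic names, and broker IDs are hypothetical.

```python
from collections import Counter

# Hypothetical output in the shape printed by `kafka-topics.sh --describe`:
# one summary line per topic, then one tab-indented line per partition.
SAMPLE = """\
Topic: orders\tPartitionCount: 3\tReplicationFactor: 2\tConfigs: retention.ms=604800000
\tTopic: orders\tPartition: 0\tLeader: 1\tReplicas: 1,2\tIsr: 1,2
\tTopic: orders\tPartition: 1\tLeader: 2\tReplicas: 2,3\tIsr: 2
\tTopic: orders\tPartition: 2\tLeader: 1\tReplicas: 3,1\tIsr: 3,1
Topic: payments\tPartitionCount: 1\tReplicationFactor: 2\tConfigs:
\tTopic: payments\tPartition: 0\tLeader: 3\tReplicas: 3,1\tIsr: 3,1
"""


def parse_fields(line):
    """Split a describe line into a {key: value} dict (assumes tab-separated fields)."""
    fields = {}
    for part in line.strip().split("\t"):
        key, _, value = part.partition(":")  # split on the first colon only
        fields[key.strip()] = value.strip()
    return fields


def summarize(describe_output):
    """Replication factor per topic, leader count per broker, under-replicated partitions."""
    replication = {}
    leaders = Counter()
    under_replicated = []
    for line in describe_output.splitlines():
        if not line.strip():
            continue
        f = parse_fields(line)
        if "Partition" in f:  # per-partition line
            leaders[int(f["Leader"])] += 1
            replicas = [b for b in f["Replicas"].split(",") if b]
            isr = [b for b in f["Isr"].split(",") if b]
            if len(isr) < len(replicas):  # some replicas have fallen out of sync
                under_replicated.append((f["Topic"], int(f["Partition"])))
        else:  # topic summary line
            replication[f["Topic"]] = int(f["ReplicationFactor"])
    return {
        "replication": replication,
        "leaders_per_broker": dict(leaders),
        "under_replicated": under_replicated,
    }


if __name__ == "__main__":
    print(summarize(SAMPLE))
```

Even this small roll-up takes some parsing; across thousands of topics, a unified view saves that scripting work.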

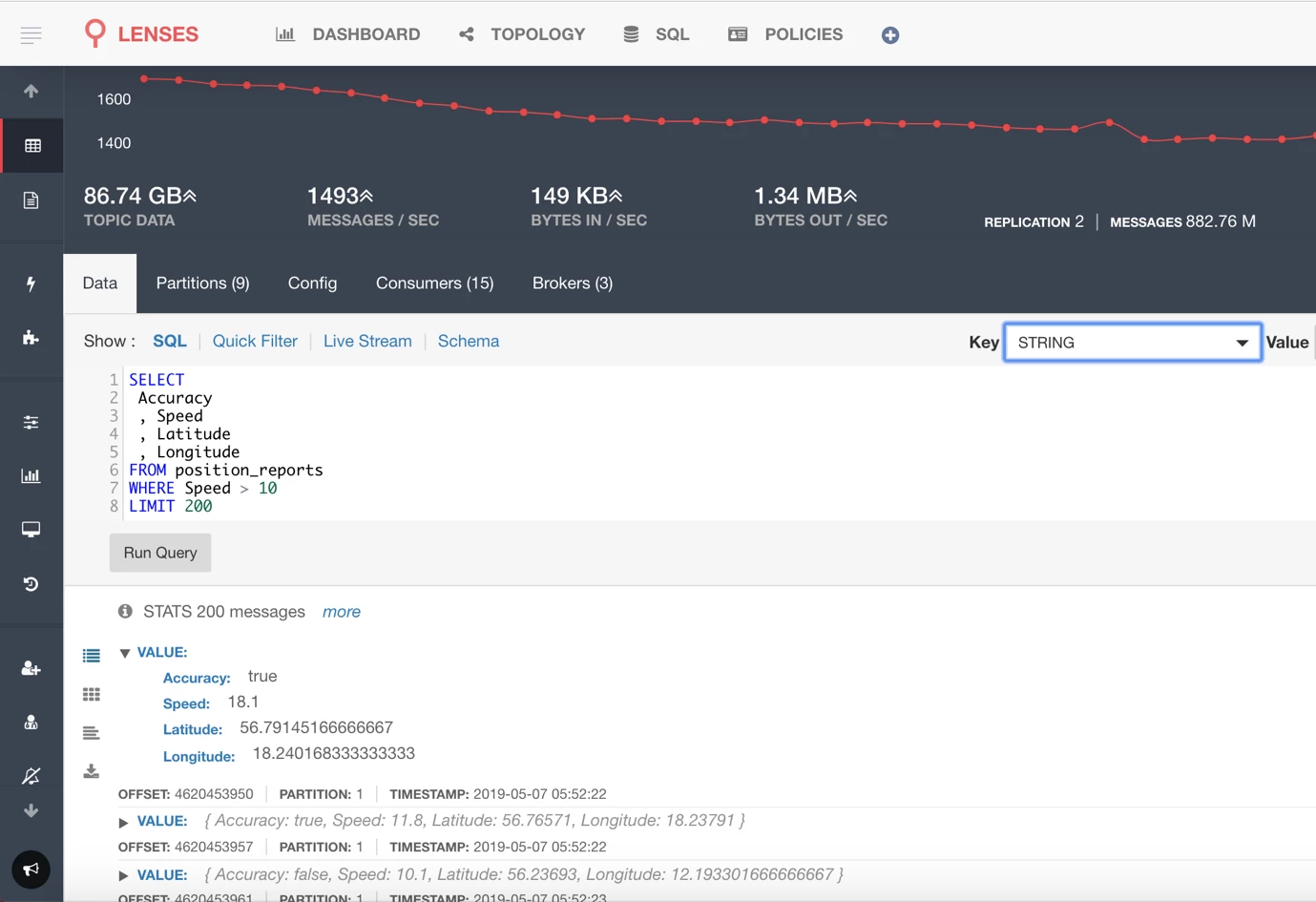

Inspect and modify streaming data using SQL

What if you want to inspect the data within a Kafka topic and view the messages sent within a certain time frame? Or what if you want to process a subset of that stream and write it back to another Kafka topic? You can do both with SQL queries and SQL Processors in the Lenses UI. SQL queries let you validate your streaming data and unblock your client organizations faster.
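In Lenses these two steps are SQL statements. The sketch below is not Lenses SQL; it is a plain-Python model of what such queries compute, with Kafka topics represented as in-memory lists. The `Record` type, the `orders` and `large-orders` topic names, and the `amount` field are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Record:
    """Hypothetical stand-in for a Kafka record (key, value, timestamp)."""
    key: str
    value: dict
    timestamp_ms: int  # Kafka record timestamps are milliseconds since the epoch


def in_time_range(records, start, end):
    """Return the records whose timestamp falls in [start, end)."""
    start_ms = int(start.timestamp() * 1000)
    end_ms = int(end.timestamp() * 1000)
    return [r for r in records if start_ms <= r.timestamp_ms < end_ms]


def route_subset(records, predicate, output_topic, topics):
    """Append records matching `predicate` to `output_topic` (topics are lists here)."""
    out = topics.setdefault(output_topic, [])
    out.extend(r for r in records if predicate(r))
    return out


if __name__ == "__main__":
    base = datetime(2020, 1, 1, tzinfo=timezone.utc)
    base_ms = int(base.timestamp() * 1000)
    topics = {
        "orders": [
            Record("a", {"amount": 50}, base_ms + 1_000),
            Record("b", {"amount": 250}, base_ms + 30_000),
            Record("c", {"amount": 900}, base_ms + 120_000),
        ]
    }
    # Step 1: inspect only the messages from the first minute.
    window = in_time_range(topics["orders"], base, base + timedelta(minutes=1))
    print([r.key for r in window])
    # Step 2: write the large orders from that window to another topic.
    route_subset(window, lambda r: r.value["amount"] > 100, "large-orders", topics)
    print([r.key for r in topics["large-orders"]])
```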

SQL Processors can be deployed and monitored to perform real-time transformations and analytics, with familiar SQL features such as joins and aggregations. You can also configure Lenses to scale out processing with Azure Kubernetes Service (AKS).
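A SQL Processor expresses joins and aggregations declaratively. As a rough model of what one might compute, the sketch below joins an orders stream against a customer-to-country lookup table (inner-join semantics) and sums order amounts per country over one-minute tumbling windows. The windowing choice, field layout, and data are assumptions for illustration, not Lenses syntax or behavior.

```python
from collections import defaultdict


def tumbling_window_start(ts_ms, size_ms):
    """Start of the fixed-size, non-overlapping window that contains ts_ms."""
    return ts_ms - (ts_ms % size_ms)


def enrich_and_aggregate(orders, customers, window_ms):
    """Inner-join orders with a customer->country table, then sum amounts
    per (window start, country)."""
    totals = defaultdict(int)
    for customer_id, amount, ts_ms in orders:
        country = customers.get(customer_id)
        if country is None:  # inner join: drop orders with no matching customer
            continue
        totals[(tumbling_window_start(ts_ms, window_ms), country)] += amount
    return dict(totals)


if __name__ == "__main__":
    customers = {"c1": "UK", "c2": "NL"}
    # (customer_id, amount, timestamp_ms); "c9" has no customer record.
    orders = [("c1", 10, 1_000), ("c2", 5, 2_000), ("c1", 7, 61_000), ("c9", 3, 3_000)]
    print(enrich_and_aggregate(orders, customers, window_ms=60_000))
```

A real processor runs this continuously over unbounded input, which is where scaling out (for example on AKS) comes in.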

Visualize application landscapes

At the end of the day, you’re building a solution that delivers business impact. That solution will be composed of various microservices, data producers, and analytical engines. Lenses gives you insight into your application landscape, describing the running processes and the lineage of the data across your platform.

In the Topology view, running applications are added dynamically and recovered at startup, along with the topics they use. For building end-to-end solutions, Lenses also provides an easy way to deploy connectors from the open source Stream Reactor project, which contains a large collection of Kafka Connect connectors.
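A topology like this is essentially a directed graph of applications and topics, and lineage is a reachability question on that graph. The minimal sketch below assumes each application is described by the topics it reads and writes; the application and topic names are hypothetical, and app and topic names are assumed not to collide. It answers "where does the data reaching this node come from?" with a breadth-first search over reversed edges.

```python
from collections import deque

# Hypothetical application descriptors: the topics each app reads and writes.
APPS = {
    "orders-service": {"inputs": [], "outputs": ["orders"]},
    "fraud-processor": {"inputs": ["orders"], "outputs": ["flagged-orders"]},
    "sink-connector": {"inputs": ["flagged-orders"], "outputs": []},
}


def build_edges(apps):
    """Directed edges topic -> consuming app and app -> produced topic."""
    edges = {}
    for app, io in apps.items():
        for topic in io["inputs"]:
            edges.setdefault(topic, set()).add(app)
        for topic in io["outputs"]:
            edges.setdefault(app, set()).add(topic)
    return edges


def upstream(node, edges):
    """Every app or topic that can reach `node` (BFS over reversed edges)."""
    reverse = {}
    for src, dsts in edges.items():
        for dst in dsts:
            reverse.setdefault(dst, set()).add(src)
    seen, queue = set(), deque([node])
    while queue:
        current = queue.popleft()
        for parent in reverse.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen


if __name__ == "__main__":
    edges = build_edges(APPS)
    print(sorted(upstream("sink-connector", edges)))
```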

Check out the following resources to get started with Lenses on Azure HDInsight: