
Announcing the Azure Functions Premium plan for enterprise serverless workloads


We are very excited to announce the Azure Functions Premium plan in preview, our newest Functions hosting model! This plan enables a suite of long-requested scaling and connectivity options without compromising on event-based scale. With the Premium plan you can use pre-warmed instances to run your app with no delay after being idle, run on more powerful instances, and connect to VNETs, all while automatically scaling in response to load.

Huge thanks to everyone who participated in our private preview! Symantec Corporation and Volpara Solutions are just a few of the companies that will benefit from the new features of the Premium plan.

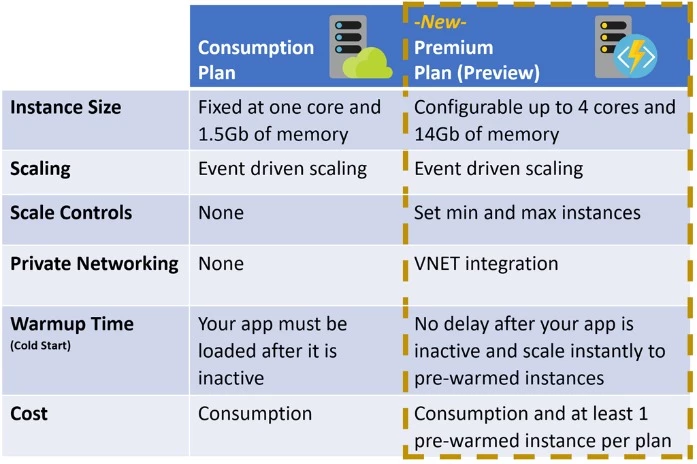

See below for a comparison of how the Premium plan improves on our existing dynamically scaling plan, the Consumption plan.

Advanced scale controls enable customized deployments

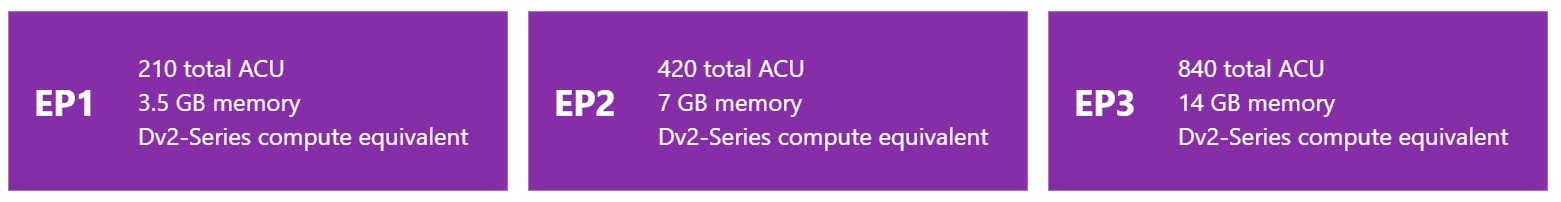

Instance size can now be specified with the Premium plan. You can select up to four D-series cores and 14 GB of memory. These instances are substantially more powerful than the A-series instances available to functions using the Consumption plan, allowing you to run much more CPU- or memory-intensive workloads in individual invocations.

Available Instance sizes

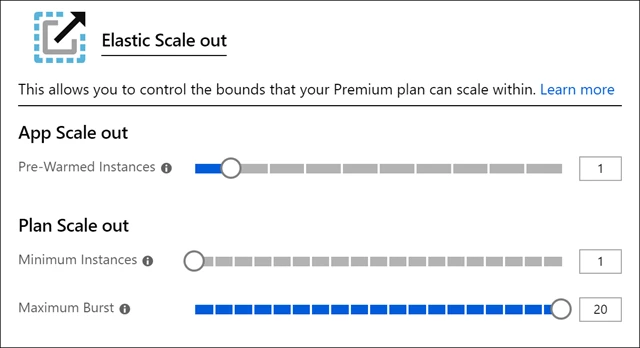

Maximum Instances can now also be specified with the Premium plan. This is one of the most highly requested features and allows you to limit the maximum scale out of your Premium plan. Restricting max scale out can protect downstream resources from being overwhelmed by your functions and allows you to predict your maximum possible bill each month.
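To make the billing point concrete, here is a minimal sketch of the worst-case arithmetic: if every allowed instance ran for the whole month, the compute bill could not exceed the instance cap times the per-instance rate times the hours in the month. The function name and the `instance_hour_rate` value are hypothetical and used for illustration only; they are not actual Premium plan prices, and real billing may be metered differently.

```python
HOURS_PER_MONTH = 730  # average month length in hours (8760 / 12)


def max_monthly_cost(max_instances: int,
                     instance_hour_rate: float,
                     hours: float = HOURS_PER_MONTH) -> float:
    """Upper bound on compute cost if all allowed instances ran all month.

    instance_hour_rate is a hypothetical price per instance-hour,
    not a published Azure rate.
    """
    if max_instances < 0 or instance_hour_rate < 0 or hours < 0:
        raise ValueError("inputs must be non-negative")
    return max_instances * instance_hour_rate * hours


# Example with a made-up rate of 0.50 per instance-hour and a cap of 10.
ceiling = max_monthly_cost(max_instances=10, instance_hour_rate=0.50)
print(ceiling)
```

Lowering the instance cap lowers this ceiling proportionally, which is what makes the maximum bill predictable.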

Minimum Instances can be specified in the Premium plan to allow you to pre-scale your application ahead of predicted demand. If you suspect an email campaign, sale, or any time-gated event will cause your app to scale faster than it can replenish pre-warmed instances, you can increase your minimum instances to pre-load capacity.

We’ve built a sample Durable Function that will move any function between the Consumption and Premium plan with pre-warmed instances on a schedule, allowing you to optimize for the best cost.

Connect Functions to VNET

The Premium plan allows dynamically scaling functions to connect to a VNET and securely access resources in a private network. This feature was previously only available by running Functions in an App Service Plan or App Service Environment, and is now available in a dynamically scaling model by using the Premium plan. Read more about VNET integration.

Pre-warmed Instances let you avoid cold start

With the Functions Premium plan we are offering a solution to the delay when calling a serverless application for the first time: pre-warmed instances. This delay is commonly referred to as cold start, and it’s one of the most common problems amongst serverless developers. For more details on what cold start is and why it happens, please refer to the blog post, “Understanding serverless cold start.”

In the Premium plan, we offer you the ability to specify a number of pre-warmed instances that are kept warm with your code ready to execute. When your application needs to scale, it first uses a pre-warmed instance with no cold start. Your app immediately pre-warms another instance in the background to replenish the buffer of pre-warmed instances. This model allows you to avoid any delay on the execution for the first request to an idle app, and also at each scaling point.

Today we only allow one pre-warmed instance per site, but we expect to open that up to higher numbers in the coming weeks.

Keeping a pool of pre-warmed instances to scale into is one of the core advantages beyond existing workarounds. Today in the Consumption plan many developers work around cold start by implementing a “pinger” to constantly ping their application to keep it warm. While this does work for the first request, apps with pingers will still experience cold start as they scale out, since the new instances pulled to run the application won’t be ready to execute the code immediately. We always keep the number of pre-warmed instances you’ve requested ready as a buffer, so you’ll never see cold-start delays so long as you’re scaling slower than we can warm up instances.
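The buffer behavior described above can be illustrated with a toy simulation. This is a simplified model under stated assumptions, not the platform's actual scheduler: time is counted in abstract ticks, each scale-out event needs exactly one new instance, and a replacement takes a fixed `warmup_ticks` to become ready. The function and parameter names are hypothetical.

```python
from collections import deque


def simulate_scale_out(scale_times, pre_warmed=1, warmup_ticks=3):
    """Label each scale-out event as a 'warm' or 'cold' start.

    scale_times: ticks at which the app needs one more instance.
    pre_warmed: size of the pre-warmed buffer (toy parameter).
    warmup_ticks: assumed time to warm a replacement instance.
    """
    ready = pre_warmed   # instances warmed and waiting in the buffer
    pending = deque()    # ticks at which replacements finish warming
    outcomes = []
    for t in sorted(scale_times):
        # Replacements that finished warming by tick t rejoin the buffer.
        while pending and pending[0] <= t:
            pending.popleft()
            ready += 1
        if ready > 0:
            # Use a pre-warmed instance and start warming a replacement.
            ready -= 1
            pending.append(t + warmup_ticks)
            outcomes.append("warm")
        else:
            # Buffer is empty: this instance must start cold.
            outcomes.append("cold")
    return outcomes


# Scaling slower than the warm-up time: every scale-out is warm.
print(simulate_scale_out([0, 5, 10]))  # ['warm', 'warm', 'warm']
# Scaling faster than the buffer can be replenished: cold starts appear.
print(simulate_scale_out([0, 1, 2]))   # ['warm', 'cold', 'cold']
```

In this model, an empty buffer (`pre_warmed=0`) makes every scale-out cold regardless of timing, which mirrors the pinger limitation: keeping one instance alive does nothing for the new instances added during scale out.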

Try it out and learn more!

The Azure Functions Premium plan is available in preview today to try out! Here’s what you can do to learn more about it:

- Check out how to get started with the Premium plan.

- Learn how to switch functions between Consumption and Premium plans.

- Sign up for an Azure free account if you don’t have one yet, and try out the Azure Functions Premium plan.

- Troubleshoot with the community and file any issues you run into on our GitHub repo.

- Learn more about the Premium plan and other enterprise serverless features in the Mechanics Show below: