
MLOps Blog Series Part 1: The art of testing machine learning systems using MLOps


Testing is an important part of the life cycle of developing a machine learning system and helps ensure high-quality operations. We use tests to confirm that something functions as it should. Once tests are created, we can run them automatically whenever we make a change to our system and continue to improve them over time. It is good practice to encourage the writing of tests and to identify sources of mistakes as early as possible in the development cycle, which prevents rising downstream costs and lost time.

In this blog, we will look at testing machine learning systems from a Machine Learning Operations (MLOps) perspective and learn about best practices and a testing framework that you can use to build robust, scalable, and secure machine learning systems. Before we delve into testing, let’s see what MLOps is and its value to developing machine learning systems.

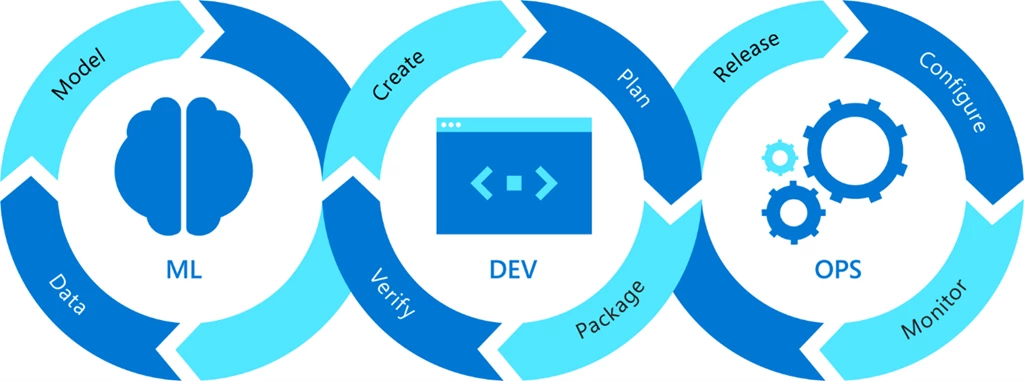

Figure 1: MLOps = DevOps + Machine Learning.

Software development is interdisciplinary and is evolving to facilitate machine learning. MLOps is a process for fusing machine learning with software development by coupling machine learning and DevOps. MLOps aims to build, deploy, and maintain machine learning models in production reliably and efficiently. DevOps drives machine learning operations. Let’s look at how that works in practice. Below is an MLOps workflow illustration of how machine learning is enabled by DevOps to orchestrate robust, scalable, and secure machine learning solutions.

Figure 2: MLOps workflow.

The MLOps workflow is modular, flexible, and can be used to build proofs of concept or operationalize machine learning solutions in any business or industry. This workflow is segmented into three modules: Build, Deploy, and Monitor. The Build module is used to develop machine learning models using a machine learning pipeline. The Deploy module is used for deploying models in development, test, and production environments. The Monitor module is used to monitor, analyze, and govern the machine learning system to achieve maximum business value. Tests are performed primarily in two modules: the Build and Deploy modules. In the Build module, data is ingested for training, the model is trained using the ingested data, and then it is tested in the model testing step.
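The Build flow just described (ingest data, split off test data, train, then test the model) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the workflow's actual implementation: the synthetic data, the threshold "model", and the function names `ingest`, `split`, `train`, and `evaluate_model` are all hypothetical stand-ins.

```python
import random
from statistics import mean


def ingest(n=200, seed=0):
    """Hypothetical data ingestion: synthetic (feature, label) pairs."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        x = rng.uniform(0.0, 10.0)
        rows.append((x, 1 if x > 5.0 else 0))
    return rows


def split(rows, test_fraction=0.25, seed=0):
    """Hold out a separate test set that is never used for training."""
    rng = random.Random(seed)
    shuffled = rows[:]
    rng.shuffle(shuffled)
    cut = int(len(shuffled) * (1 - test_fraction))
    return shuffled[:cut], shuffled[cut:]


def train(train_rows):
    """Toy 'model': a threshold midway between the two class means."""
    positives = [x for x, y in train_rows if y == 1]
    negatives = [x for x, y in train_rows if y == 0]
    return (mean(positives) + mean(negatives)) / 2.0


def predict(threshold, x):
    return 1 if x > threshold else 0


def evaluate_model(threshold, test_rows):
    """Model testing step: score the held-out data and return a report."""
    correct = sum(predict(threshold, x) == y for x, y in test_rows)
    return {"n_test": len(test_rows), "accuracy": correct / len(test_rows)}


train_rows, test_rows = split(ingest())
model = train(train_rows)
report = evaluate_model(model, test_rows)
print(report)
```

In a real pipeline the metric would be chosen per use case, and the report would typically be stored alongside the versioned test data so that later runs can be compared against it.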

1. Model testing: In this step, we evaluate the performance of the trained model on a separate set of data points called test data (which was split and versioned in the data ingestion step). The inference of the trained model is evaluated according to metrics selected for the use case. The output of this step is a report on the trained model’s performance.

In the Deploy module, we deploy the trained models to dev, test, and production environments, in that order. First, we start with application testing (done in dev and test environments).

2. Application testing: Before deploying a machine learning model to production, it is vital to test the robustness, scalability, and security of the model. Hence, we have the “application testing” phase, where we rigorously test all the trained models and the application in a production-like environment called a test, or staging, environment. In this phase, we may perform tests such as A/B tests, integration tests, user acceptance tests (UAT), shadow testing, or load testing.
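As one illustration of the application testing phase, shadow testing can be sketched as follows: the candidate model receives the same requests as the model currently serving traffic, but only the live model's outputs are returned, and disagreements are counted for review. This is a hedged sketch, assuming two hypothetical in-process functions (`production_model` and `candidate_model`) in place of real deployed services.

```python
def production_model(x):
    """Hypothetical model currently serving traffic."""
    return 1 if x > 5.0 else 0


def candidate_model(x):
    """Hypothetical newly trained model under evaluation."""
    return 1 if x > 5.5 else 0


def shadow_test(requests, live, shadow):
    """Serve the live model's output; run the shadow model only for comparison."""
    served = []
    disagreements = 0
    for x in requests:
        live_out = live(x)
        shadow_out = shadow(x)  # recorded for comparison, never served
        served.append(live_out)
        if shadow_out != live_out:
            disagreements += 1
    return served, disagreements / len(requests)


requests = [1.0, 4.9, 5.2, 5.4, 6.0, 9.0, 3.3, 5.6]
served, disagreement_rate = shadow_test(requests, production_model, candidate_model)
print(f"disagreement rate: {disagreement_rate:.2f}")
```

The design point is that users are never exposed to the candidate model's predictions; a high disagreement rate is a signal to investigate before promoting the candidate.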

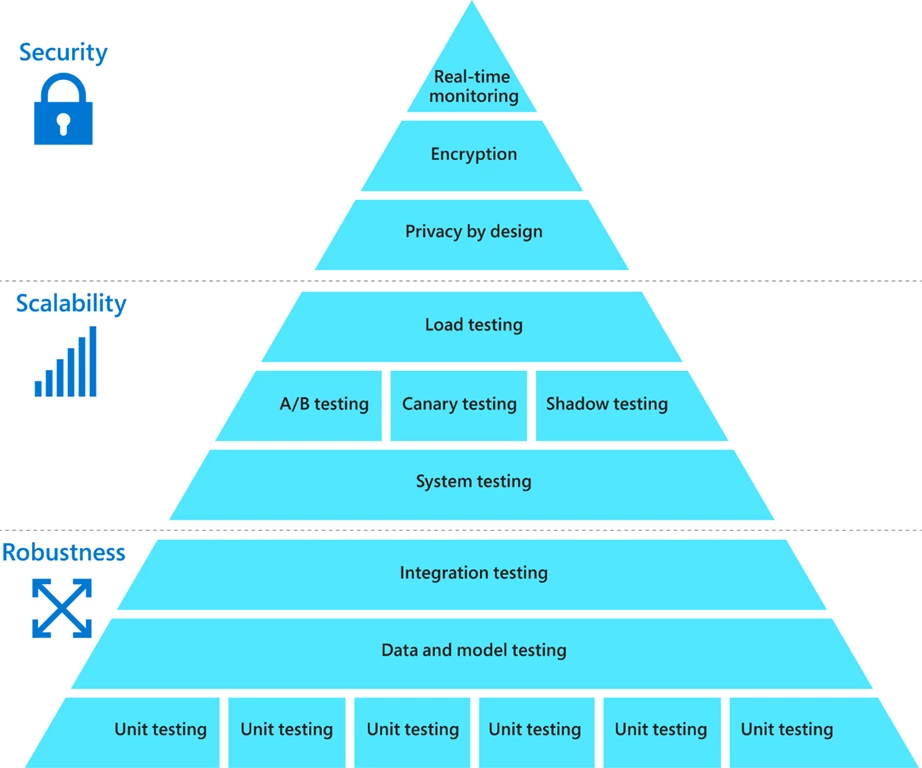

Below is the framework for testing that reflects the hierarchy of needs for testing machine learning systems.

Figure 3: Hierarchy of needs for testing machine learning systems.

One way to think about machine learning systems is to consider Maslow’s hierarchy of needs. The lower levels of the pyramid reflect “survival,” and the true human potential is unleashed only after basic survival and emotional needs are met. Likewise, tests that inspect robustness, scalability, and security ensure that the system not only performs at the basic level but reaches its true potential. One thing to note is that there are many additional forms of functional and nonfunctional testing, including smoke tests (rapid health checks) and performance tests (such as stress tests), but they may all be classified as system tests.
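A smoke test of the kind mentioned above can be very small. The sketch below assumes a hypothetical `score` function standing in for a deployed scoring endpoint, and an assumed response shape with a `prediction` key; it simply checks that one known-good request succeeds, returns the expected field, and finishes within a latency budget.

```python
import time


def score(payload):
    """Hypothetical scoring function standing in for a deployed endpoint."""
    return {"prediction": 1 if payload["x"] > 5.0 else 0}


def smoke_test(score_fn, sample, max_seconds=1.0):
    """Rapid health check: one request, valid response shape, within budget."""
    start = time.perf_counter()
    try:
        result = score_fn(sample)
    except Exception as exc:
        return {"healthy": False, "reason": f"raised {type(exc).__name__}"}
    elapsed = time.perf_counter() - start

    if not isinstance(result, dict) or "prediction" not in result:
        return {"healthy": False, "reason": "missing 'prediction' field"}
    if elapsed > max_seconds:
        return {"healthy": False, "reason": "too slow"}
    return {"healthy": True, "reason": "ok"}


print(smoke_test(score, {"x": 7.0}))
print(smoke_test(score, {}))  # malformed request: reported as unhealthy
```

A check like this is cheap enough to run after every deployment, before heavier performance or stress tests are started.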

Over the next three posts, we’ll cover each of the three broad levels of testing, starting with robustness and then moving on to scalability and finally, security.

For further details and to learn about hands-on implementation, check out the Engineering MLOps book, or learn how to build and deploy a model in Microsoft Azure Machine Learning using MLOps in the Get Time to Value with MLOps Best Practices on-demand webinar. Also, check out our recently announced blog about solution accelerators (MLOps v2) to simplify your MLOps workstream in Azure Machine Learning.

Source for images: Engineering MLOps book