Incrementally copy new files by LastModifiedDate with Azure Data Factory

Azure Data Factory (ADF) is the fully managed data integration service for analytics workloads in Azure. Using ADF, users can load the lake from more than 80 data sources on-premises and in the cloud, use a rich set of transform activities to prep, cleanse, and process the data with Azure analytics engines, and land the curated data in a data warehouse for analytics and insights.

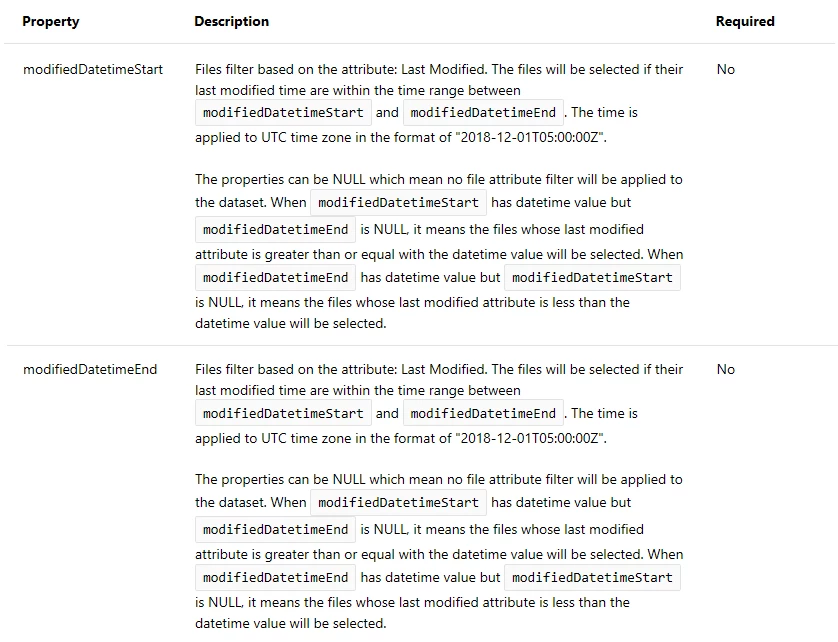

When you start to build an end-to-end data integration flow, the first challenge is extracting data from different data stores. Incremental (or delta) loading after an initial full load is widely used at this stage. ADF now provides a capability to incrementally copy only new or changed files from a file-based store, selected by LastModifiedDate. With this feature, you do not need to partition the data by time-based folder or file name. New or changed files are automatically selected by their LastModifiedDate metadata and copied to the destination store.
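To make the selection rule concrete, here is a minimal Python sketch of the idea, not ADF's actual implementation. It assumes a half-open time window (start inclusive, end exclusive), and `FileInfo`, `select_new_or_changed`, `utc`, and the sample file paths are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable, List


@dataclass(frozen=True)
class FileInfo:
    """Hypothetical stand-in for one entry in a file-store listing."""
    path: str
    last_modified: datetime


def select_new_or_changed(files: Iterable[FileInfo],
                          window_start: datetime,
                          window_end: datetime) -> List[FileInfo]:
    """Keep files whose last-modified time falls in [window_start, window_end)."""
    return [f for f in files if window_start <= f.last_modified < window_end]


def utc(*args: int) -> datetime:
    """Build a timezone-aware UTC datetime from year, month, day, ... parts."""
    return datetime(*args, tzinfo=timezone.utc)


# Illustrative listing: no time-based folders or file names are needed,
# only the last-modified metadata of each file.
listing = [
    FileInfo("sales/a.csv", utc(2019, 2, 28, 23, 59)),  # before the window
    FileInfo("sales/b.csv", utc(2019, 3, 1, 0, 0)),     # exactly at window start
    FileInfo("sales/c.csv", utc(2019, 3, 1, 12, 30)),   # inside the window
    FileInfo("sales/d.csv", utc(2019, 3, 2, 0, 0)),     # exactly at window end
]

# Files modified during 1 March 2019 (UTC) are the ones to copy in this run.
to_copy = select_new_or_changed(listing, utc(2019, 3, 1), utc(2019, 3, 2))
```

Running one such window per schedule interval, with each window starting where the previous one ended, is what lets every file be picked up once without reorganizing the source folders.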

The feature is available when loading data from Azure Blob Storage, Azure Data Lake Storage Gen1, Azure Data Lake Storage Gen2, Amazon S3, File System, SFTP, and HDFS.

The resources for this feature are as follows:

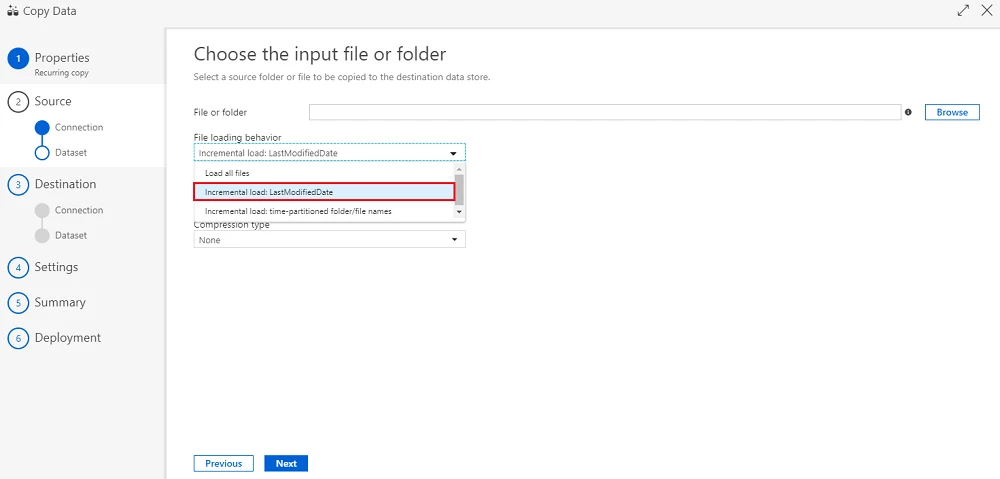

1. You can visit our tutorial, “Incrementally copy new and changed files based on LastModifiedDate by using the Copy Data tool,” to build your first pipeline that incrementally copies only new and changed files, based on their LastModifiedDate, from Azure Blob storage to Azure Blob storage by using the Copy Data tool.

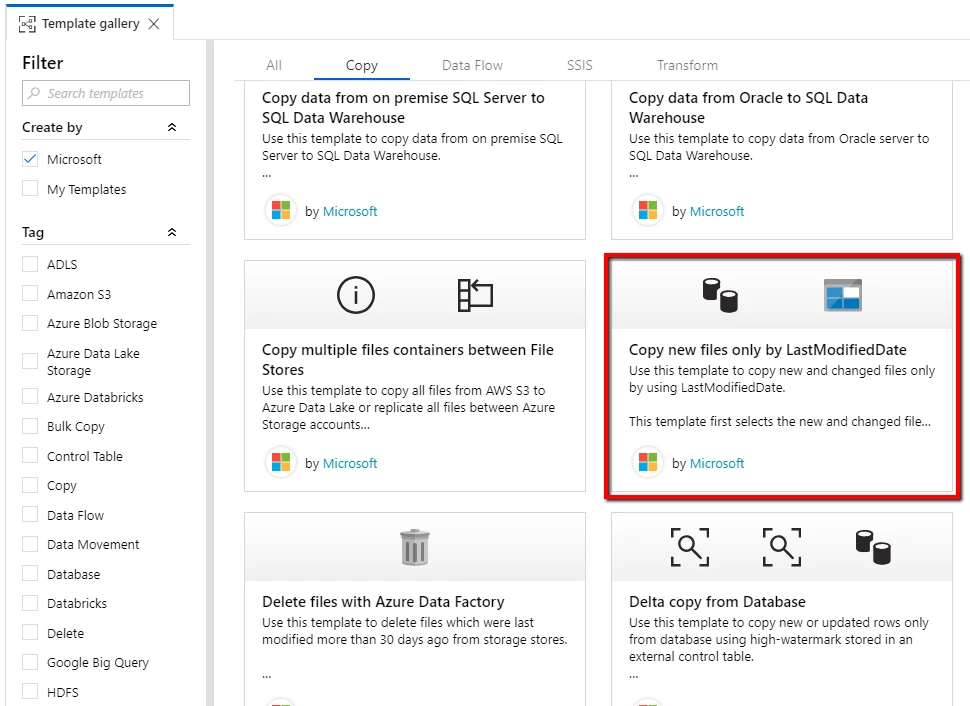

2. You can also use our template from the template gallery, “Copy new and changed files by LastModifiedDate with Azure Data Factory,” to shorten your time to solution while keeping enough flexibility to build a pipeline that incrementally copies only new and changed files based on their LastModifiedDate.

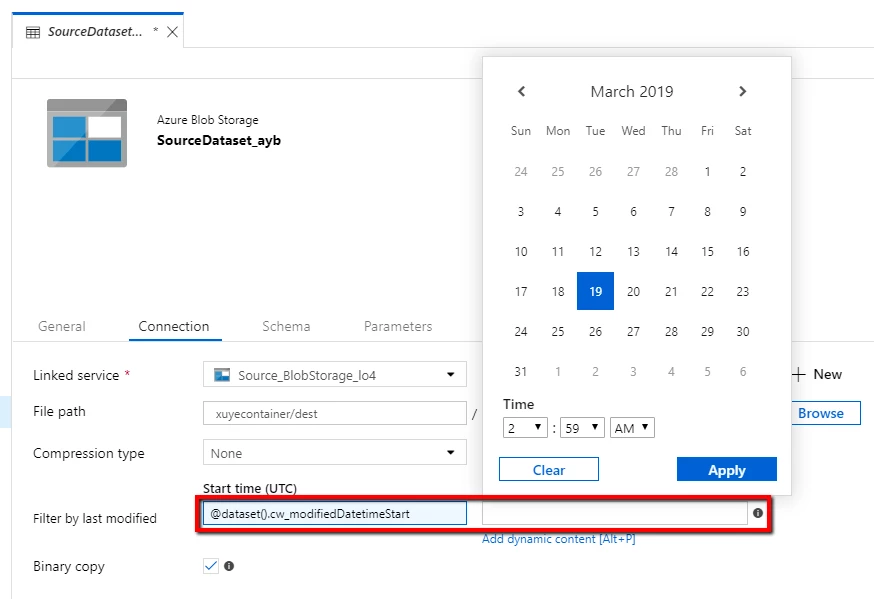

3. You can also start from scratch and configure the feature in the ADF UI.

4. You can write code to use the feature through the ADF SDK.
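If you take the code route, the part that matters is the last-modified filter on the Copy activity source. The sketch below builds that fragment as a plain Python dictionary rather than calling the SDK. It assumes the property names `modifiedDatetimeStart` and `modifiedDatetimeEnd` under `storeSettings` and the `AzureBlobStorageReadSettings` and `BinarySource` type names, as we understand them from the Blob storage connector documentation, so please verify them against the current docs for your store. The helper name `build_copy_source` and the one-day window are hypothetical.

```python
import json
from datetime import datetime, timedelta, timezone


def build_copy_source(window_start: datetime, window_end: datetime) -> dict:
    """Build a Copy activity source fragment filtered by last-modified time.

    Both arguments must be timezone-aware; they are rendered as UTC strings.
    Property and type names are assumptions to check against the connector docs.
    """
    fmt = "%Y-%m-%dT%H:%M:%SZ"
    return {
        "type": "BinarySource",
        "storeSettings": {
            "type": "AzureBlobStorageReadSettings",
            "recursive": True,
            # Only files modified inside this window are meant to be selected.
            "modifiedDatetimeStart": window_start.astimezone(timezone.utc).strftime(fmt),
            "modifiedDatetimeEnd": window_end.astimezone(timezone.utc).strftime(fmt),
        },
    }


# Hypothetical daily run: copy whatever changed in the 24 hours before window_end.
window_end = datetime(2019, 3, 2, tzinfo=timezone.utc)
source = build_copy_source(window_end - timedelta(days=1), window_end)
payload = json.dumps(source, indent=2)
```

In a scheduled pipeline you would normally pass the window boundaries in as pipeline parameters instead of hard-coding them, so that each run covers the interval since the previous one.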

You are encouraged to give these additions a try and provide us with feedback. We hope you find them helpful in your scenarios. Please post your questions on the Azure Data Factory forum or share your thoughts with us on the Data Factory feedback site.