CGG speeds geoscience insights on Azure HPC

A leader in geosciences, CGG has been imaging the earth’s subsurface for more than 85 years. Their products and solutions help clients locate natural resources. When a customer asked whether the cloud could speed the creation of the high-resolution reservoir models that they needed, CGG turned to Azure high performance computing (HPC).

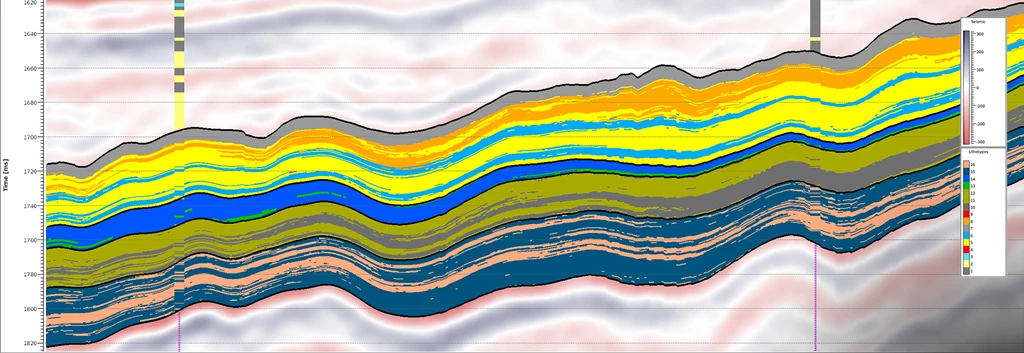

CGG is a team of acknowledged imaging experts in the oil and gas industry. Their subsurface imaging centers are internationally acclaimed, and their researchers are regularly recognized with prestigious international awards for their outstanding technical contributions to the industry. CGG was an early innovator in seismic inversion, the technique of converting seismic survey reflection data into a quantitative description of the rock properties in an oil and gas reservoir. From this data, highly detailed models can be made, like the following image showing the classification of rock types in a 3D grid for an unconventional reservoir interval. These images are invaluable in oil and gas exploration. Accurate models help geoscientists reduce risk, drill the best wells, and forecast production better.

The challenge is to get the best possible look beneath the surface. The specialized software is compute-intensive, and complex models can take hours or even days to render.

Figure 1. An advanced seismic model, using CGG’s Jason RockMod.

“Azure provides the elasticity of compute that enables our clients to rapidly generate multiple high-resolution rock property realizations with Jason RockMod for detailed reservoir models.”

– Joe Jacquot, Strategic Marketing Manager, CGG GeoSoftware

The challenge: prove it in the cloud

An oil and gas company in Southeast Asia asked CGG to work with them to find the optimum way to deploy reservoir characterization technology for their international team. The customer also wondered if they could take advantage of the cloud to save on hardware costs in their datacenter, where they already ran leading-edge CGG geoscience software solutions. They knew the theory of the cloud’s elasticity and on-demand scalability and were familiar with its use in oil exploration, but they didn’t know if their applications would perform as well in the cloud as they did on-premises.

In a rapidly moving industry, performance gains translate to speedier decision making and accelerated exploration and development timelines, so the company asked CGG to provide a demonstration. As geoscience experts, CGG wanted to show their software in the best possible light. They turned to the customer advisors at AzureCAT (led by Tony Wu) for help creating a proof of concept that demonstrated how cloud capacity could help the company get insights faster.

Demo 1: CGG Jason RockMod

CGG Jason RockMod overcomes the limitations of conventional reservoir characterization solutions with a geostatistical approach to seismic inversion. The outcome is reservoir models accurate and detailed enough that geoscientists can use them to predict field reserves, fluid flow patterns, and future production.

The company wanted to better understand the scale of the RockMod simulation in the cloud, and wondered whether the cloud offered any real benefits compared to running the simulations on their own workstations.

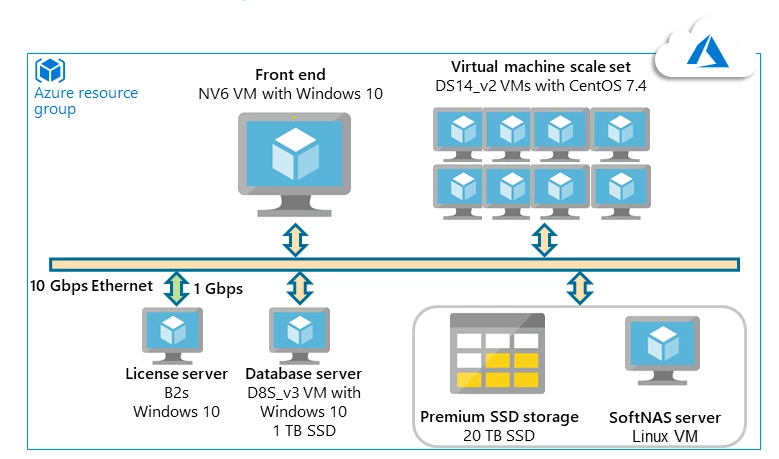

Technical teams from CGG and AzureCAT looked at RockMod as a big-compute workload. In this type of architecture, computationally intensive operations such as simulations are split across CPUs in multiple computers (from tens to thousands). A common pattern is to administer a cluster of virtual machines, then schedule and monitor the HPC jobs. This do-it-yourself approach would enable the company to set up their own cluster environment in Azure virtual machine scale sets. The number of VM instances can scale automatically, either on demand or on a defined schedule.

RockMod creates a family of multiple equi-probable simulation outputs, called realizations. In the proof-of-concept tests for their customer, CGG ran RockMod on a Microsoft Windows virtual machine on Azure. It served as the master node for job scheduling and distribution of multiple realizations across several Linux-based HPC nodes in a virtual machine scale set. Scale sets support up to 1,000 VM instances, or 300 when using custom VM images, more than enough scale for multiple realizations.
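The master-node pattern described above can be sketched in a few lines of Python. This is only an illustration of the idea, not CGG's scheduler or any Azure API: the helper `assign_realizations` and the node names are hypothetical, and the sketch assumes realizations are simply dealt out to nodes round-robin.

```python
from collections import defaultdict


def assign_realizations(num_realizations, nodes):
    """Deal realization IDs out to nodes round-robin (hypothetical helper)."""
    if not nodes:
        raise ValueError("at least one node is required")
    plan = defaultdict(list)
    for realization_id in range(num_realizations):
        # Realization i goes to node i modulo the number of nodes.
        node = nodes[realization_id % len(nodes)]
        plan[node].append(realization_id)
    return dict(plan)


# Hypothetical node names standing in for Linux HPC nodes in a scale set.
nodes = [f"hpc-node-{i:02d}" for i in range(4)]
plan = assign_realizations(10, nodes)
for node, ids in plan.items():
    print(node, ids)
```

When there are at least as many nodes as realizations, each node receives at most one realization, which is the one-to-one layout used in the benchmark runs.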

An HPC task generates the realizations, and the speed depends more on the number of CPU cores than on the processor speed. AzureCAT recommended non-hyperthreaded cores as the best choice for application performance. From a storage perspective, the realizations are not especially demanding. During testing, the storage IO rate went up to a few hundred megabytes per second (MB/s). Based on these considerations, CGG chose the cost-effective DS14 v2 virtual machine with accelerated networking, which has 16 cores and 7 GB of memory per core.

“Azure provided the ideal blend of HPC and storage performance and price, along with technical support that we needed to successfully demonstrate our high-end reservoir characterization technology on the cloud. It was a technical success and paved the way for full-scale commercial deployment.”

– Joe Jacquot, Strategic Marketing Manager, CGG GeoSoftware

Figure 2. Azure architecture of CGG Jason RockMod.

Benchmarks and benefits

A typical project is about 15 GB in size and includes more than three million seismic data traces. Some of the in-house workstations could render a single realization within a day or two, but the goal was to run 30 realizations by taking advantage of the cloud’s scalability. The initial small-scale test ran one realization on one HPC node, which took just under 12 hours to complete. That was within the target range, and now the CGG team needed to see what would happen when they ran at scale.

To test the linear scalability of the job, they first ran eight realizations on eight nodes. The results were nearly identical to the small-scale test run. The one-realization-to-one-node formula seemed to work. For the sake of comparison, they tried running 30 realizations on just eight nodes. That didn’t work so well: the tests were nearly four times slower.

The final test ran 30 realizations on 30 nodes, one realization to one node. The results were similar to the small-scale test, and the job was completed in just over 12 hours. This scenario was tested several times to validate the results, which were consistent. The test was a success.
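The benchmark pattern above is consistent with a simple back-of-the-envelope model. The sketch below is an assumption-laden estimate, not a description of how the tests were scheduled: it assumes each node works on one realization at a time, each realization takes roughly 12 hours, and scheduling overhead is ignored. The function name `estimated_hours` is hypothetical.

```python
import math


def estimated_hours(realizations, nodes, hours_per_realization=12.0):
    """Estimate wall-clock hours if each node runs one realization at a time."""
    if nodes < 1:
        raise ValueError("at least one node is required")
    # Realizations are processed in "waves" of at most `nodes` at once.
    waves = math.ceil(realizations / nodes)
    return waves * hours_per_realization


# The four configurations mentioned in the benchmark discussion.
for realizations, nodes in [(1, 1), (8, 8), (30, 8), (30, 30)]:
    hours = estimated_hours(realizations, nodes)
    print(f"{realizations:>2} realizations on {nodes:>2} nodes: ~{hours:.0f} h")
```

Under these assumptions, 30 realizations on eight nodes need four waves, about four times the single-run time, which is in line with the reported slowdown. With one node per realization, the estimate stays at a single 12-hour wave.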

Demo 2: CGG InsightEarth

In their exploration and development efforts, the company also used CGG InsightEarth, a software suite that accelerates 3D interpretation and visualization.

The interpretation teams at the company were using several InsightEarth applications to locate hydrocarbon deposits, faults and fractures, and salt bodies, all of which can be difficult to interpret. They asked CGG to compare the performance of the InsightEarth suite on Azure to their workstations on premises. Their projects were typically 15 GB in size, with more than three million seismic data traces.

InsightEarth is a powerful, memory-intensive application. To get the best performance, the application must run on a GPU-based computer, and to get the best performance from GPUs, efficient memory access is critical. To meet this requirement on Azure, the team of engineers from CGG and AzureCAT looked at the specialized, GPU-optimized VM sizes that are available. The Azure NV-series virtual machines are powered by NVIDIA Tesla M60 GPUs and the NVIDIA GRID technology with Intel Broadwell CPUs, which are suited for compute-intensive visualizations and simulations.

The GRID license gives the company the flexibility to use an NV instance as a virtual workstation for a single user, or to give 25 users concurrent access to the VM running InsightEarth. The company wanted the collaborative benefits of the second model. Unlike a physical workstation, a cloud-based GPU virtual workstation would allow them all to view the data after running a pre-processing or interpretation step because all the data was in the cloud. This would streamline their workflow, eliminating the need to move the data back on premises for that task.

The initial performance tests ran InsightEarth on an NV24 VM on Azure. The storage IO demand was moderate, with rates around 100 MB/s. However, the VM’s memory size proved to be a bottleneck. The amount of data that could be loaded into memory was limited, and the performance wasn’t as good as on the more powerful GPU setup used on premises.

Next, the team ran a similar test using an ND-series VM. This type is specifically designed to offer excellent performance for AI and deep learning workloads, where huge data volumes are used to train models. They chose an ND24-size VM with double the memory and the newer NVIDIA Tesla P40 GPUs. This time, the results were considerably better than with the NV24.

From an infrastructure perspective, storage also matters when deploying high-performance applications on Azure. The team implemented SoftNAS, a type of storage that supports both the Windows and Linux VMs used in the overall solution. SoftNAS also suits the lower to mid-range storage IO demands for these simulation and interpretation workloads.

Figure 3. Azure architecture for InsightEarth.

Summary

GPUs and memory-optimized VMs provided the performance that the company needed for both Jason RockMod and InsightEarth. Better yet, Azure gave them the scale they needed to run multiple realizations in parallel. In addition, they deployed GPUs to both software solutions, giving the CGG engineers a standard front end to work with. They set up access to the Azure resources through an easy-to-use portal based on Citrix Cloud, which also runs on Azure.

CGG’s customer, the oil and gas company, was delighted with the results. Based on the results of the benchmark tests, the oil and gas company decided to move all of their current CGG application workload to Azure. The cloud’s elasticity and Azure’s pay-per-use model were a compelling combination. Not only did the Azure solution perform, it proved to be more cost-effective compared to the limited scalability they could achieve with their computing power on-premises.

| | |
|---|---|
| Company: | CGG |
| Microsoft Azure CAT Technical Lead: | Tony Wu |